Recurrent Neural Networks and Sequence-to-Sequence Models

EE 641 - Unit 4

Fall 2025

Introduction

Outline

Theory

- State machines as temporal systems

- Linear dynamical systems & stability

- RNNs as nonlinear AR filters

- Product of Jacobians: \(\prod \|\mathbf{W}\| \cdot |\sigma'|\)

- Effective horizon: γ^T with γ < 1

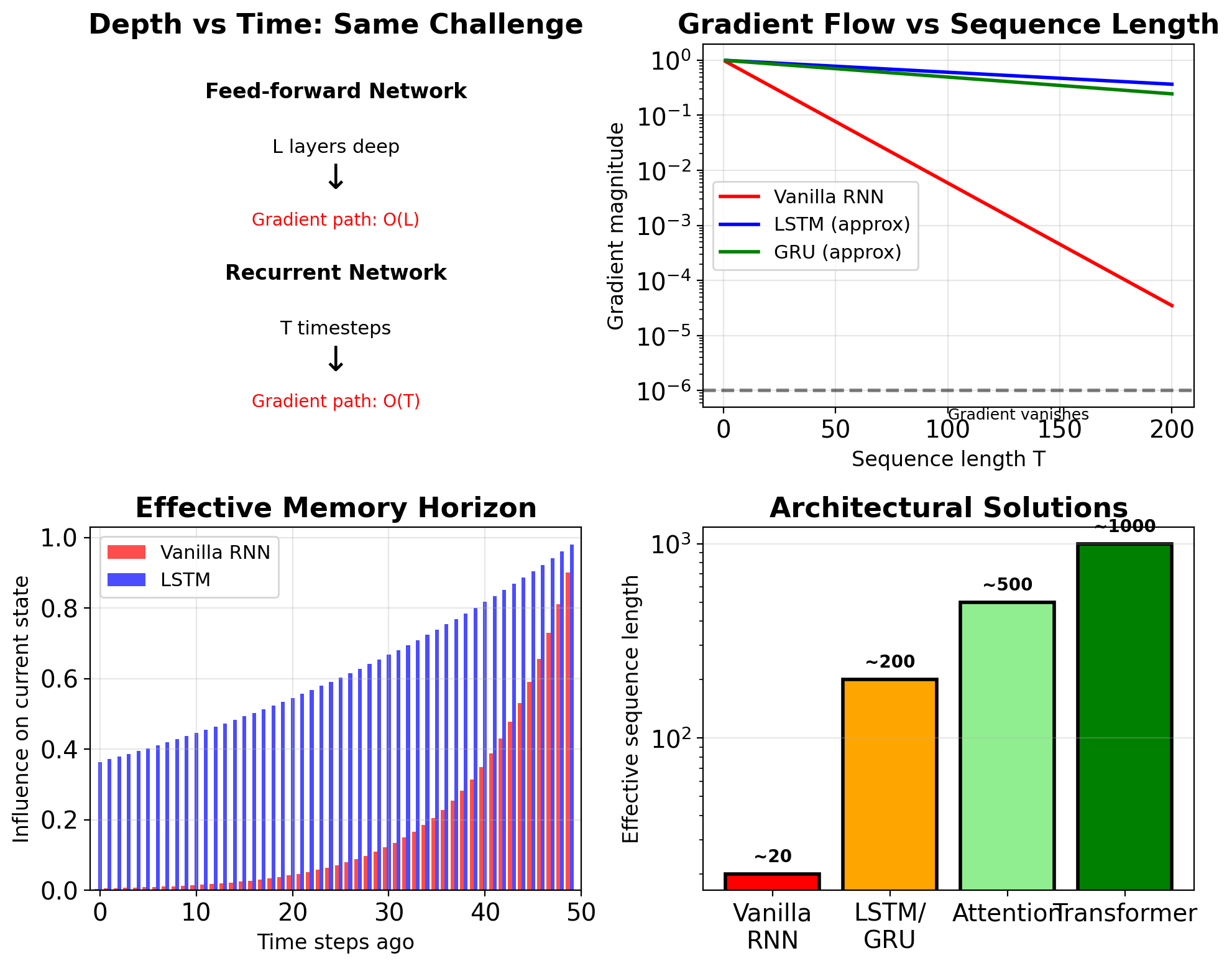

- Why vanilla RNNs fail at T > 20

- Unrolling through time

- O(T²) memory complexity

- Truncation trade-offs

Architectures & Applications

- Gates create gradient highways

- Linear cell state path

- 100+ step memory capability

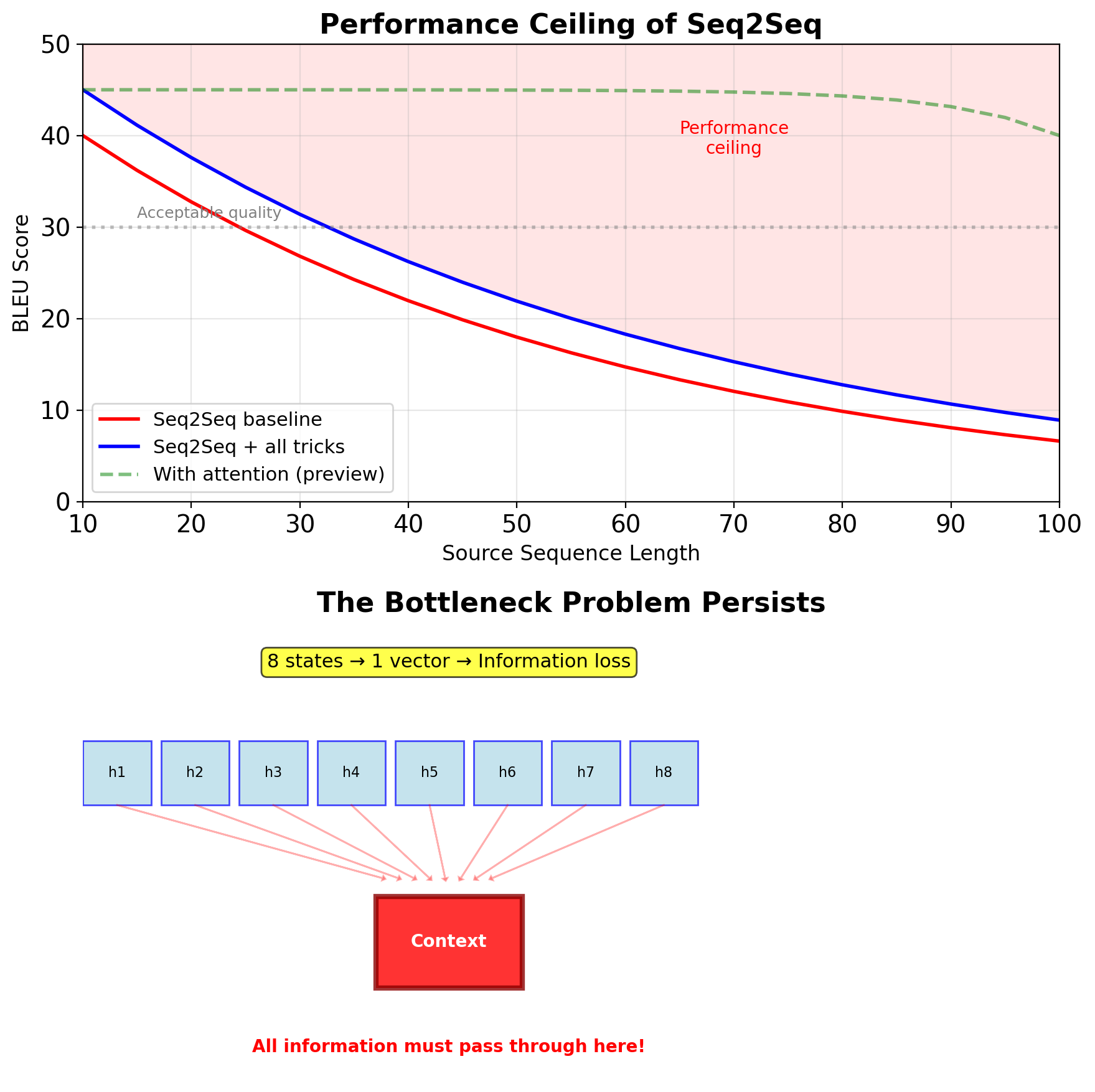

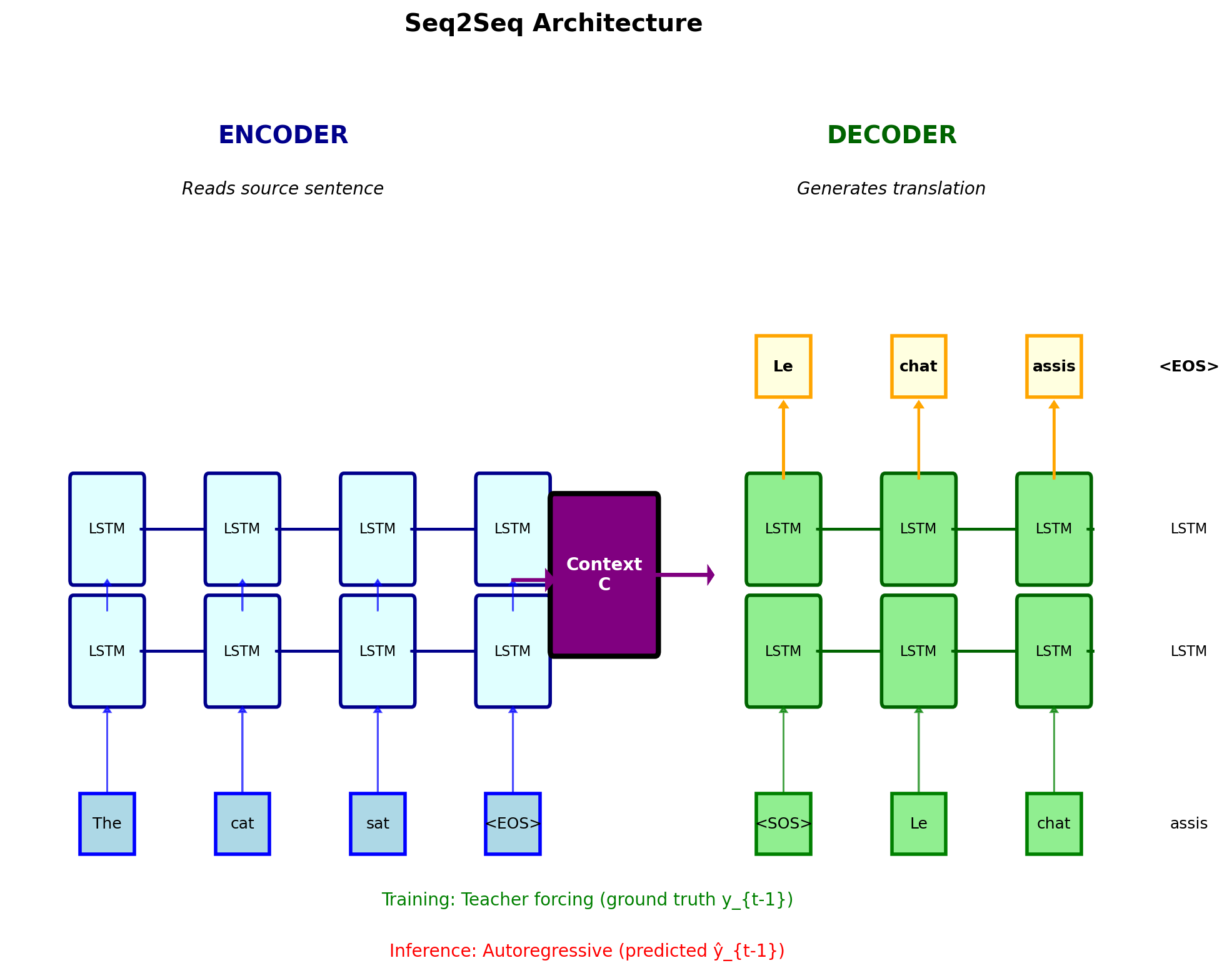

- Encoder-decoder framework

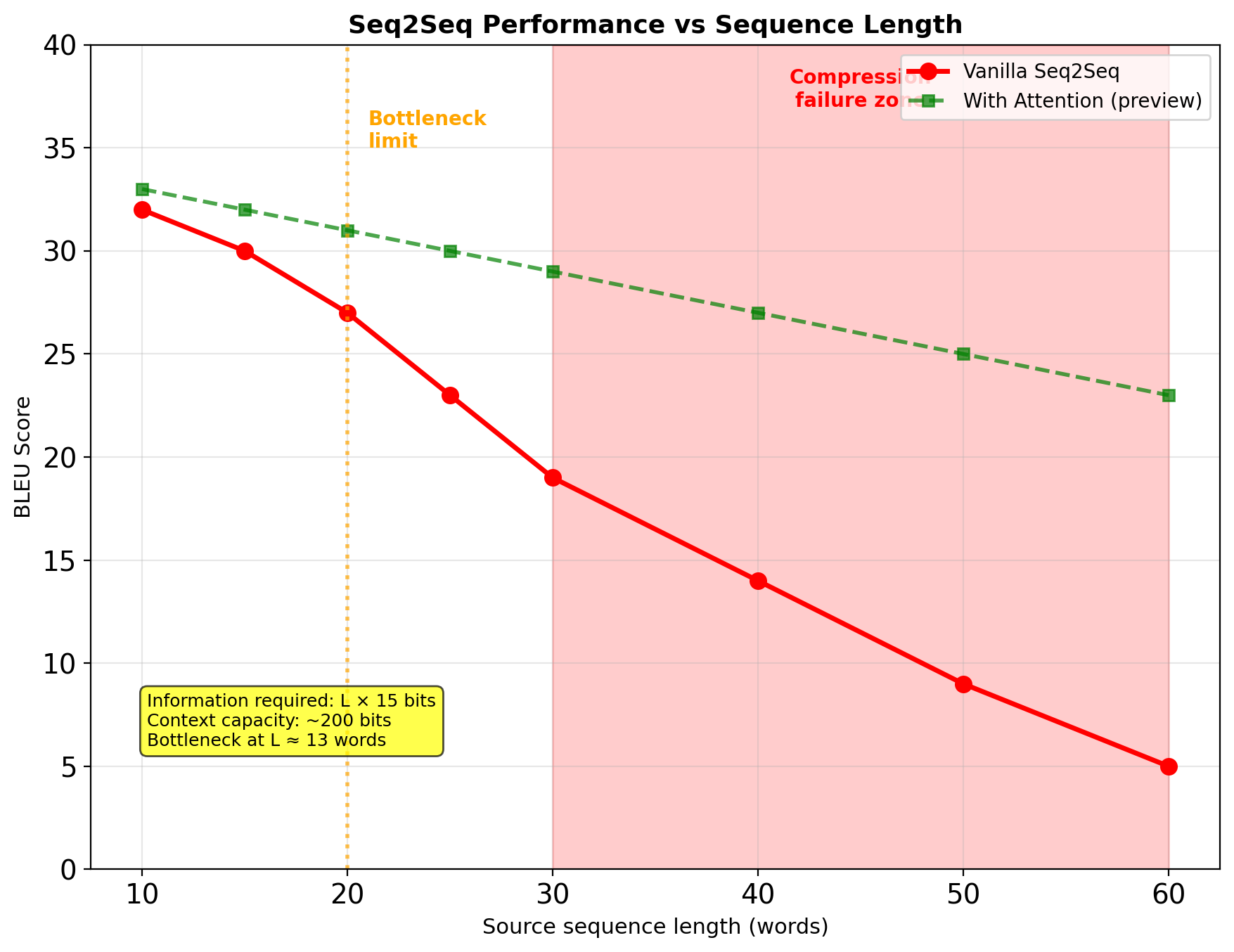

- Fixed bottleneck problem

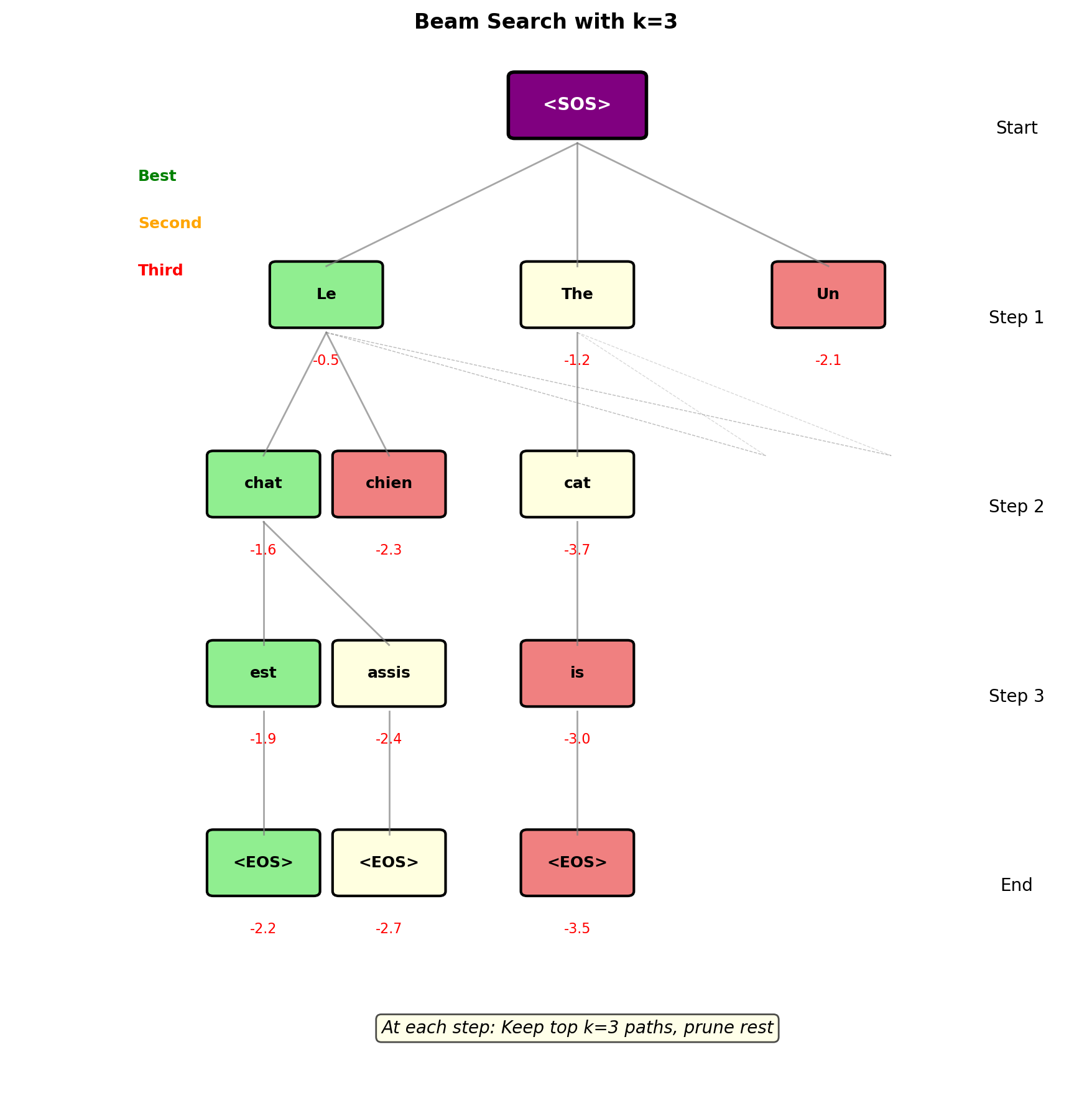

- Beam search decoding

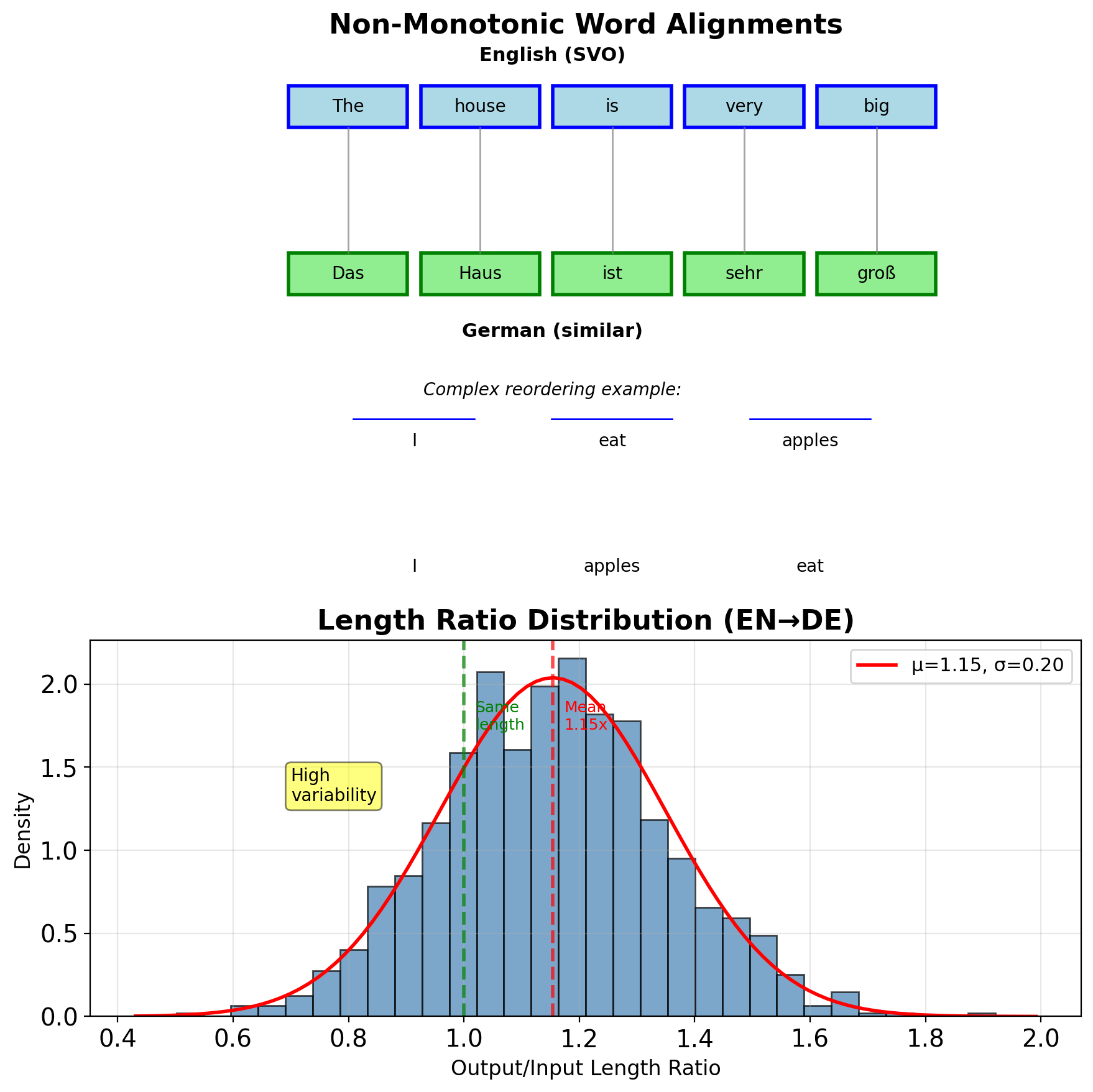

- Variable length mapping

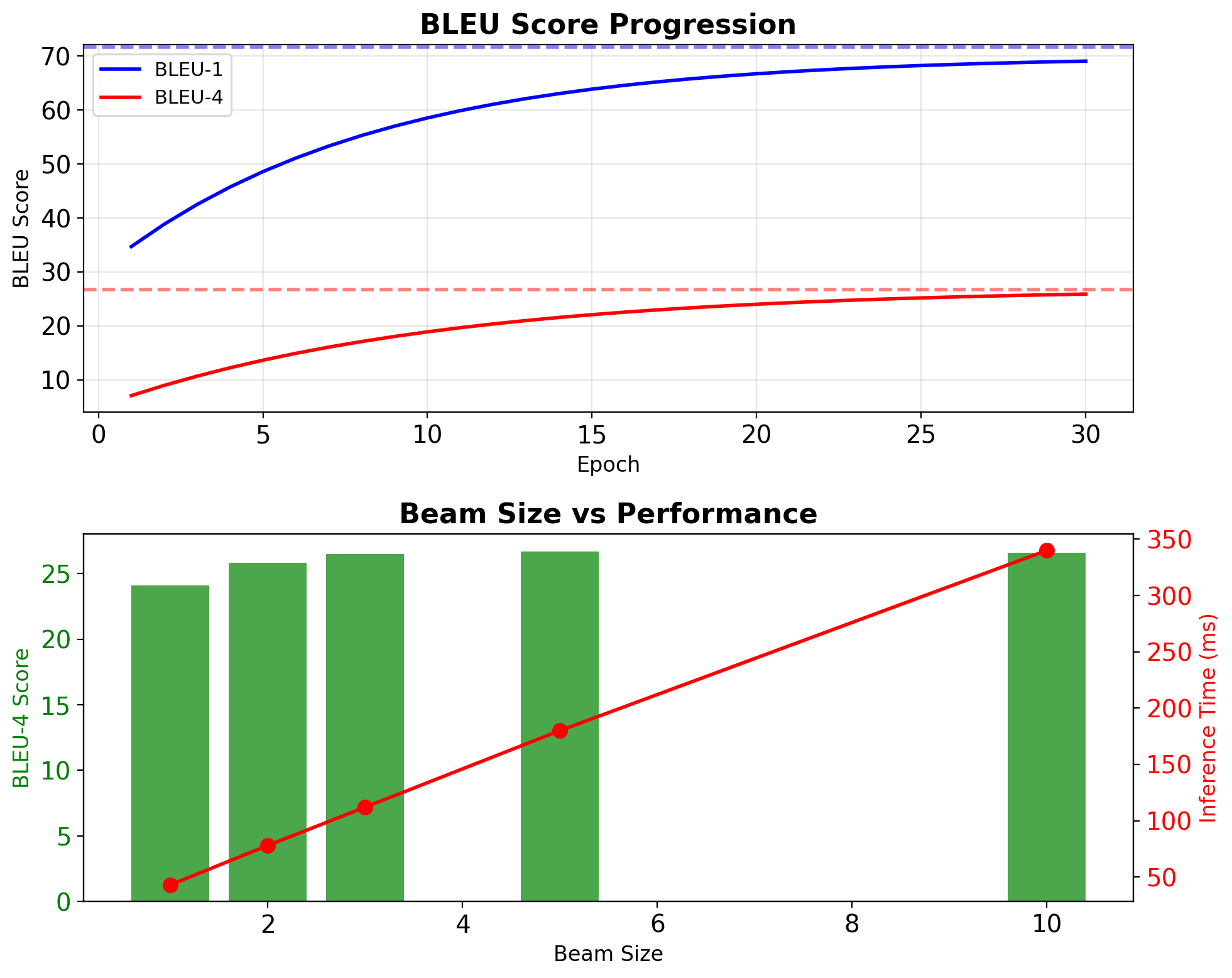

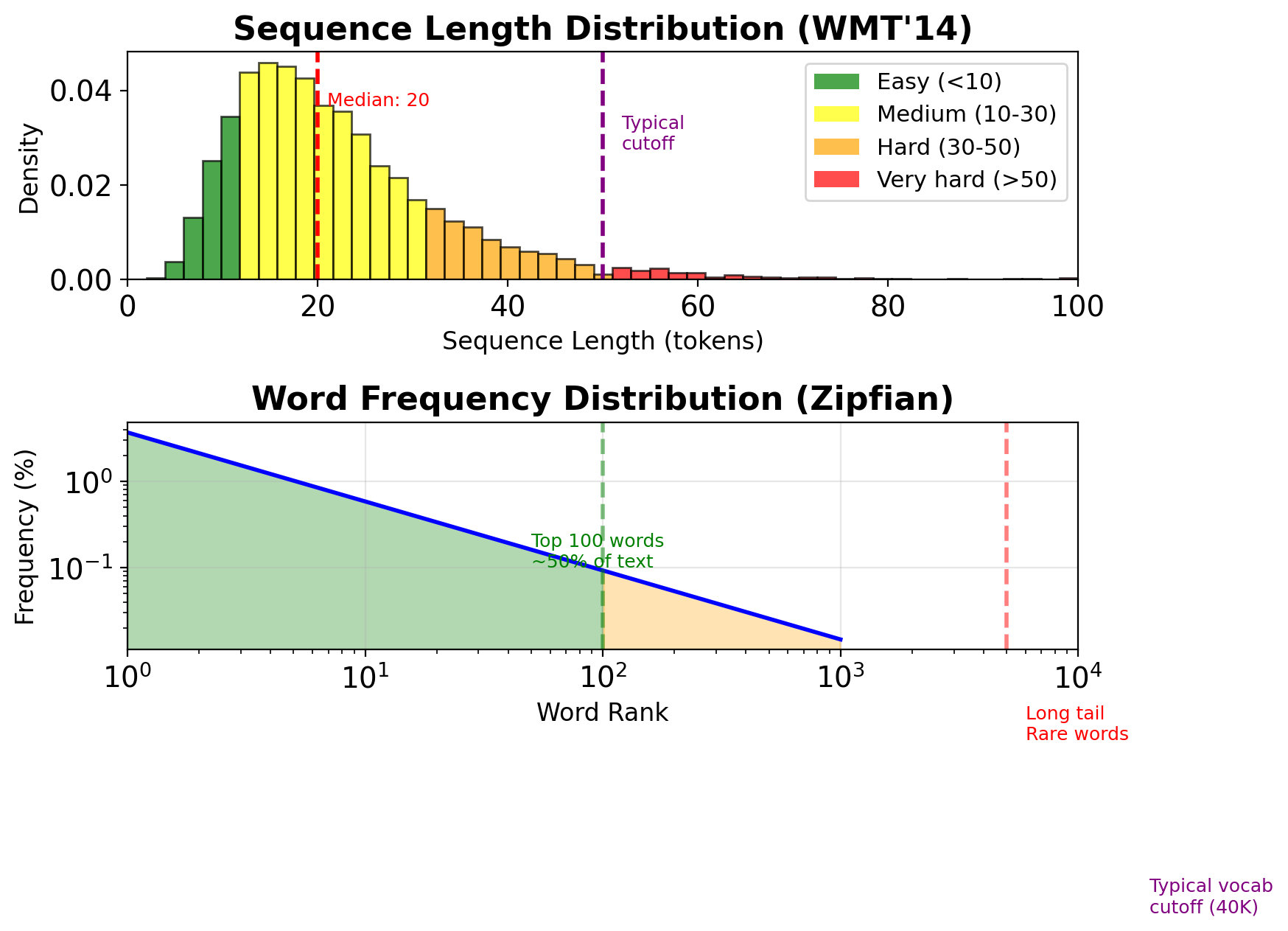

- BLEU evaluation (WMT’14: ~30)

- Information theoretic limits

Reading List

[Gradients] Y. Bengio, P. Simard, and P. Frasconi, “Learning long-term dependencies with gradient descent is difficult,” IEEE Transactions on Neural Networks, vol. 5, no. 2, pp. 157–166, 1994.

[LSTM] S. Hochreiter and J. Schmidhuber, “Long short-term memory,” Neural Computation, vol. 9, no. 8, pp. 1735–1780, 1997.

[RNN] I. Goodfellow, Y. Bengio, and A. Courville, “Deep Learning,” MIT Press, 2016, Chapter 10: Sequence Modeling: Recurrent and Recursive Nets.

[GRU] K. Cho, B. van Merriënboer, C. Gulcehre, D. Bahdanau, F. Bougares, H. Schwenk, and Y. Bengio, “Learning phrase representations using RNN encoder–decoder for statistical machine translation,” in Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing, 2014, pp. 1724–1734.

[Seq2Seq] I. Sutskever, O. Vinyals, and Q. V. Le, “Sequence to sequence learning with neural networks,” in Advances in Neural Information Processing Systems, 2014, pp. 3104–3112.

[BPTT] P. J. Werbos, “Backpropagation through time: What it does and how to do it,” Proceedings of the IEEE, vol. 78, no. 10, pp. 1550–1560, 1990.

[Audio] A. Graves, A.-R. Mohamed, and G. Hinton, “Speech recognition with deep recurrent neural networks,” in 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, 2013, pp. 6645–6649.

[Vision] A. Graves, M. Liwicki, S. Fernández, R. Bertolami, H. Bunke, and J. Schmidhuber, “A novel connectionist system for unconstrained handwriting recognition,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, pp. 855–868, 2008.

[Language] A. Karpathy, J. Johnson, and L. Fei-Fei, “Visualizing and understanding recurrent networks,” arXiv preprint arXiv:1506.02078, 2015.

[Medical] Z. C. Lipton, D. C. Kale, C. Elkan, and R. Wetzel, “Learning to diagnose with LSTM recurrent networks,” in International Conference on Learning Representations, 2016.

Mathematical Foundations

State Machines and Temporal Systems

State Machine Formalism

State transition function: \[\mathbf{s}_{t+1} = f(\mathbf{s}_t, \mathbf{u}_t)\]

Output function: \[\mathbf{y}_t = g(\mathbf{s}_t, \mathbf{u}_t)\]

where:

- \(\mathbf{s}_t\) : State at time \(t\)

- \(\mathbf{u}_t\) : Input at time \(t\)

- \(\mathbf{y}_t\) : Output at time \(t\)

Applications: digital circuits (FSMs), control systems, signal processing, recurrent neural networks

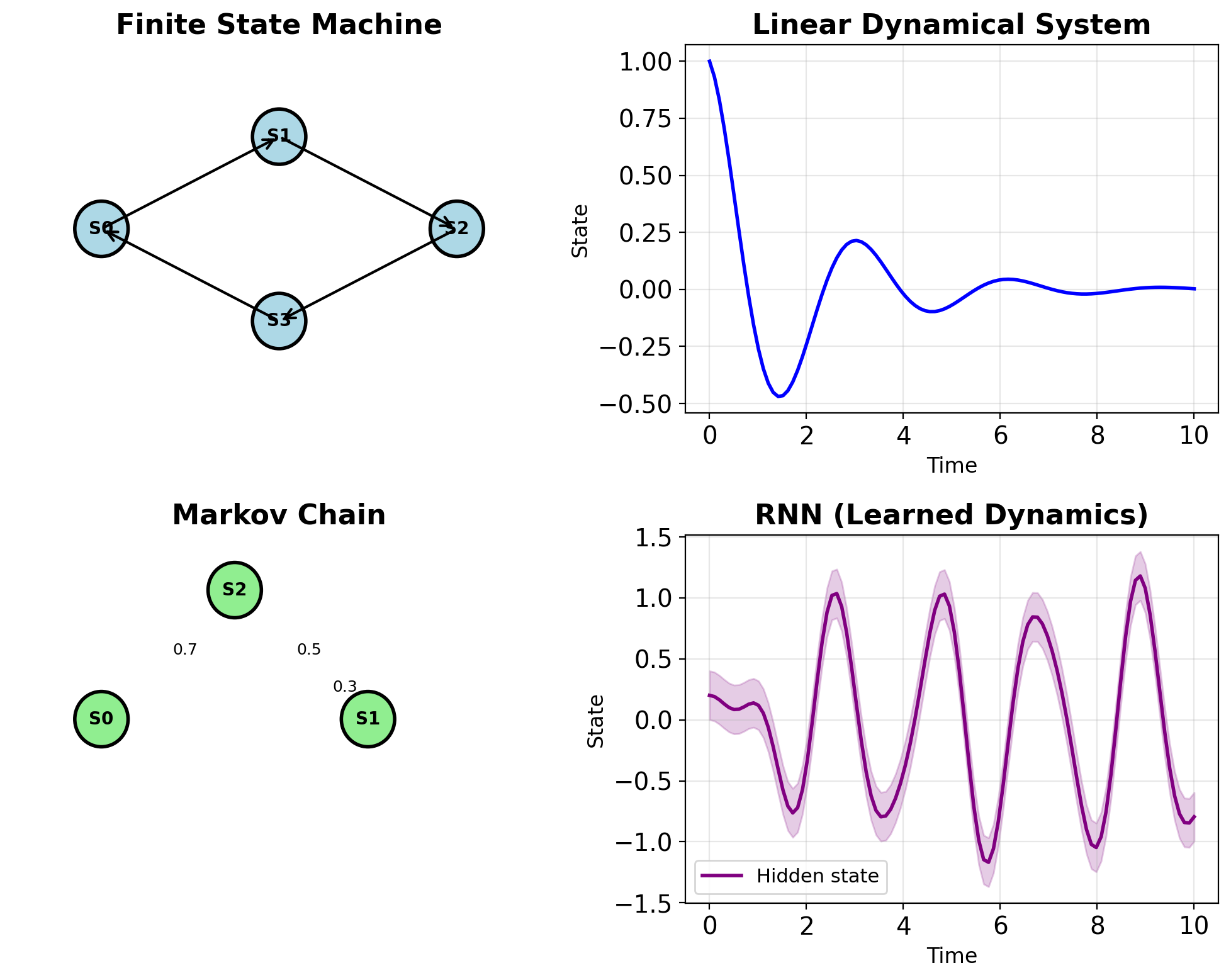

State Machine Complexity Hierarchy

| Type | State Space | Dynamics |

|---|---|---|

| FSM | Discrete, finite | Designed |

| Markov Chain | Discrete | Stochastic |

| Linear System | Continuous | Linear, fixed |

| Nonlinear System | Continuous | Nonlinear, fixed |

| RNN | Continuous | Nonlinear, learned |

RNNs optimize dynamics from data

- FSM: Hand-designed state transitions

- Linear system: Physics-based equations

- RNN: Gradient-based optimization

State Captures Temporal History

Without state (memoryless): \[\mathbf{y}_t = f(\mathbf{x}_t)\]

With explicit history: \[\mathbf{y}_t = f(\mathbf{x}_t, \mathbf{x}_{t-1}, ..., \mathbf{x}_{t-k})\]

With state (compressed history): \[\mathbf{s}_t = g(\mathbf{s}_{t-1}, \mathbf{x}_t)\] \[\mathbf{y}_t = h(\mathbf{s}_t)\]

Trade-offs:

- Explicit history: High memory, exact

- State compression: Low memory, approximate

- Information bottleneck: \(\mathbf{s}_t \in \mathbb{R}^H\) must encode all relevant past

Memory complexity comparison:

| Approach | Memory | Computation | Gradient Flow |

|---|---|---|---|

| Explicit history | \(O(k \times d)\) | \(O(k \times d)\) per step | Direct to each \(\mathbf{x}_{t-i}\) |

| State compression | \(O(H)\) | \(O(H^2)\) per step | Through state chain |

State influence decay:

For state-based compression with linear dynamics: \[\text{Influence}(\mathbf{x}_{t-k}) \approx \|\mathbf{W}\|^k \cdot \|\mathbf{x}_{t-k}\|\]

- Exponential decay with \(\|\mathbf{W}\| < 1\)

- Information loss is inevitable

- Trade memory for computational efficiency

Practical implications:

- Transformers use explicit history: \(O(T^2)\) attention

- RNNs use state compression: \(O(1)\) per step

- Hybrid approaches balance both (e.g., Transformer-XL)

RNNs Generalize All Forms of State Machines

| Aspect | FSM | Linear System | RNN |

|---|---|---|---|

| State space | \(\{S_1, ..., S_n\}\) | \(\mathbb{R}^n\) | \(\mathbb{R}^H\) |

| Transition | Lookup table | \(\mathbf{s}_t = \mathbf{A}\mathbf{s}_{t-1} + \mathbf{B}\mathbf{u}_t\) | \(\mathbf{s}_t = \tanh(\mathbf{W}_{ss}\mathbf{s}_{t-1} + \mathbf{W}_{xs}\mathbf{x}_t)\) |

| Parameters | Designed | Fixed by physics | Learned from data |

| Optimization | Manual | System ID | Backpropagation |

| Capacity | \(\log_2(n)\) bits | Infinite (continuous) | Infinite (continuous) |

RNNs are universal approximators of dynamical systems

- Theoretical capability with sufficient hidden units

- Practically limited by vanishing gradients and finite data

- Effective memory horizon: ~10-20 steps without gating

Differences:

FSM:

- 8 discrete states → 3 bits of information

- Transitions are table lookups

- No interpolation between states

Linear System:

- Continuous state evolution

- Exponential decay/growth patterns

- Fixed by eigenvalues: \(e^{\lambda t}\)

RNN:

- Approximates arbitrary nonlinear dynamics

- Adapts trajectory through training

- Can approximate both FSM and linear systems

Mathematical capacity:

- FSM: \(2^n\) states with n bits

- Linear: Defined by n×n matrix eigenvalues

- RNN: Universal approximator with sufficient hidden units

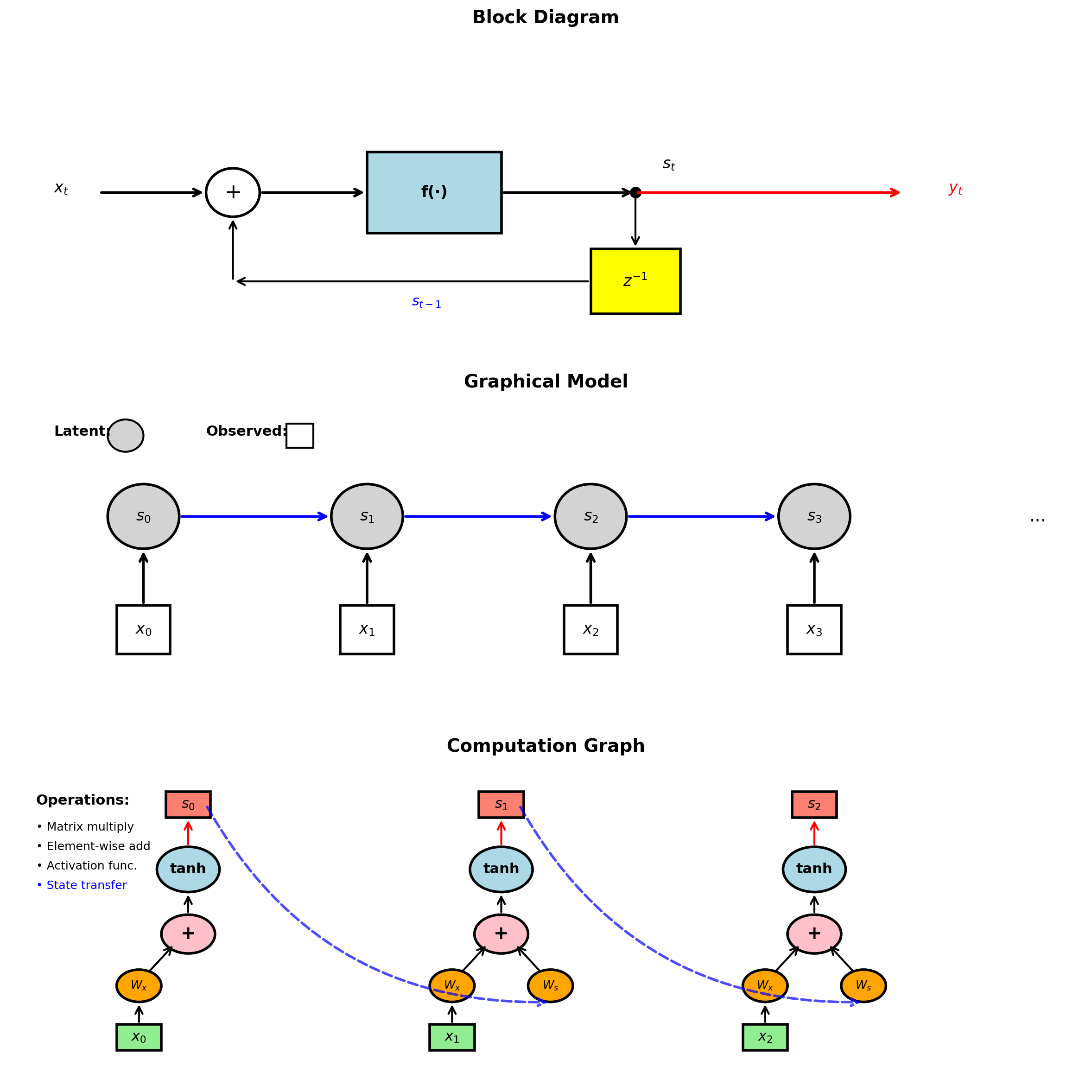

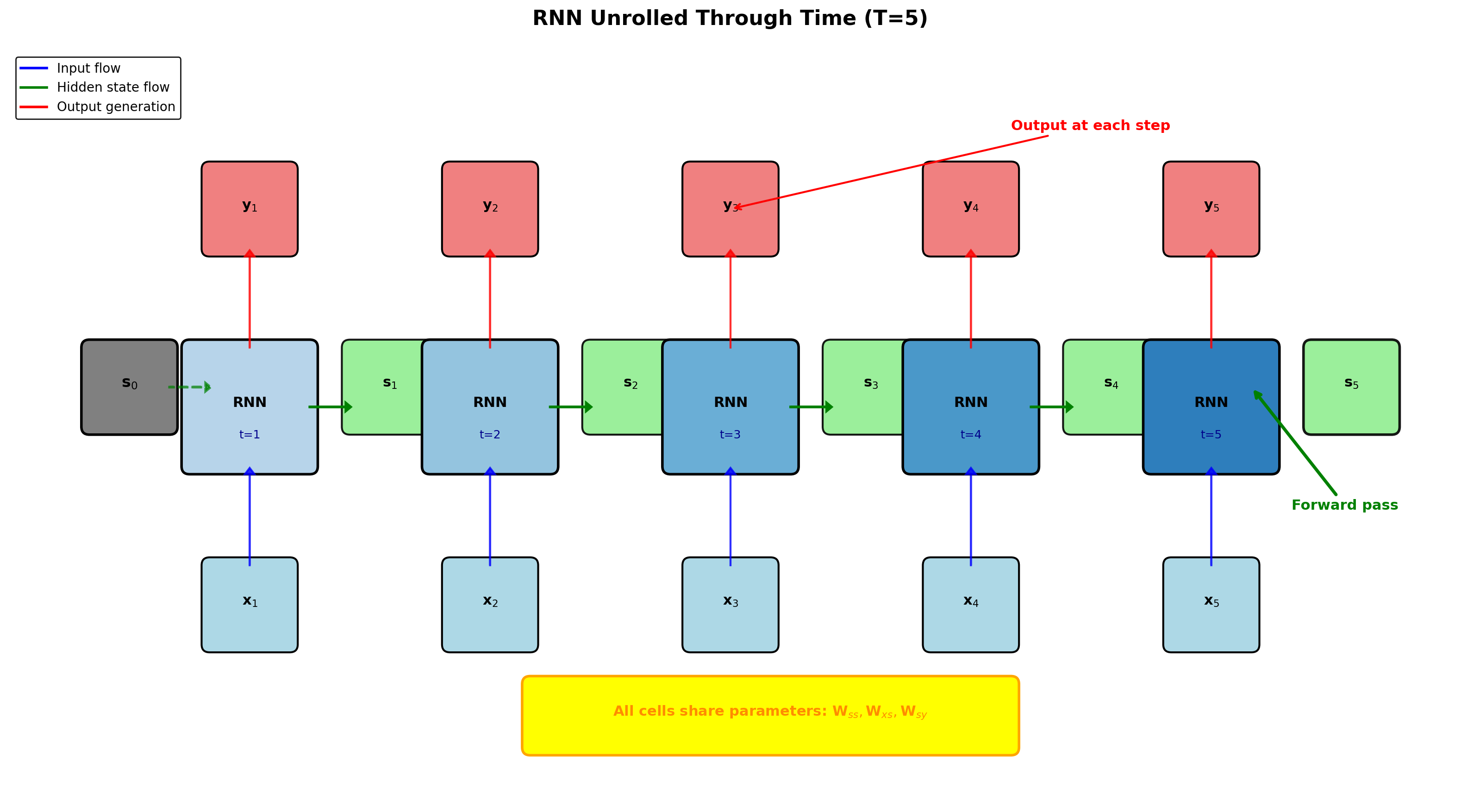

Recurrent and Unrolled Representations Are Mathematically Equivalent

Recurrent form (compact):

- Single state update block

- Feedback connection

- Direct implementation mapping

Unrolled form (explicit):

- Time as spatial dimension

- Each timestep = layer

- Explicit gradient computation

Mathematical equivalence: \[\mathbf{s}_t = f(\mathbf{s}_{t-1}, \mathbf{x}_t) = f(f(\mathbf{s}_{t-2}, \mathbf{x}_{t-1}), \mathbf{x}_t)\]

Unrolling depth = sequence length \(T\)

- Gradient path length: \(O(T)\)

- Memory requirement: \(O(T \times H)\)

Multiple Representations of RNNs

Block diagram (Controls/DSP):

- Emphasizes signal flow

- Shows delay elements explicitly

- Standard in filter design

Graphical model (ML):

- Emphasizes dependencies

- Probabilistic interpretation

- Probabilistic inference ready

Computation graph (Deep Learning):

- Emphasizes operations

- Shows gradient flow

- Automatic differentiation compatible

All represent the same mathematics.

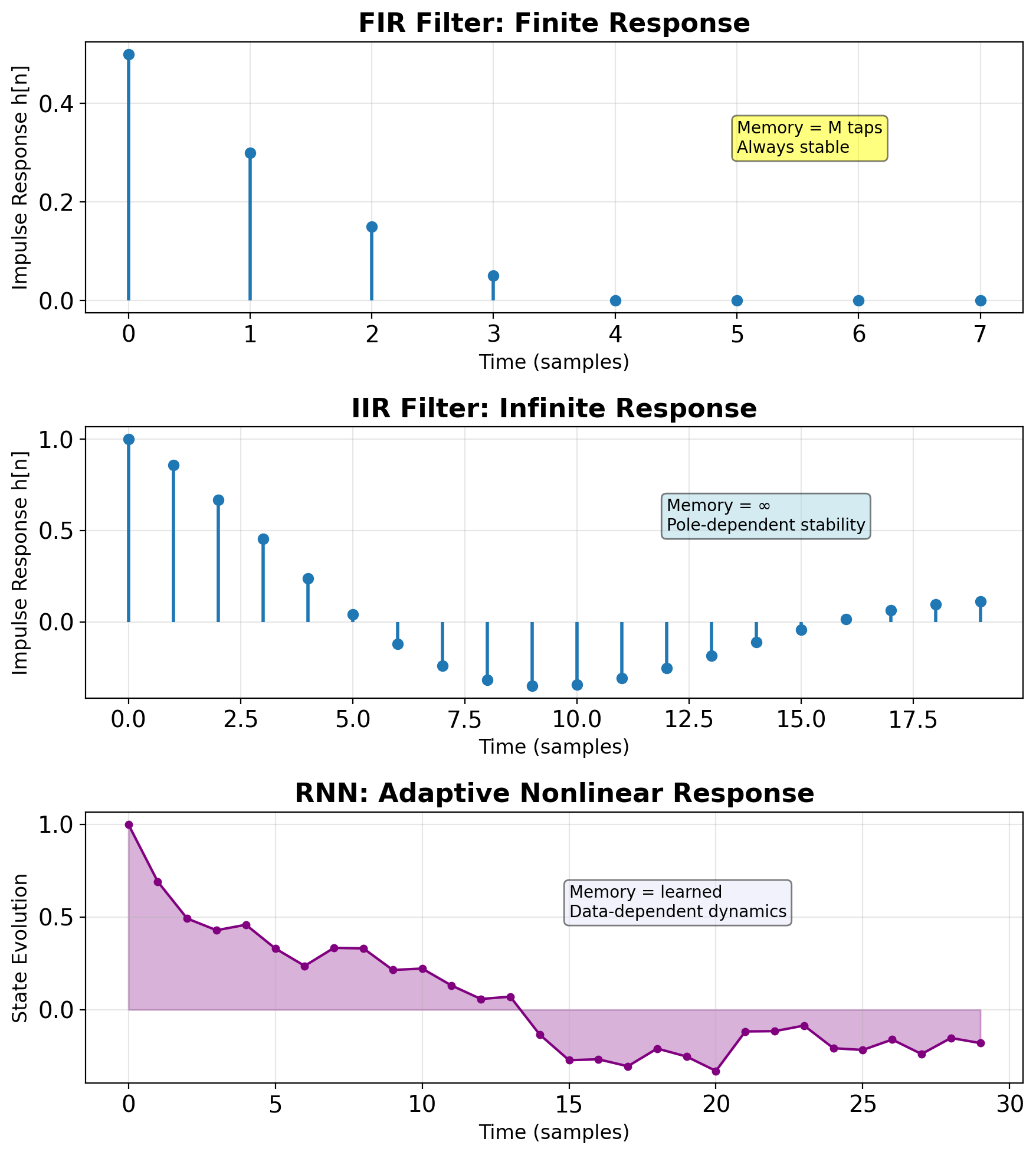

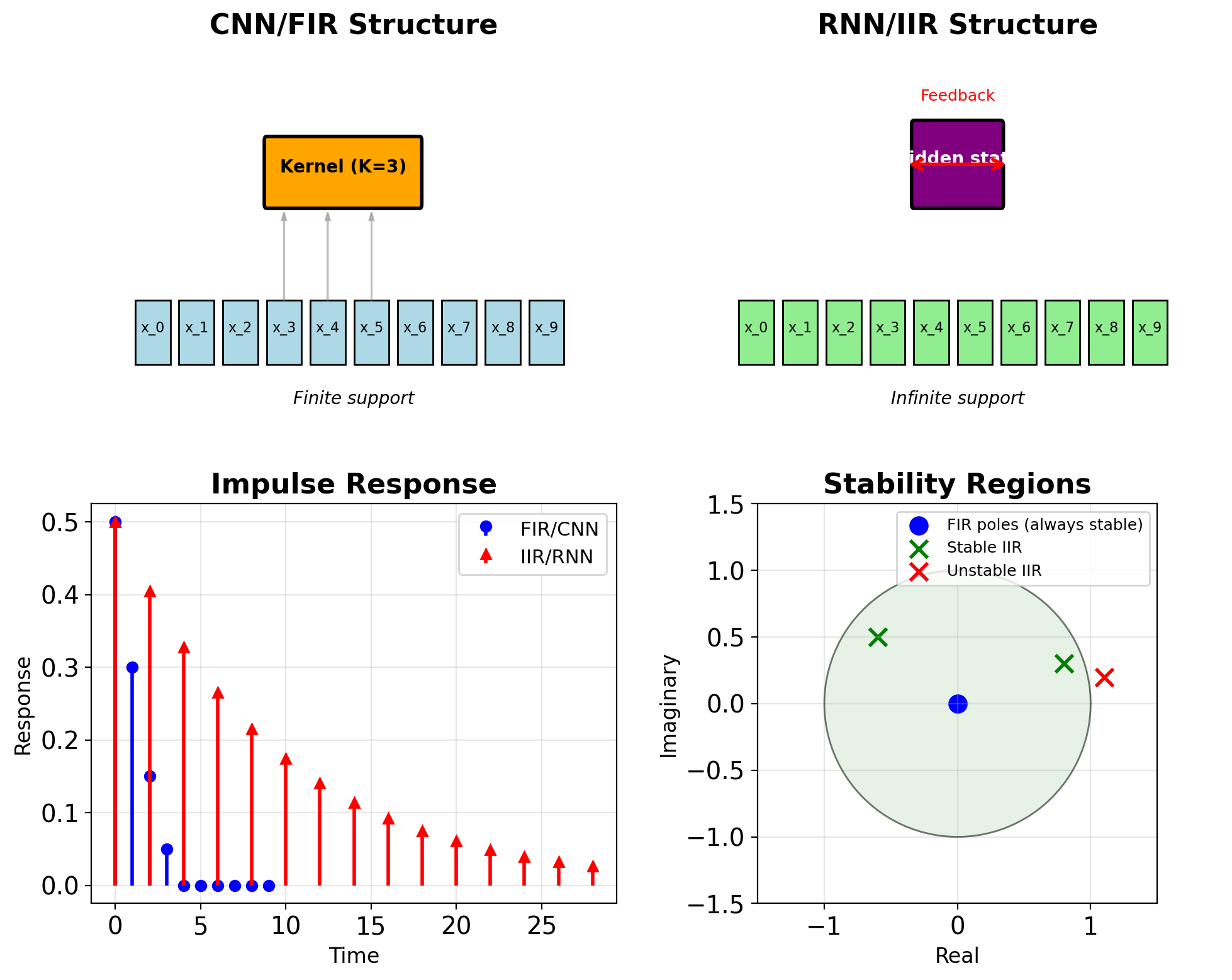

RNNs as Adaptive Nonlinear Filters

| Aspect | FIR Filter | IIR Filter | RNN |

|---|---|---|---|

| Response | Finite | Infinite | Infinite |

| Memory | Explicit (taps) | Implicit (poles) | Implicit (state) |

| Stability | Always stable | Depends on poles | Learned stability |

| Adaptation | LMS/RLS | Adaptive IIR | Backpropagation |

| Nonlinearity | None | None | Activation functions |

Signal processing interpretation:

- Hidden state = filter state variables

- Weights = filter coefficients

- Activation = nonlinear transfer function

- Training = adaptive filter optimization

RNNs: nonlinear adaptive filters in high-dimensional spaces

Discrete-Time Linear State-Space Systems

State update: \[\mathbf{s}_t = \mathbf{A}\mathbf{s}_{t-1} + \mathbf{B}\mathbf{u}_t\]

Output: \[\mathbf{y}_t = \mathbf{C}\mathbf{s}_t + \mathbf{D}\mathbf{u}_t\]

where:

- \(\mathbf{s}_t \in \mathbb{R}^n\) : state vector

- \(\mathbf{u}_t \in \mathbb{R}^m\) : input vector

- \(\mathbf{y}_t \in \mathbb{R}^p\) : output vector

- \(\mathbf{A} \in \mathbb{R}^{n \times n}\) : state transition matrix

- \(\mathbf{B} \in \mathbb{R}^{n \times m}\) : input matrix

- \(\mathbf{C} \in \mathbb{R}^{p \times n}\) : output matrix

Linearity enables closed-form analysis

Recursive Unrolling of State Evolution

Starting from \(\mathbf{s}_0\): \[\mathbf{s}_1 = \mathbf{A}\mathbf{s}_0 + \mathbf{B}\mathbf{u}_1\] \[\mathbf{s}_2 = \mathbf{A}\mathbf{s}_1 + \mathbf{B}\mathbf{u}_2 = \mathbf{A}^2\mathbf{s}_0 + \mathbf{A}\mathbf{B}\mathbf{u}_1 + \mathbf{B}\mathbf{u}_2\]

General solution: \[\mathbf{s}_t = \mathbf{A}^t\mathbf{s}_0 + \sum_{i=0}^{t-1}\mathbf{A}^i\mathbf{B}\mathbf{u}_{t-i}\]

Two components:

- Homogeneous: \(\mathbf{A}^t\mathbf{s}_0\) (initial condition response)

- Particular: \(\sum_{i=0}^{t-1}\mathbf{A}^i\mathbf{B}\mathbf{u}_{t-i}\) (input response)

Long-term behavior dominated by \(\mathbf{A}^t\)

Diagonalization of System Dynamics

For diagonalizable \(\mathbf{A}\): \[\mathbf{A} = \mathbf{V}\boldsymbol{\Lambda}\mathbf{V}^{-1}\]

where:

- \(\mathbf{V} = [\mathbf{v}_1, ..., \mathbf{v}_n]\) : eigenvectors

- \(\boldsymbol{\Lambda} = \text{diag}(\lambda_1, ..., \lambda_n)\) : eigenvalues

Powers simplify: \[\mathbf{A}^t = \mathbf{V}\boldsymbol{\Lambda}^t\mathbf{V}^{-1}\] \[\boldsymbol{\Lambda}^t = \text{diag}(\lambda_1^t, ..., \lambda_n^t)\]

Eigenspace interpretation:

- Transform to eigenspace: \(\tilde{\mathbf{s}} = \mathbf{V}^{-1}\mathbf{s}\)

- Evolution: \(\tilde{s}_i(t) = \lambda_i^t \tilde{s}_i(0)\)

- Each mode evolves independently

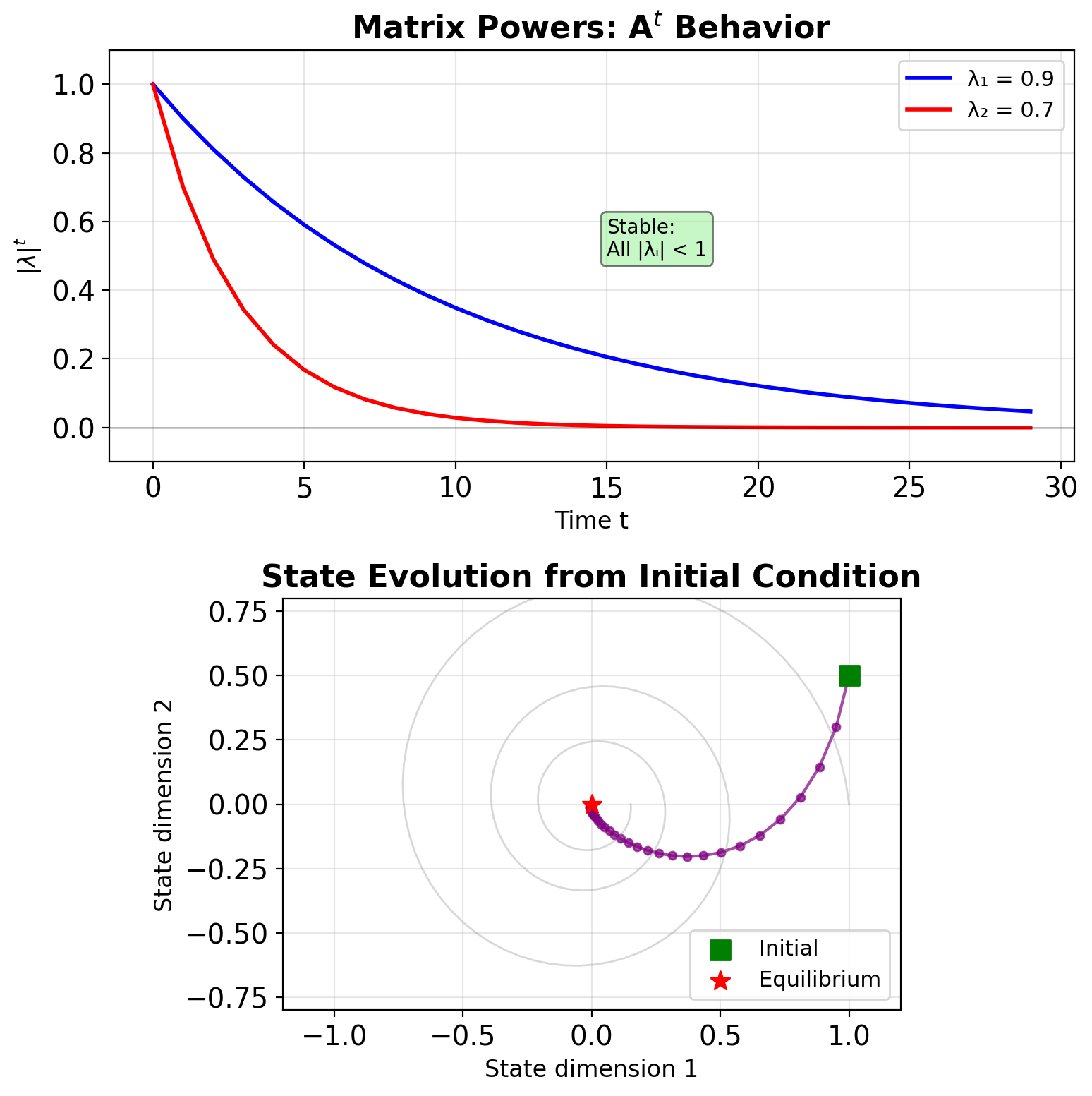

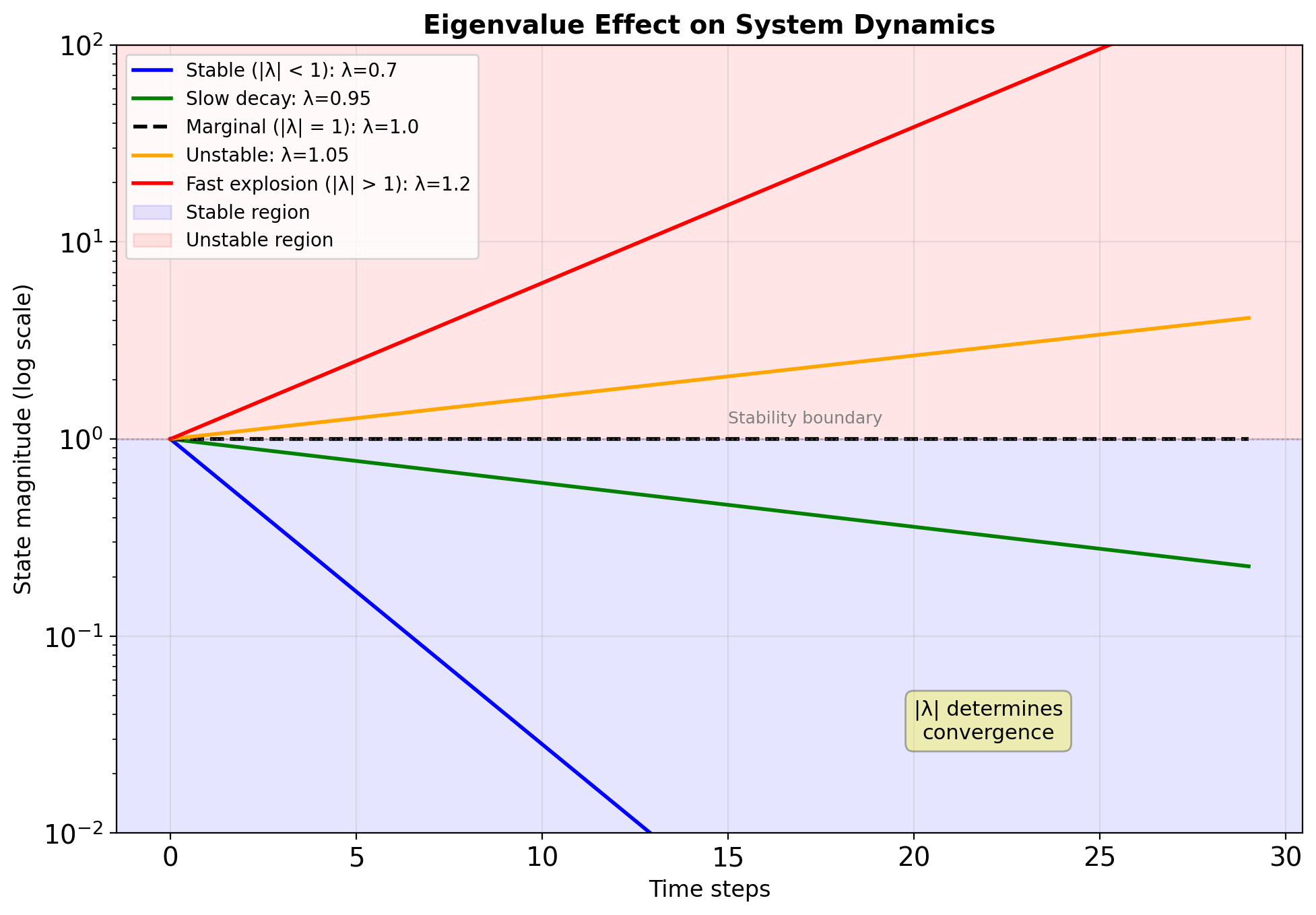

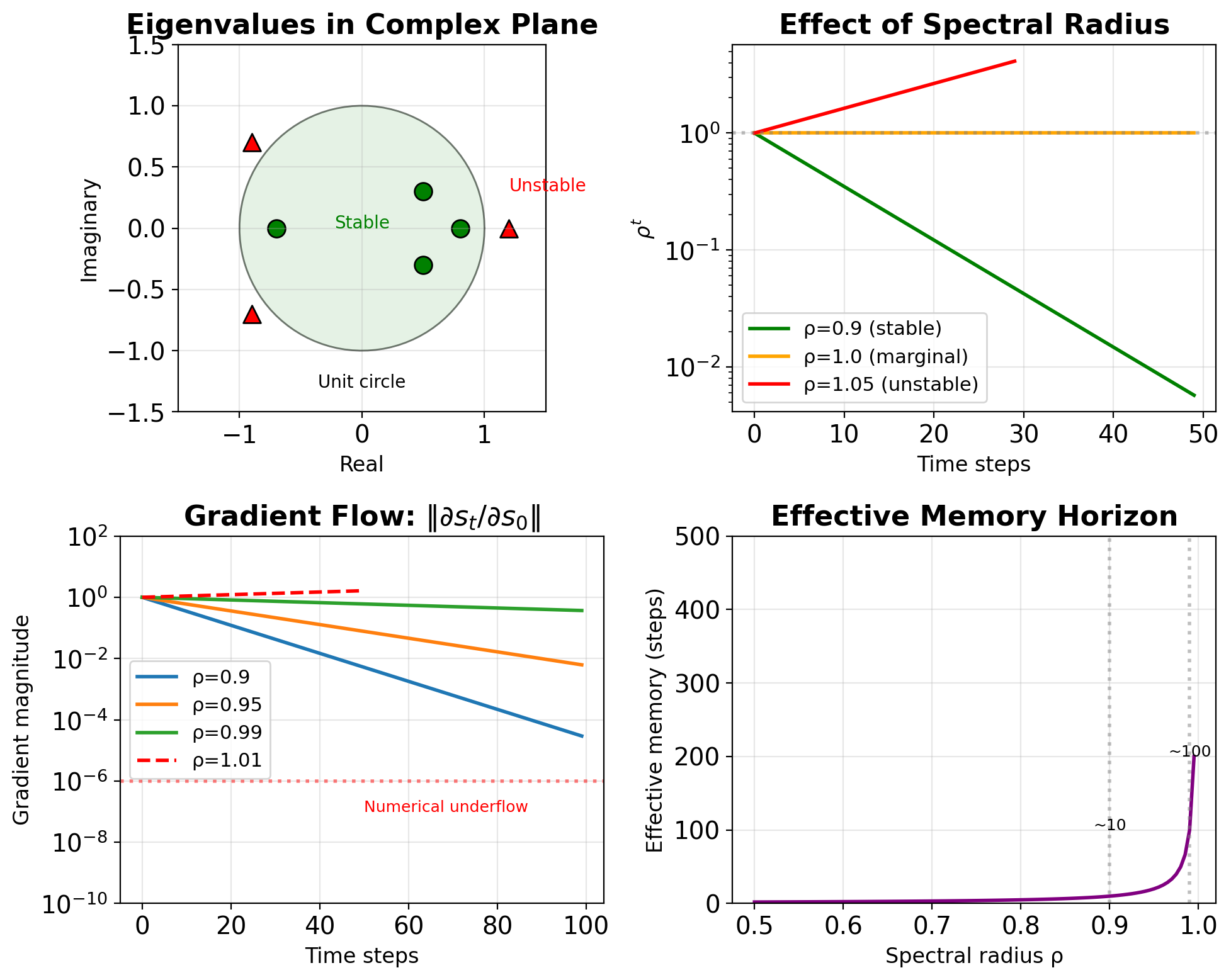

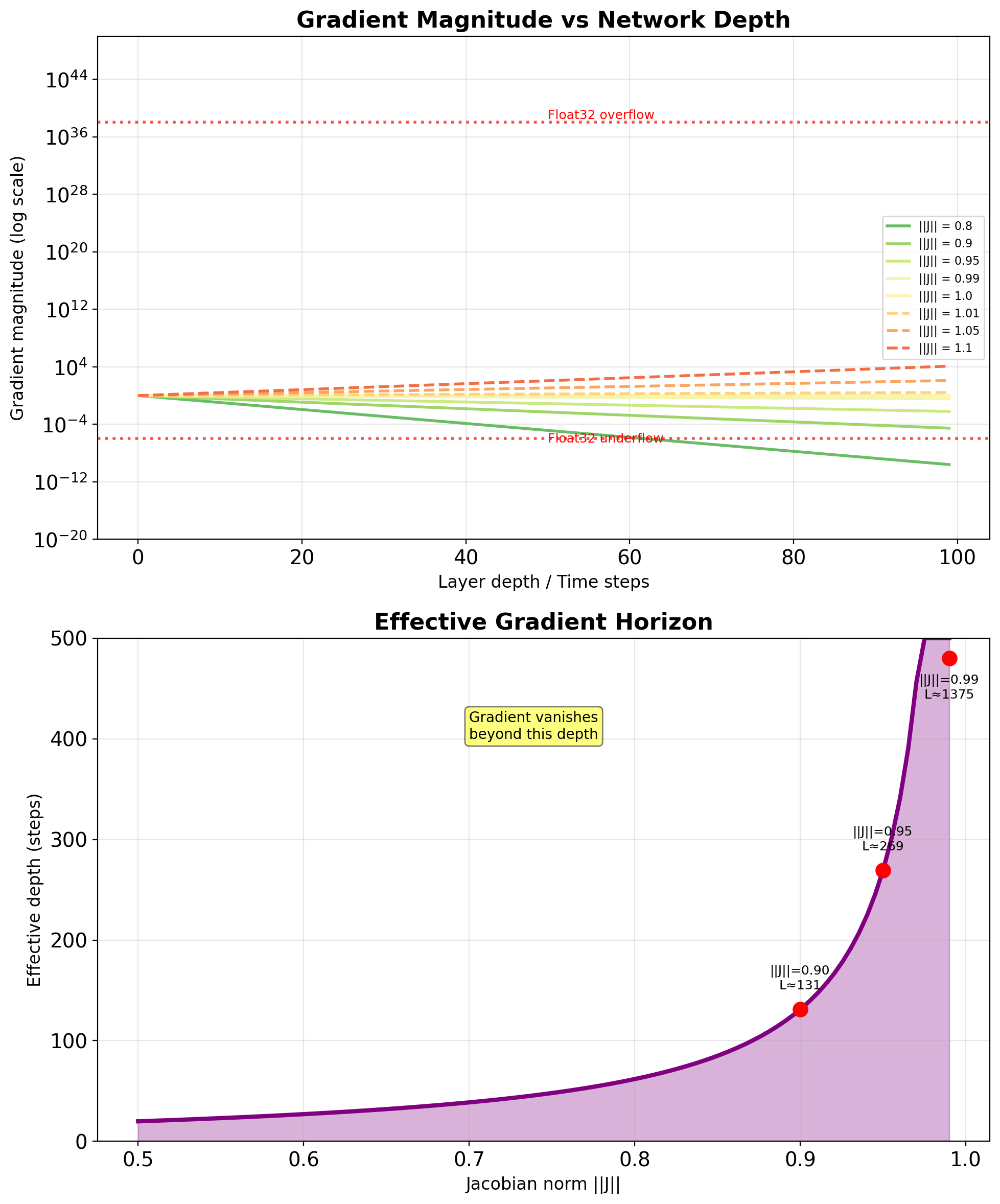

Spectral Radius Determines System Fate

\[\rho(\mathbf{A}) = \max_i |\lambda_i|\]

Stability conditions:

| Spectral Radius | Behavior | Gradient Flow |

|---|---|---|

| \(\rho < 1\) | Stable, converges to 0 | Vanishing |

| \(\rho = 1\) | Marginal stability | Preserved |

| \(\rho > 1\) | Unstable, diverges | Exploding |

For RNNs:

- Initialization: Often set \(\rho(\mathbf{W}_{ss}) \approx 1\)

- Training: Can drift from initialization

- Gradient flow: \(\|\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_0}\| \approx \rho^t\)

Time horizon: Effective memory \(\approx \frac{1}{1-\rho}\)

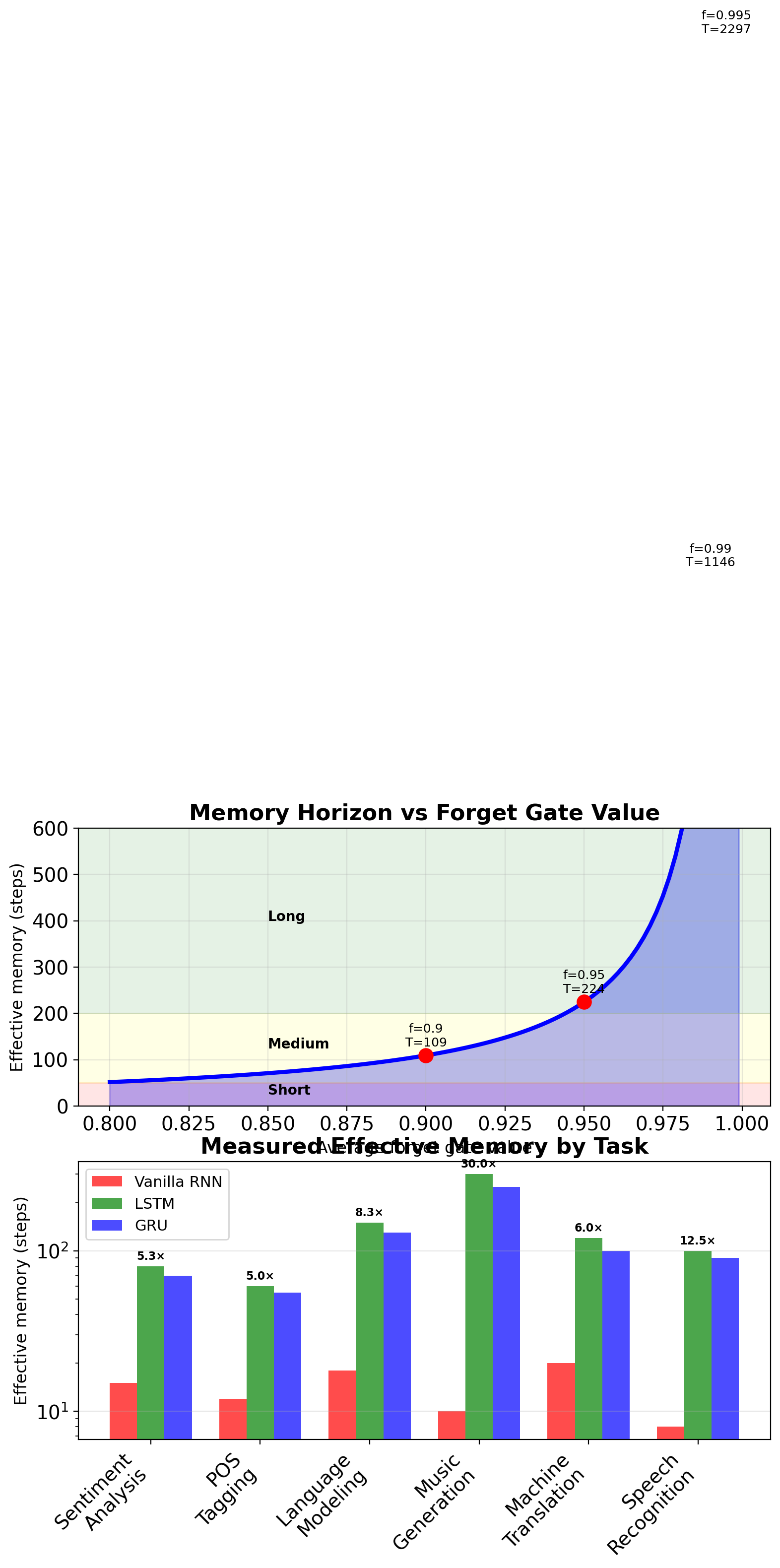

- \(\rho = 0.9\): ~10 steps

- \(\rho = 0.99\): ~100 steps

- \(\rho = 0.999\): ~1000 steps

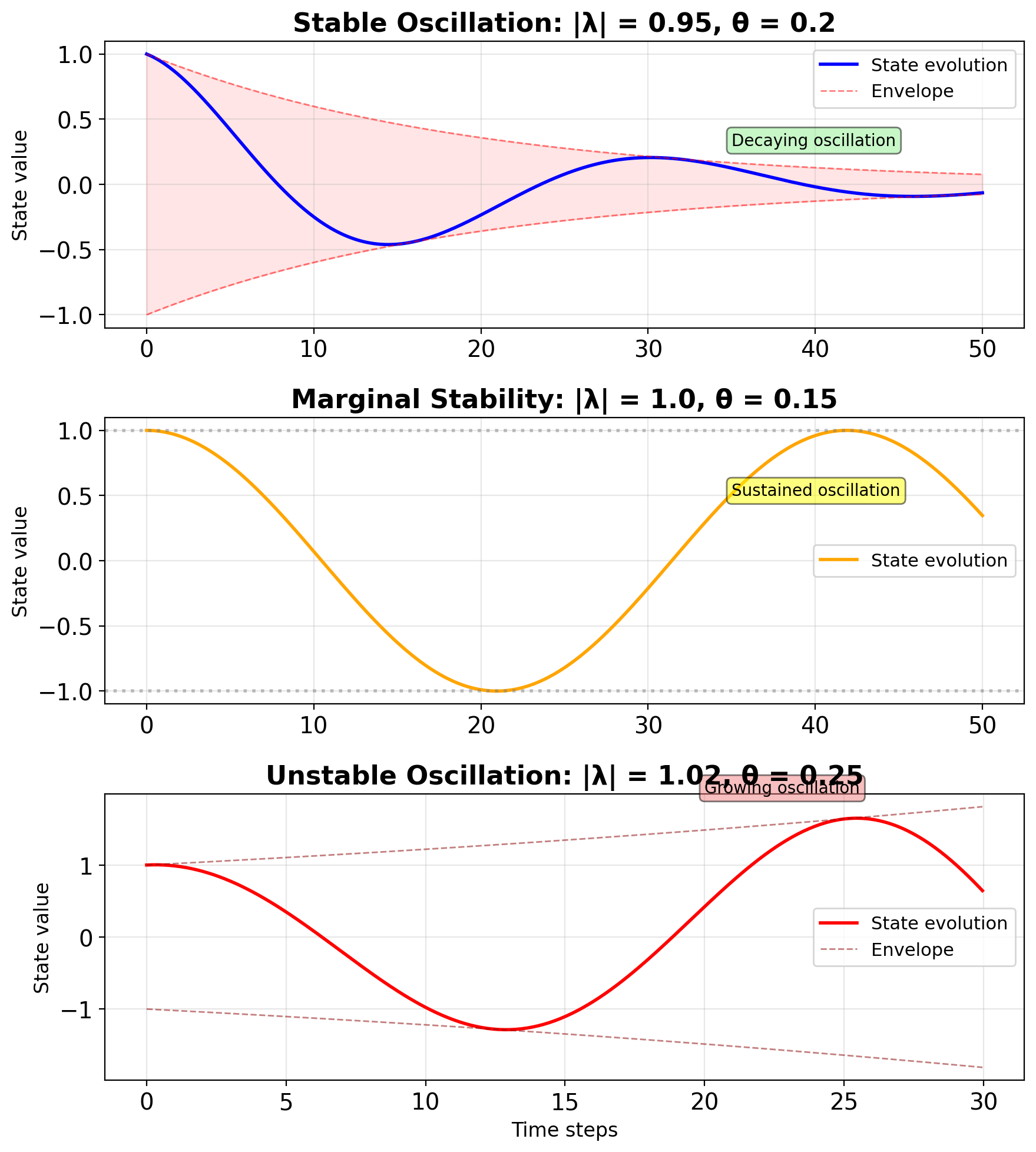

Complex Eigenvalues Create Oscillations

For real matrix \(\mathbf{A}\): \[\lambda = a \pm bi\]

Polar form: \[\lambda = r e^{\pm i\theta}\] where:

- \(r = |\lambda| = \sqrt{a^2 + b^2}\) : magnitude

- \(\theta = \arctan(b/a)\) : frequency

Evolution: \[\lambda^t = r^t e^{\pm it\theta} = r^t(\cos(t\theta) \pm i\sin(t\theta))\]

Results in:

- Exponential envelope: \(r^t\)

- Oscillation: \(\cos(t\theta)\), \(\sin(t\theta)\)

- Period: \(T = 2\pi/\theta\)

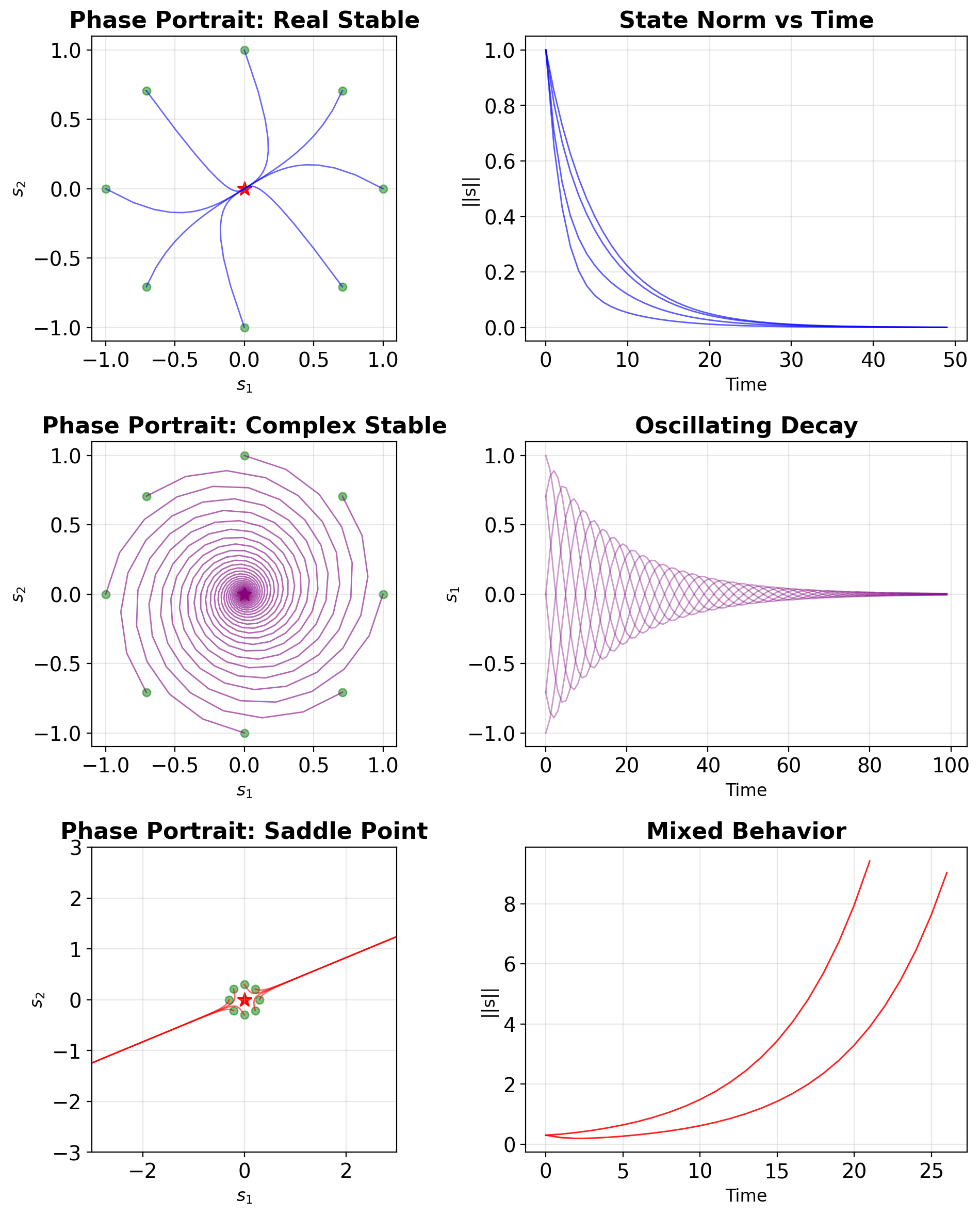

Three Characteristic 2×2 Cases

Case 1: Real, stable \[\mathbf{A}_1 = \begin{bmatrix} 0.8 & 0.1 \\ 0.1 & 0.7 \end{bmatrix}\] \(\lambda = \{0.9, 0.6\}\) → Monotonic decay

Case 2: Complex, stable \[\mathbf{A}_2 = \begin{bmatrix} 0.9 & 0.3 \\ -0.3 & 0.9 \end{bmatrix}\] \(\lambda = 0.9 \pm 0.3i\) → Decaying spiral

Case 3: Real, mixed \[\mathbf{A}_3 = \begin{bmatrix} 1.1 & 0.2 \\ 0.2 & 0.7 \end{bmatrix}\] \(\lambda = \{1.2, 0.6\}\) → Saddle point

Each case has distinct implications for gradient flow in RNNs

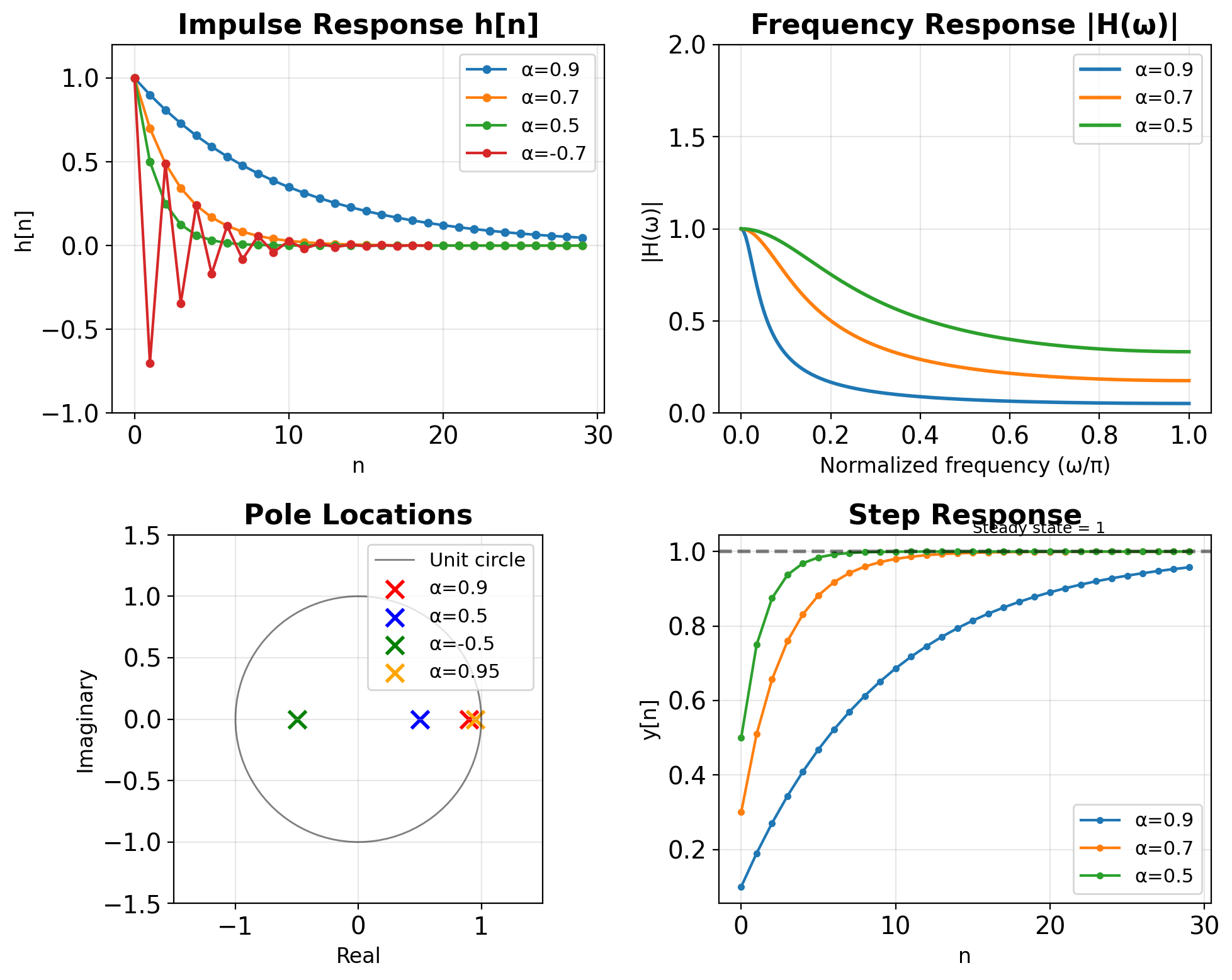

First-Order AR Filter Review

First-order AR filter: \[y[n] = \alpha y[n-1] + \beta x[n]\]

Transfer function: \[H(z) = \frac{\beta}{1 - \alpha z^{-1}}\]

Pole location determines behavior:

- \(|\alpha| < 1\) : Stable (pole inside unit circle)

- \(0 < \alpha < 1\) : Low-pass characteristic

- \(-1 < \alpha < 0\) : Oscillatory response

- \(|\alpha| \geq 1\) : Unstable

DC gain (steady-state response): \[H(1) = \frac{\beta}{1 - \alpha}\]

For unit DC gain: \(\beta = 1 - \alpha\)

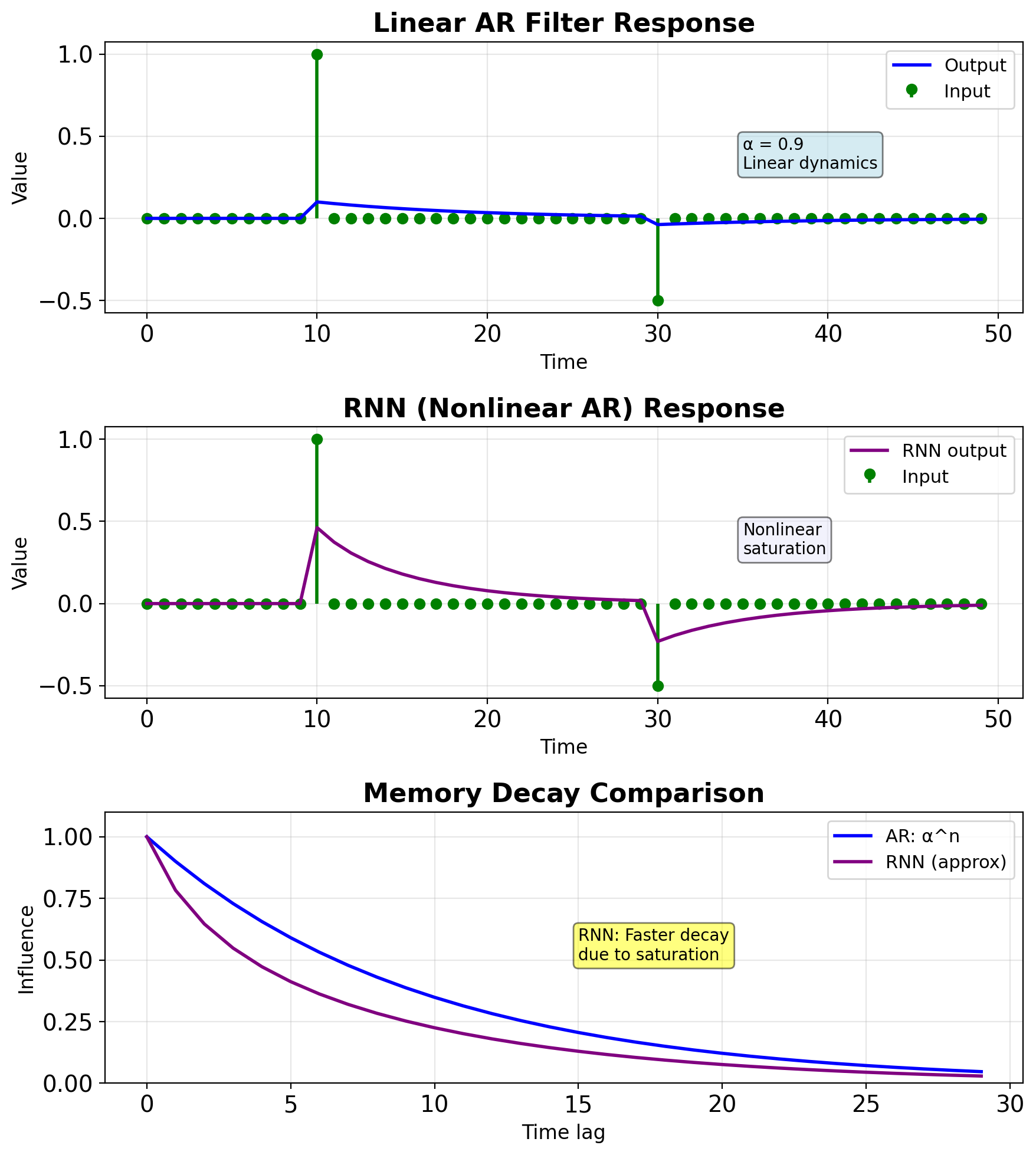

RNN as Nonlinear AR Filter

Linear AR (vector form): \[\mathbf{s}_t = \mathbf{A}\mathbf{s}_{t-1} + \mathbf{B}\mathbf{x}_t\]

Vanilla RNN: \[\mathbf{s}_t = \tanh(\mathbf{W}_{ss}\mathbf{s}_{t-1} + \mathbf{W}_{xs}\mathbf{x}_t + \mathbf{b})\]

Generalizations:

- Nonlinear activation (tanh) vs linear

- Learned coefficients vs designed

- High-dimensional state vs scalar/low-dim

- Data-adaptive vs fixed

Memory mechanism:

- AR filter: Exponential decay \(\alpha^n\)

- RNN: Complex, input-dependent decay

- Both: State provides implicit memory

Stability:

- AR: \(|\alpha| < 1\)

- RNN: \(\rho(\mathbf{W}_{ss}) \cdot |\tanh'| < 1\)

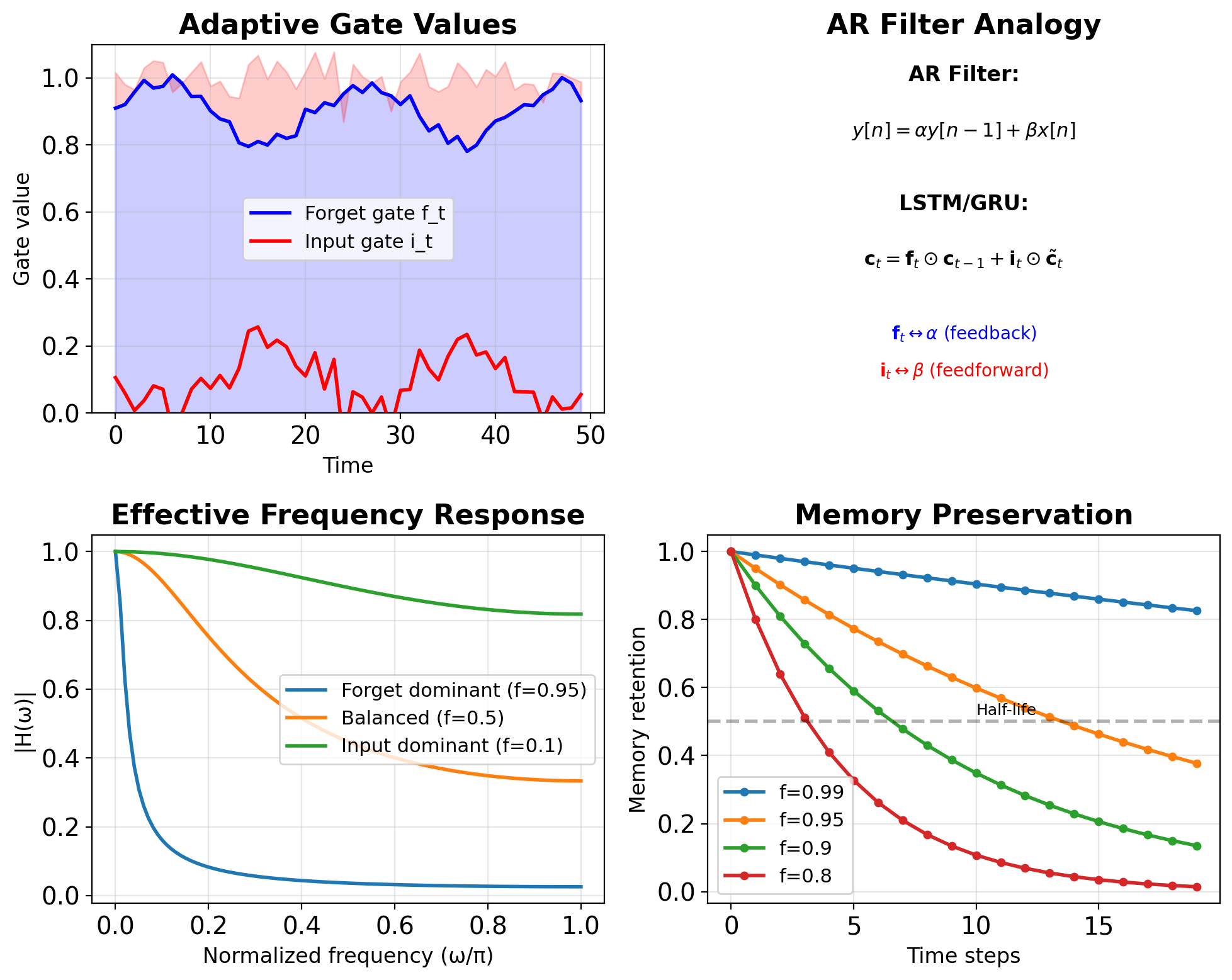

Filter Perspective on Gates

Forget gate (feedback coefficient): \[\mathbf{f}_t = \sigma(\mathbf{W}_f[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_f)\]

- Analogous to \(\alpha\) in AR filter

- Controls memory retention

- Learned per timestep and dimension

Input gate (feedforward coefficient): \[\mathbf{i}_t = \sigma(\mathbf{W}_i[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_i)\]

- Analogous to \(\beta\) in AR filter

- Controls new information integration

Coupled gates for unit DC gain:

- GRU: \(\mathbf{z}_t\) and \((1-\mathbf{z}_t)\)

- Ensures \(\mathbf{f}_t + \mathbf{i}_t \approx 1\)

- Preserves signal magnitude

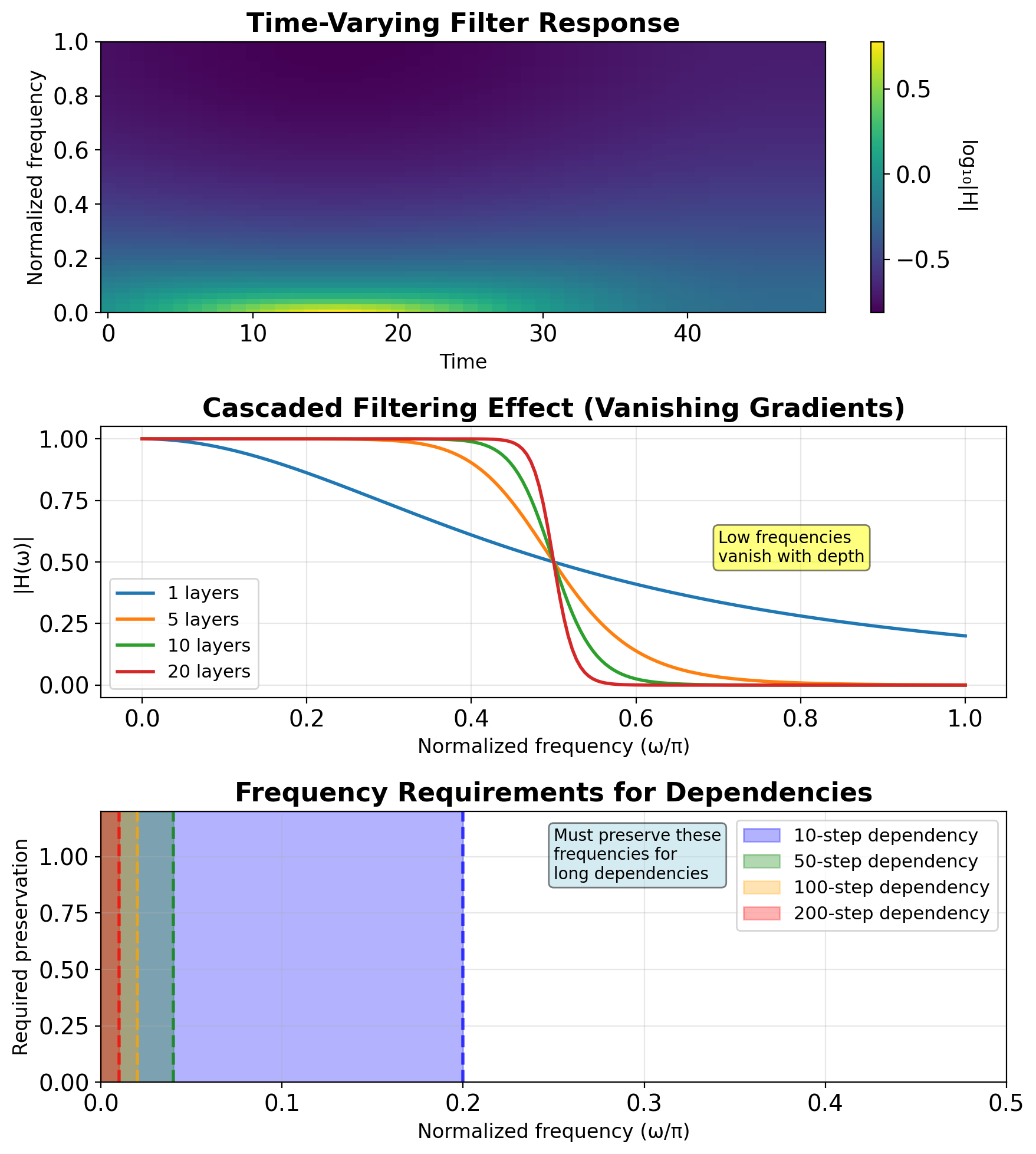

Frequency Domain Intuition

Linear system frequency response:

- Fixed for all inputs

- Determined by poles/zeros

- Time-invariant filtering

RNN frequency response:

- Input-dependent through gates

- Time-varying filter characteristics

- Adaptive bandwidth

Long-term dependencies = Low frequencies

- Need to preserve DC and low freq

- Forget gate ≈ 1 preserves low freq

Vanishing gradients = High-pass filtering

- Each tanh acts as low-pass

- Cascaded effect removes low freq

Gates modulate bandwidth

- High forget gate: Wider bandwidth

- Low forget gate: Narrow, input-focused

IIR vs FIR Analogy

CNNs = FIR Filters

- Finite receptive field (kernel size)

- No feedback connections

- Always stable

- Explicit memory (stored activations)

- Parallel computation possible

RNNs = IIR Filters

- Infinite receptive field (in principle)

- Feedback through hidden state

- Can be unstable

- Implicit memory (compressed state)

- Sequential computation required

Comparison:

- Memory: FIR needs O(K), IIR needs O(1)

- Computation: FIR is O(K) parallel, IIR is O(1) sequential

- Stability: FIR guaranteed, IIR conditional

- Design: FIR straightforward, IIR complex

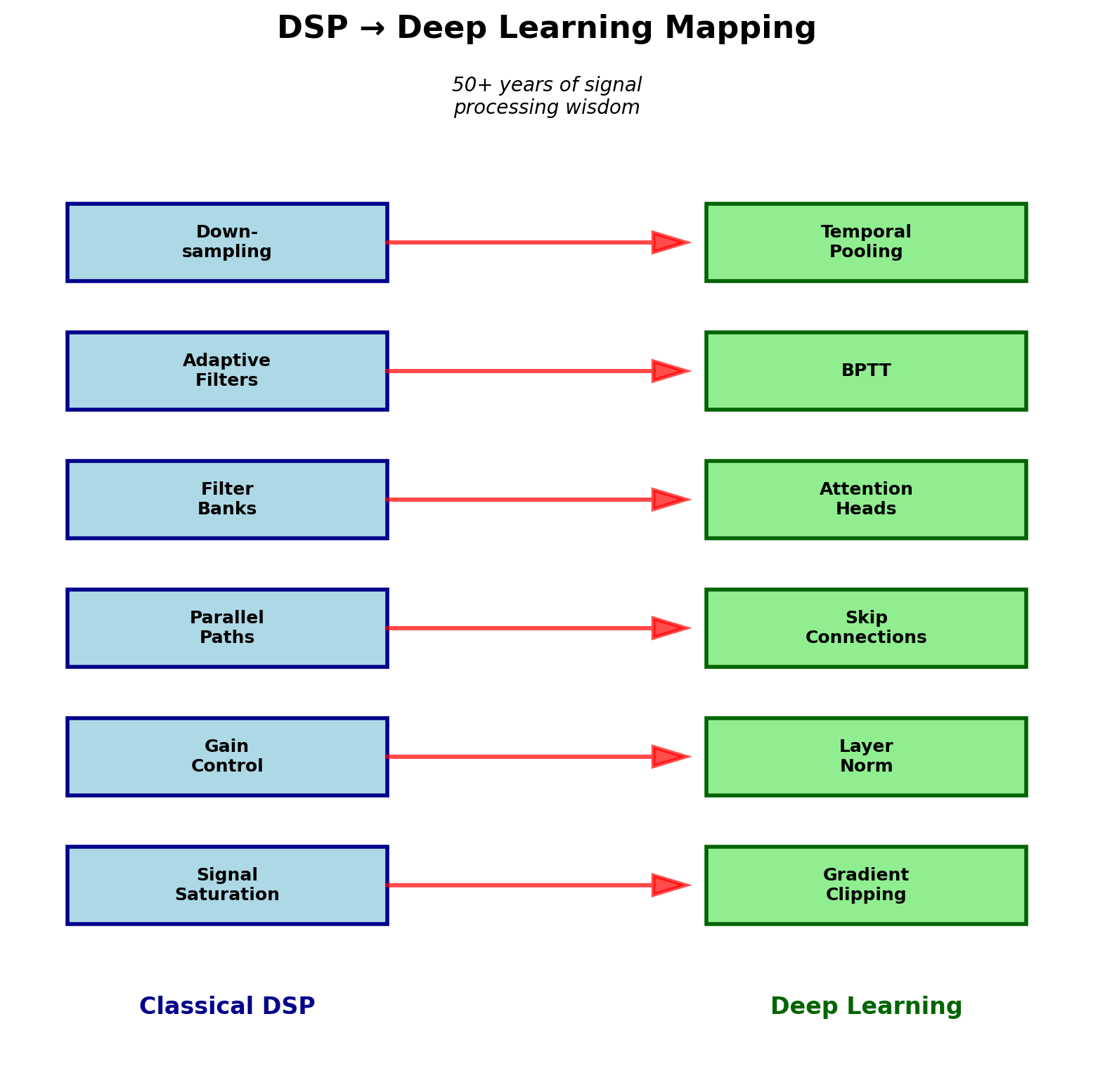

Signal Processing Insights

| DSP Concept | RNN Equivalent | Purpose |

|---|---|---|

| Saturation/Limiting | Gradient clipping | Prevent overflow |

| AGC | Layer norm | Stabilize magnitude |

| Parallel paths | Skip connections | Preserve signal |

| Filter bank | Attention heads | Frequency decomposition |

| Adaptive filtering | BPTT | Learn coefficients |

| Decimation | Pooling in time | Reduce rate |

| Interpolation | Upsampling | Increase rate |

- Many “tricks” in deep learning have DSP origins

- Signal flow analysis helps debug networks

- Frequency domain reveals failure modes

- Classical solutions inspire new architectures

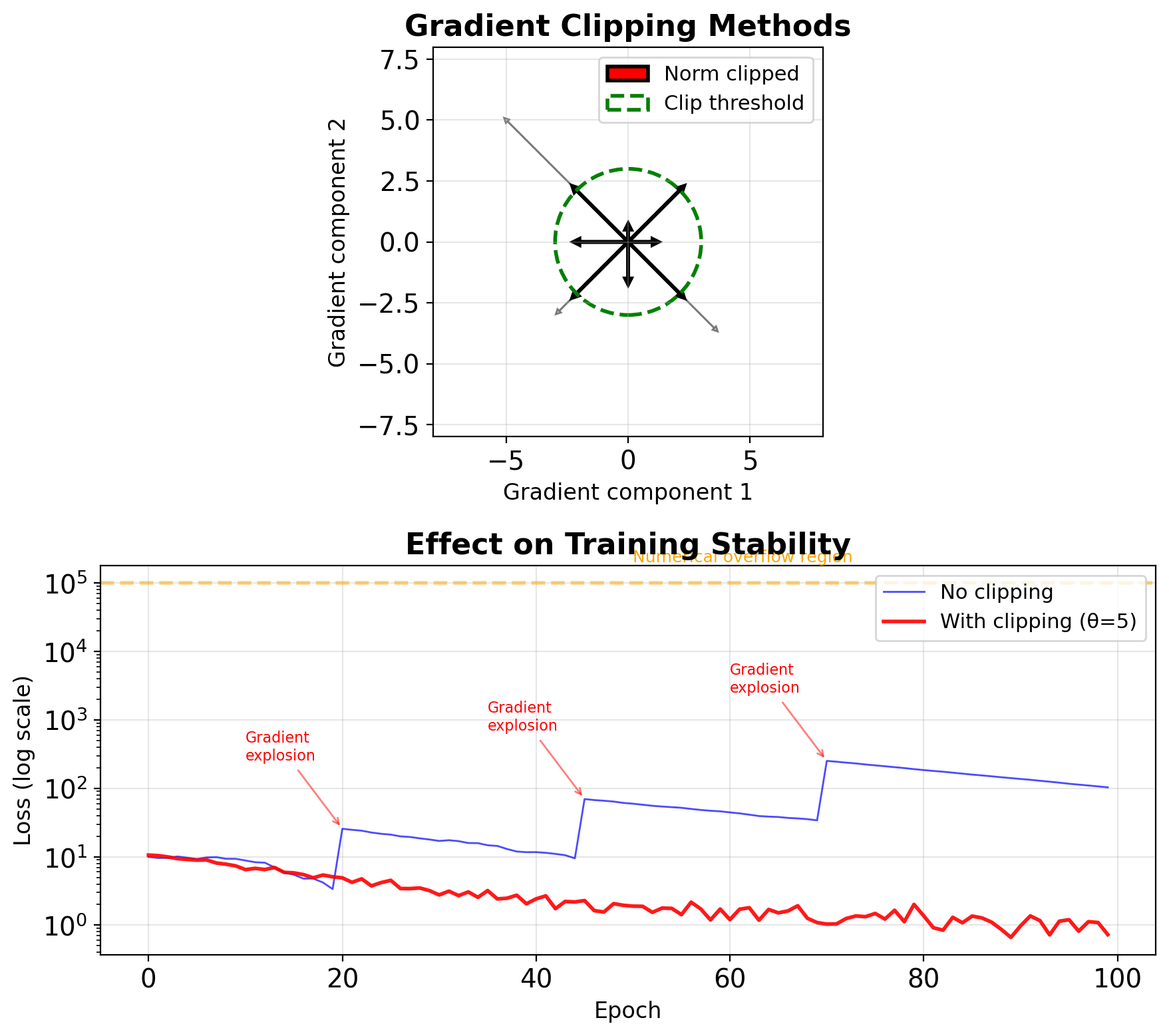

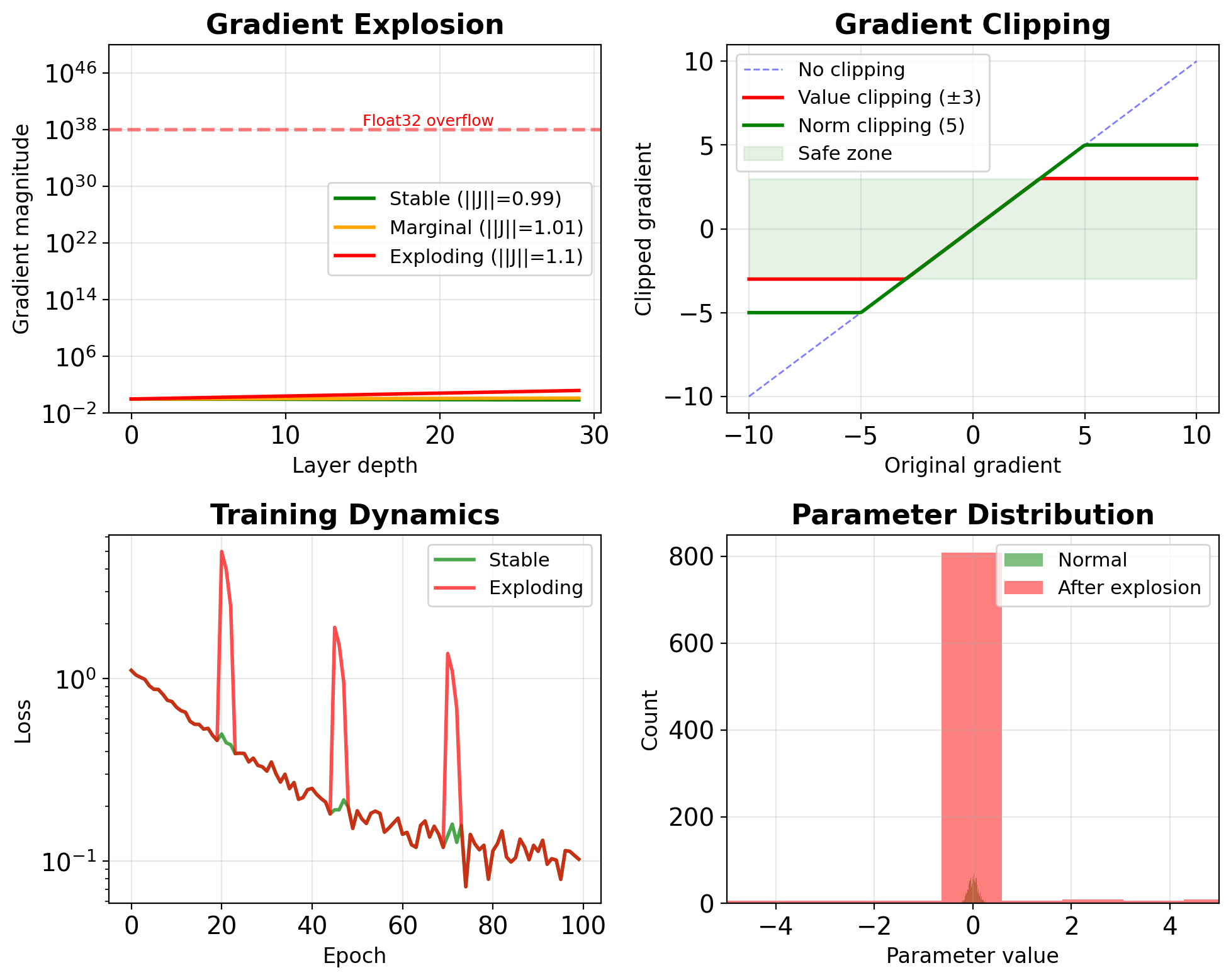

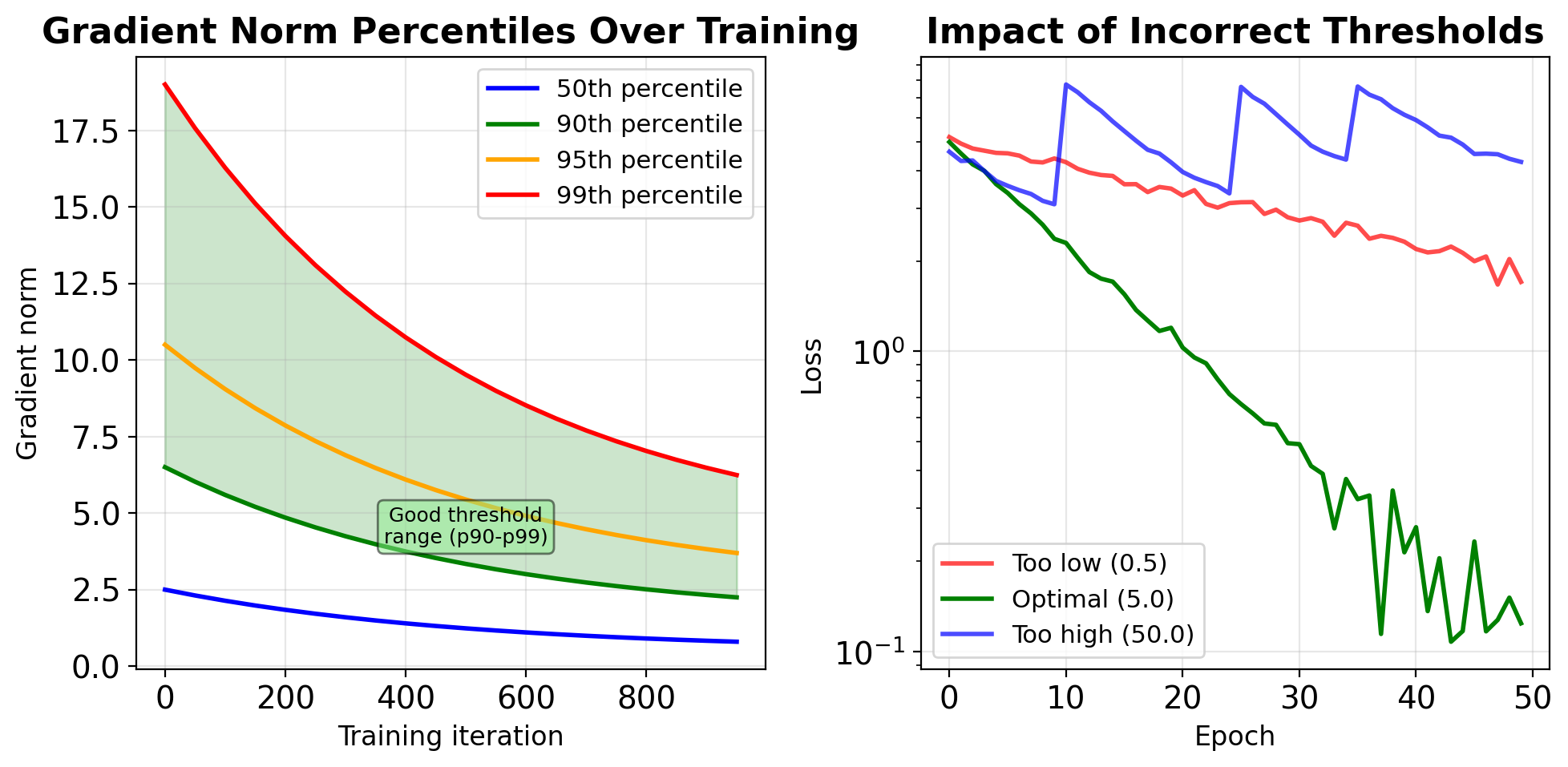

Gradient Clipping as Signal Saturation

Classical DSP: Saturation prevents amplifier damage

- Input exceeds linear range

- Output clipped to safe levels

- Prevents system damage

Deep Learning: Gradient clipping prevents training instability \[\mathbf{g}_{\text{clipped}} = \begin{cases} \mathbf{g} & \text{if } \|\mathbf{g}\| \leq \theta \\ \theta \cdot \frac{\mathbf{g}}{\|\mathbf{g}\|} & \text{if } \|\mathbf{g}\| > \theta \end{cases}\]

Two clipping strategies:

Value clipping: Element-wise bounds

- Simple but can change gradient direction

Norm clipping: Preserves direction

- Standard for RNNs (typical θ = 5-10)

Empirical thresholds (LSTM on PTB):

- No clipping: 38% training failures

- θ = 5: Stable, perplexity 78.4

- θ = 1: Over-constrained, perplexity 92.1

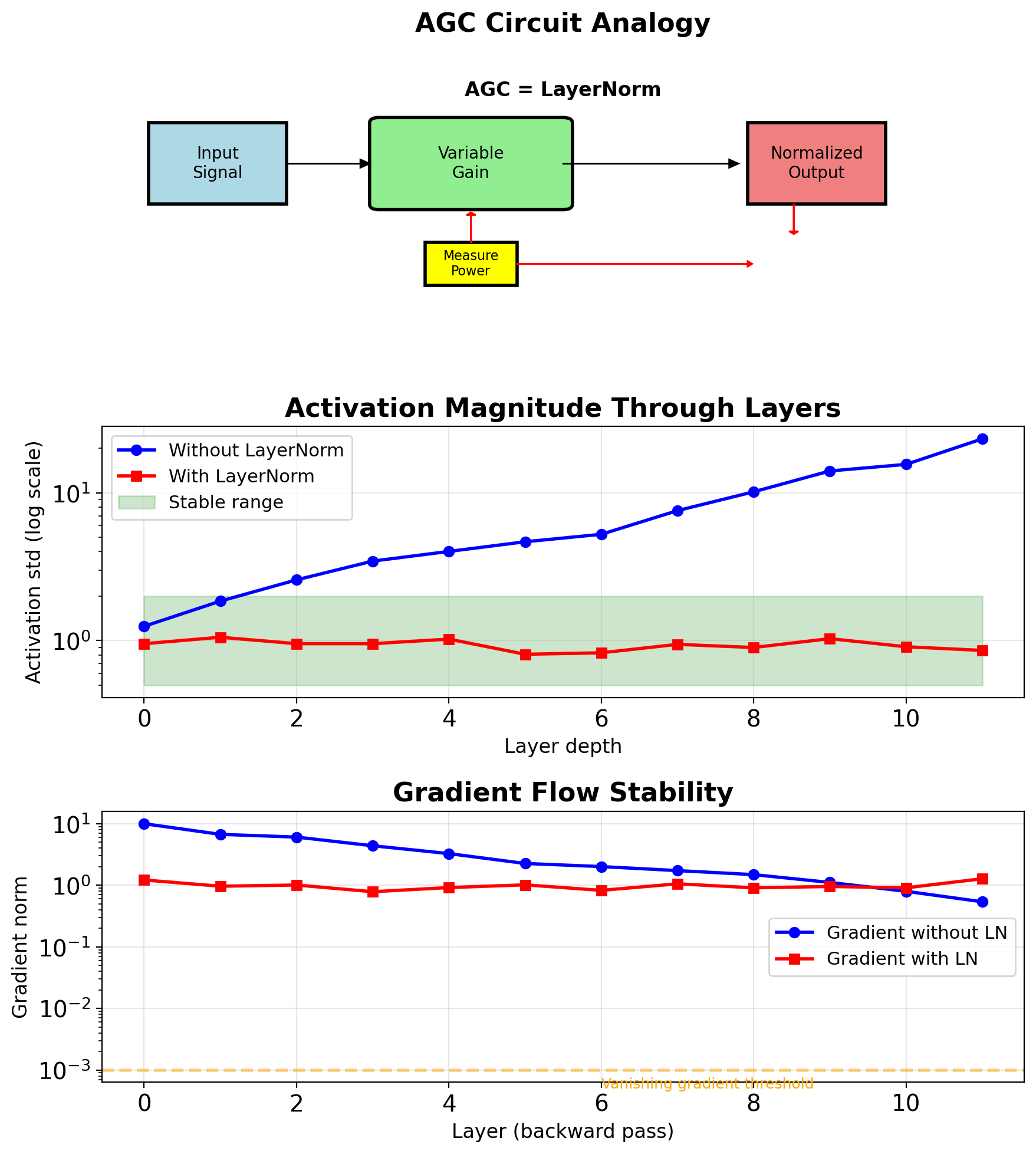

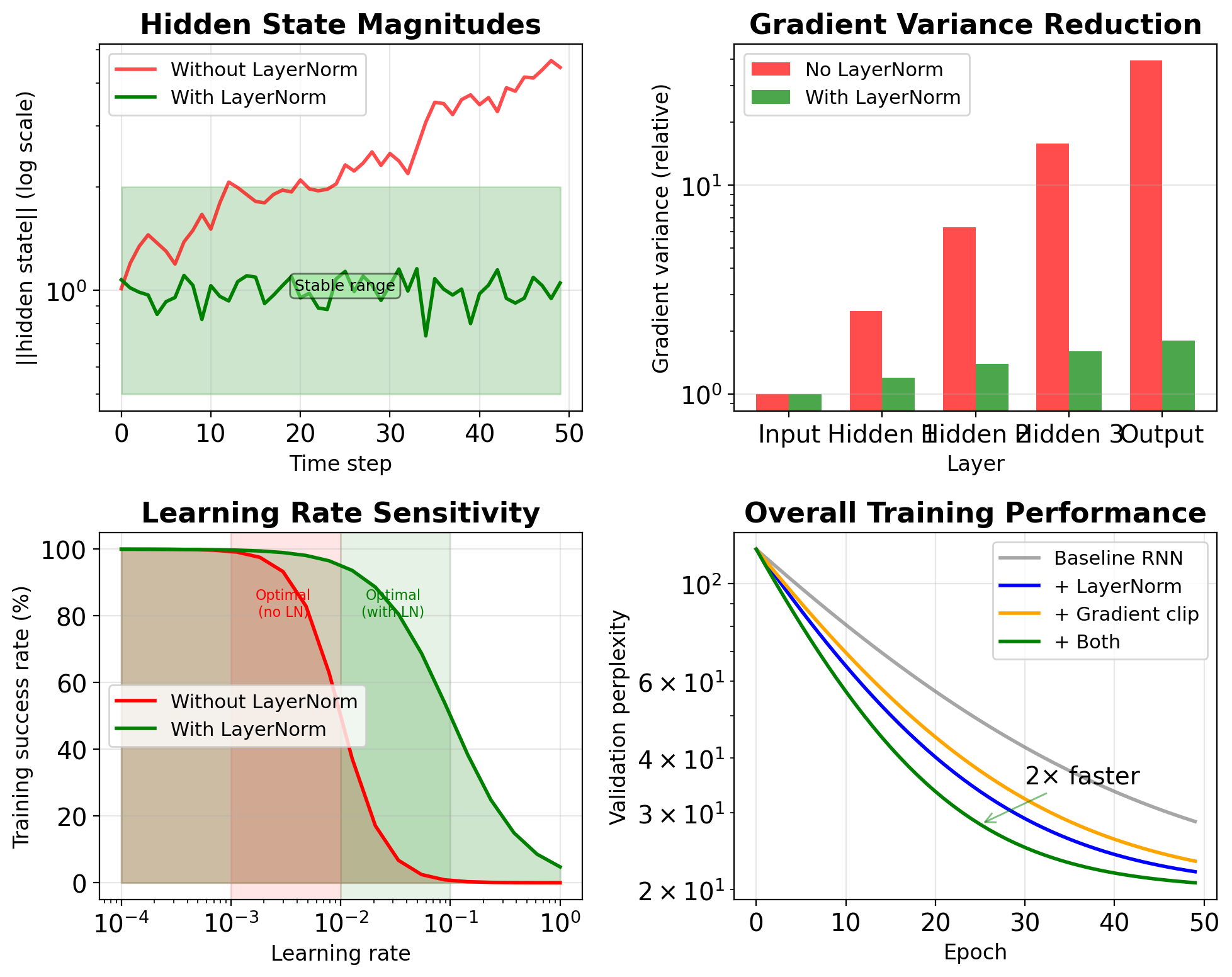

Layer Normalization as Automatic Gain Control

Classical AGC (Automatic Gain Control):

- Maintains constant output level

- Adapts to input signal variations

- Used in radio/audio systems

Layer Normalization: \[\text{LN}(\mathbf{x}) = \gamma \frac{\mathbf{x} - \mu}{\sqrt{\sigma^2 + \epsilon}} + \beta\]

where across feature dimension:

\(\mu = \frac{1}{D}\sum_{i=1}^D x_i\)

\(\sigma^2 = \frac{1}{D}\sum_{i=1}^D (x_i - \mu)^2\)

AGC: Normalizes signal power

LayerNorm: Normalizes activation magnitude

Both prevent saturation in subsequent stages

Measured stabilization (Transformer, 12 layers):

- Without LN: Activation std varies 10²-10⁴

- With LN: Activation std stays in [0.8, 1.2]

- Training speedup: 5.3×

Skip Connections as Parallel Signal Paths

DSP: Parallel signal paths

- Preserve different frequency bands

- Combine for complete signal

- Prevent information loss

ResNet skip connections: \[\mathbf{y} = F(\mathbf{x}, \mathbf{W}) + \mathbf{x}\]

Gradient flow analysis: \[\frac{\partial L}{\partial \mathbf{x}} = \frac{\partial L}{\partial \mathbf{y}} \left(1 + \frac{\partial F}{\partial \mathbf{x}}\right)\]

Even if \(\frac{\partial F}{\partial \mathbf{x}} \approx 0\) (vanishing), gradient still flows through identity path

Frequency domain interpretation:

- Main path: Learned transformation with possible attenuation

- Skip path: All-pass filter (preserves all frequencies)

- Combined: Guaranteed minimum gain of 1

Impact on trainability (ImageNet):

- 34-layer plain net: 28.5% error

- 34-layer ResNet: 25.0% error

- 152-layer ResNet: 23.0% error (deeper is better!)

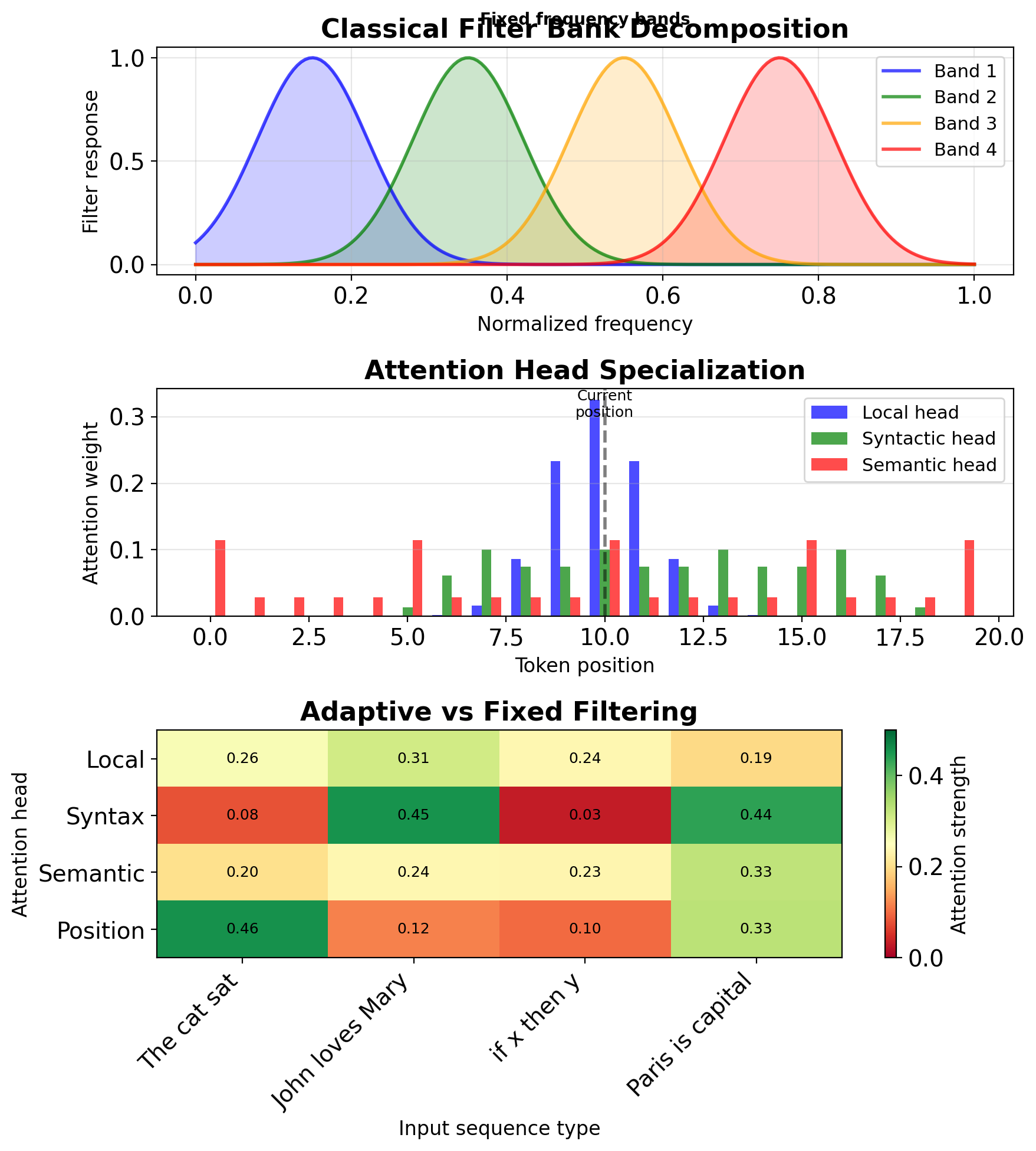

Attention as Adaptive Filter Banks

Filter banks in DSP vs attention mechanisms

Classical filter bank:

- Decompose signal into frequency bands

- Process each band separately

- Reconstruct from components

Multi-head attention as filter bank: \[\text{Attention}(Q,K,V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V\]

Each head captures different “frequency” of relationships:

- Head 1: Local dependencies (high freq)

- Head 2: Syntactic patterns (mid freq)

- Head 3: Semantic relations (low freq)

Adaptive aspect:

- Classical: Fixed frequency bands

- Attention: Data-dependent filtering

- Learned decomposition per input

Measured specialization (BERT-base):

- Heads 1-3: Position encoding (avg distance 2.1 tokens)

- Heads 4-6: Syntax (avg distance 5.7 tokens)

- Heads 7-12: Semantics (avg distance 11.3 tokens)

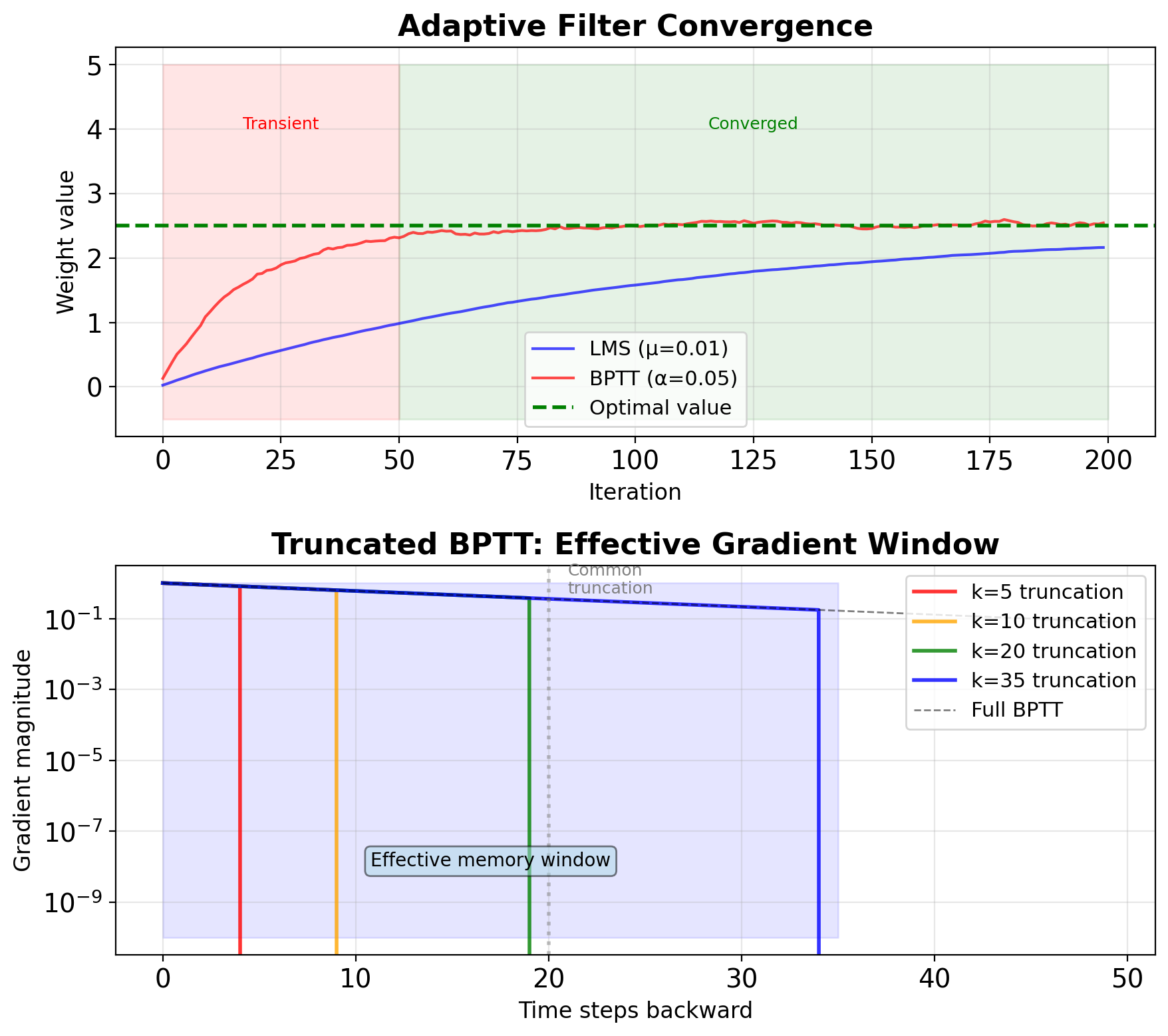

Adaptive Filtering and BPTT

Adaptive filters learn optimal coefficients

Classical adaptive filtering (LMS, RLS):

- Online coefficient updates

- Minimize prediction error

- Converge to optimal filter

BPTT as adaptive filter training: \[\mathbf{W}_{t+1} = \mathbf{W}_t - \alpha \nabla_{\mathbf{W}} L\]

- LMS: \(w_{n+1} = w_n + \mu e_n x_n\)

- BPTT: \(W_{ij}^{(t+1)} = W_{ij}^{(t)} - \alpha \frac{\partial L}{\partial W_{ij}}\)

Both minimize prediction error through iterative updates

Convergence characteristics:

- LMS: \(O(1/\mu)\) iterations, local minima

- BPTT: Depends on sequence length T

- Both sensitive to learning rate/step size

Truncated BPTT = Finite impulse response:

- Limit gradient flow to k steps

- Trade accuracy for stability

- Standard k = 20-35 for RNNs

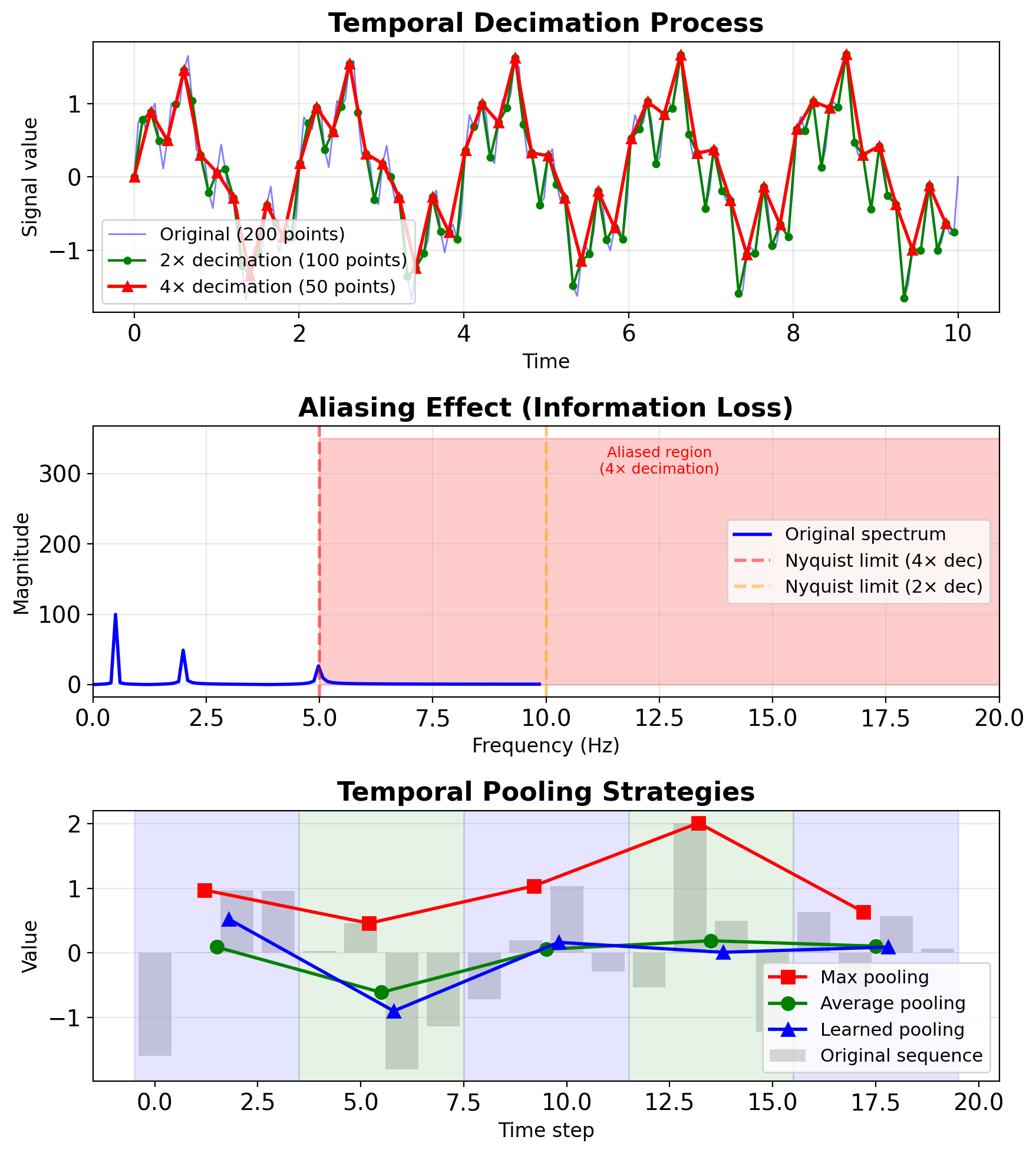

Temporal Decimation and Pooling

Decimation in DSP vs temporal pooling

DSP Decimation:

- Reduce sampling rate by factor M

- Must filter first (prevent aliasing)

- Nyquist criterion: \(f_s > 2f_{max}\)

Temporal pooling in RNNs:

- Reduce sequence length

- Aggregate temporal information

- Lower computational cost

Common strategies:

- Max pooling over time: Keep strongest signal

- Average pooling: Smooth temporal variations

- Strided convolutions: Learned decimation

Computational savings:

- Original: \(O(T \times d^2)\) per layer

- After 2× decimation: \(O(T/2 \times d^2)\)

- Memory reduction: 50%

Performance-memory (speech recognition):

- No pooling: 95.2% accuracy, 8GB memory

- 2× pooling: 94.8% accuracy, 4GB memory

- 4× pooling: 92.1% accuracy, 2GB memory

Chain Rule in Deep Networks

For loss \(L\) and parameters \(\mathbf{W}^{(1)}\) in layer 1: \[\frac{\partial L}{\partial \mathbf{W}^{(1)}} = \frac{\partial L}{\partial \mathbf{a}^{(L)}} \cdot \frac{\partial \mathbf{a}^{(L)}}{\partial \mathbf{a}^{(L-1)}} \cdots \frac{\partial \mathbf{a}^{(2)}}{\partial \mathbf{W}^{(1)}}\]

Product of Jacobians: \[\frac{\partial L}{\partial \mathbf{W}^{(1)}} = \boldsymbol{\delta}^{(L)} \prod_{l=2}^{L} \mathbf{J}^{(l)}\]

where \(\mathbf{J}^{(l)} = \frac{\partial \mathbf{a}^{(l)}}{\partial \mathbf{a}^{(l-1)}}\)

Gradient magnitude depends on product of L-1 matrices

For RNNs: Replace depth with time

- \(L\) layers → \(T\) timesteps

- Spatial depth → Temporal depth

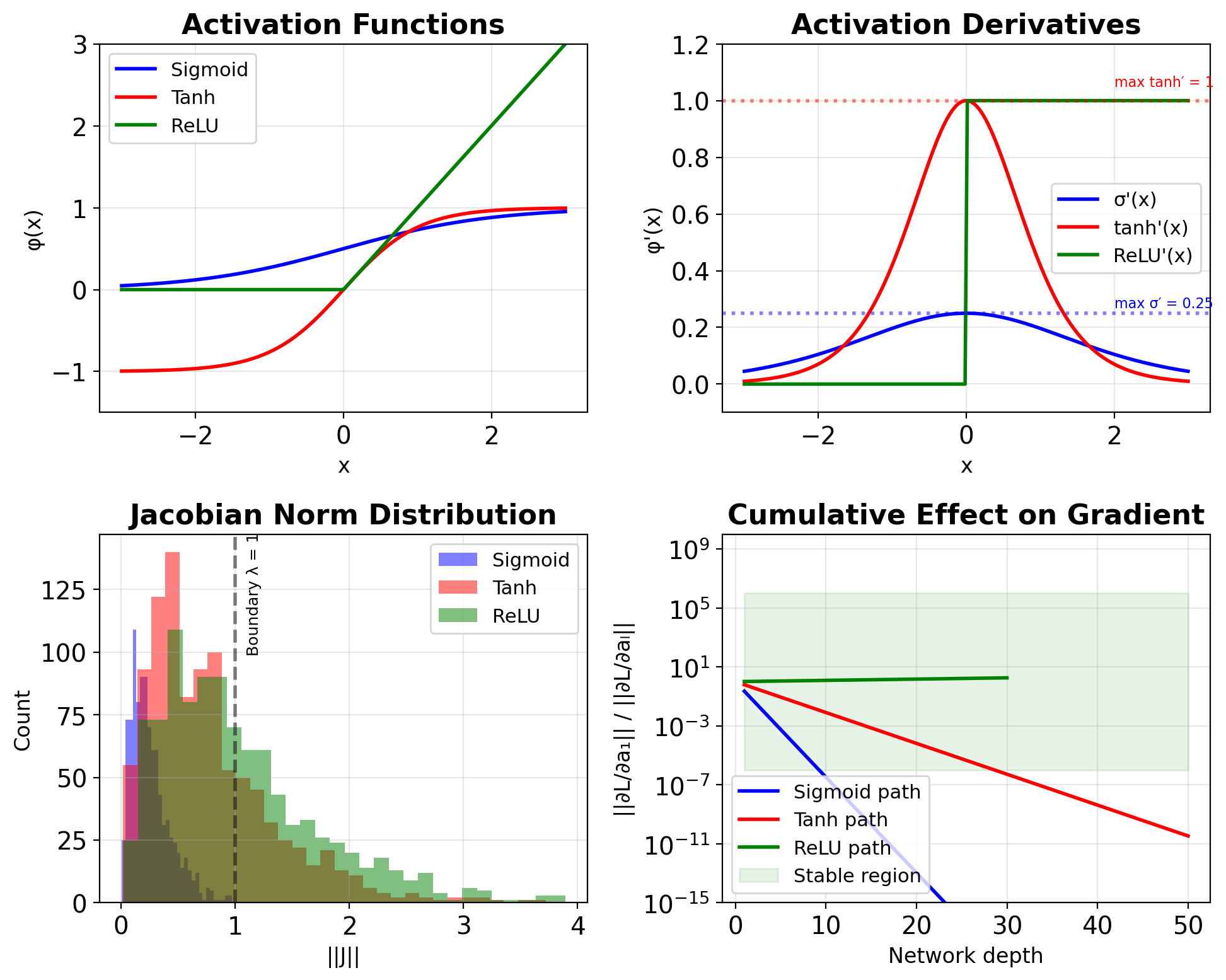

Jacobian Matrix Norms

For layer with activation \(\phi\): \[\mathbf{a}^{(l)} = \phi(\mathbf{W}^{(l)}\mathbf{a}^{(l-1)} + \mathbf{b}^{(l)})\]

Jacobian: \[\mathbf{J}^{(l)} = \frac{\partial \mathbf{a}^{(l)}}{\partial \mathbf{a}^{(l-1)}} = \text{diag}(\phi'(\mathbf{z}^{(l)})) \cdot \mathbf{W}^{(l)}\]

Norm bounds: \[\|\mathbf{J}^{(l)}\| \leq \|\phi'\|_{\infty} \cdot \|\mathbf{W}^{(l)}\|\]

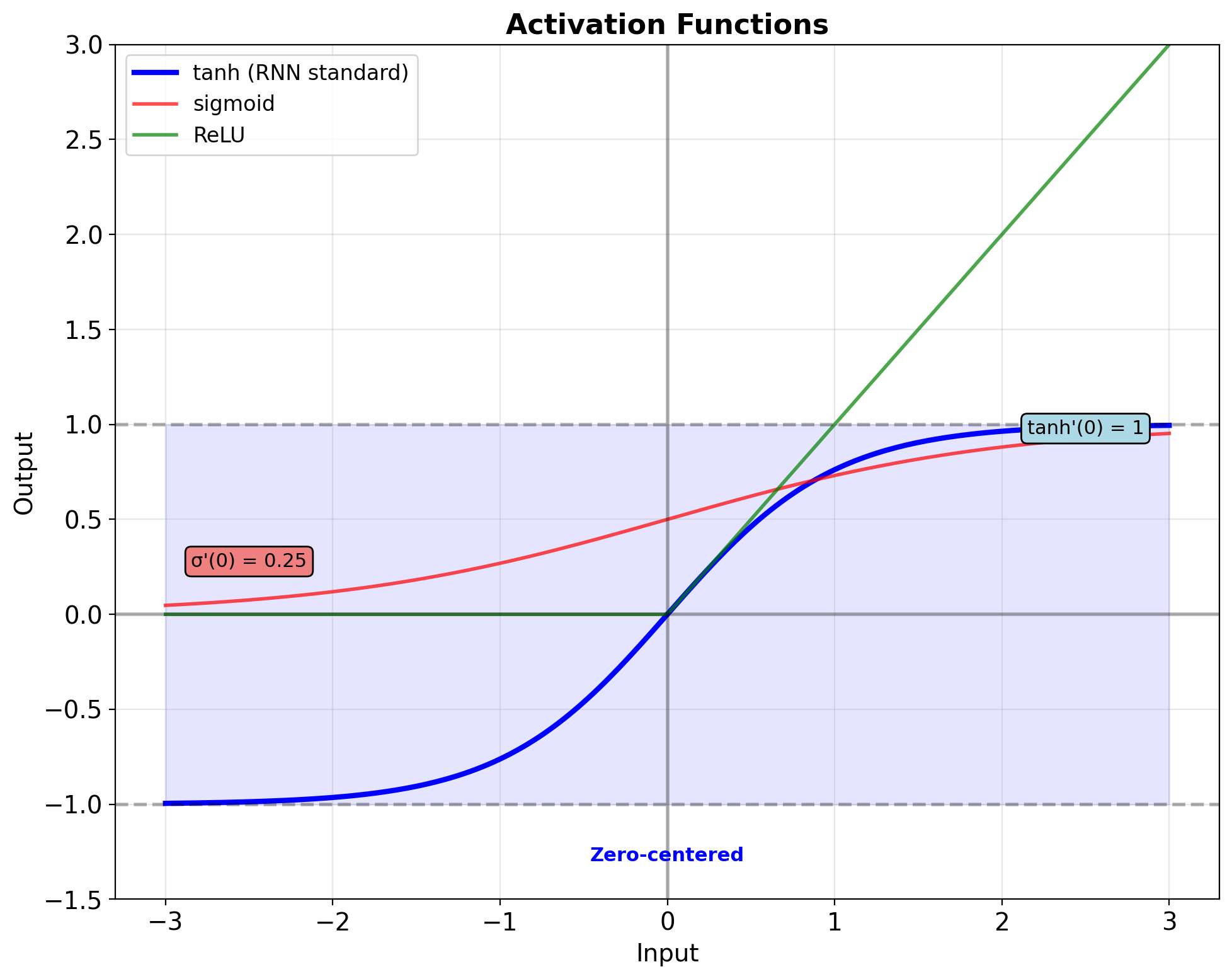

Activation derivatives:

- Sigmoid: \(\sigma'(x) \leq 0.25\)

- Tanh: \(\tanh'(x) \leq 1\)

- ReLU: \(\text{ReLU}'(x) \in \{0, 1\}\)

- Leaky ReLU: \(\text{LReLU}'(x) \in \{\alpha, 1\}\)

Gradient Magnitude Evolution

Starting from loss gradient \(\nabla_{\mathbf{a}^{(L)}} L\): \[\|\nabla_{\mathbf{W}^{(1)}} L\| \approx \|\nabla_{\mathbf{a}^{(L)}} L\| \cdot \prod_{l=2}^{L} \|\mathbf{J}^{(l)}\|\]

Controlling factors:

- Activation function choice

- Weight initialization scale

- Network depth/sequence length

Exponential behavior:

- If \(\prod \|\mathbf{J}\| < 1\): Vanishing (\(e^{-\alpha L}\))

- If \(\prod \|\mathbf{J}\| = 1\): Preserved

- If \(\prod \|\mathbf{J}\| > 1\): Exploding (\(e^{\alpha L}\))

Effective gradient horizon: \[L_{\text{eff}} \approx \frac{\log(\epsilon)}{\log(\|\mathbf{J}\|)}\]

where \(\epsilon\) is numerical precision threshold

The Vanishing Gradient Problem

Sigmoid saturation: \[\sigma'(x) = \sigma(x)(1-\sigma(x)) \leq 0.25\]

Tanh saturation: \[\tanh'(x) = 1 - \tanh^2(x) \leq 1\]

For deep networks:

- 10 layers: \((0.25)^{10} \approx 10^{-6}\)

- 20 layers: \((0.25)^{20} \approx 10^{-12}\)

- 50 layers: \((0.25)^{50} \approx 10^{-30}\)

Consequences:

- Early layers stop learning

- Long-term dependencies lost

- Network depth limited

- Training becomes ineffective

This motivated: ResNets, LSTMs, normalization techniques

The Exploding Gradient Problem

Causes:

- \(\|\mathbf{W}\| > 1\) with ReLU activation

- Positive feedback loops in RNNs

- Poor initialization

Mathematical analysis: \[\|\nabla\| \approx \|\mathbf{W}\|^L \cdot \|\phi'\|^L\]

For ReLU with \(\|\mathbf{W}\| = 1.5\):

- 10 layers: \((1.5)^{10} \approx 58\)

- 20 layers: \((1.5)^{20} \approx 3,300\)

- 30 layers: \((1.5)^{30} \approx 192,000\)

Consequences:

- Numerical overflow (NaN/Inf)

- Unstable training

- Loss divergence

- Parameter corruption

Solutions: Gradient clipping, careful initialization, normalization

Implications for Temporal Networks

RNN unrolled for T steps = T-layer deep network

Gradient path length: O(T) \[\frac{\partial L}{\partial \mathbf{s}_0} = \prod_{t=1}^T \frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}}\]

Challenges scale with sequence length:

- T=10: Manageable

- T=100: Severe vanishing/exploding

- T=1000: Gradient ≈ 0.9^1000 ≈ 10^-46 (underflows)

Effective memory horizon: \[T_{\text{eff}} \approx \frac{1}{1 - \|\mathbf{J}_{\text{RNN}}\|}\]

This motivates:

- Gating mechanisms (LSTM/GRU)

- Attention mechanisms (skip connections in time)

- Truncated BPTT (limit gradient flow)

- Gradient clipping (prevent explosion)

Vanilla RNN Architecture

RNN Recurrence Equation

Hidden state update: \[\mathbf{s}_t = \tanh(\mathbf{W}_{ss}\mathbf{s}_{t-1} + \mathbf{W}_{xs}\mathbf{x}_t + \mathbf{b}_s)\]

Output computation: \[\mathbf{y}_t = \mathbf{W}_{sy}\mathbf{s}_t + \mathbf{b}_y\]

- Shared parameters across all timesteps

- Nonlinear state transition via tanh

- Linear output projection

Tanh activation properties:

- Bounded output: [-1, 1] prevents explosion

- Zero-centered: Better gradient flow than sigmoid

- Maximum gradient at origin: tanh’(0) = 1

- Historically standard for RNN stability

Tensor Dimensions Throughout the RNN Network

| Component | Dimension | Description |

|---|---|---|

| \(\mathbf{x}_t\) | \(\mathbb{R}^D\) | Input at time \(t\) |

| \(\mathbf{s}_t\) | \(\mathbb{R}^H\) | Hidden state |

| \(\mathbf{y}_t\) | \(\mathbb{R}^K\) | Output at time \(t\) |

| \(\mathbf{W}_{xs}\) | \(\mathbb{R}^{H \times D}\) | Input-to-hidden |

| \(\mathbf{W}_{ss}\) | \(\mathbb{R}^{H \times H}\) | Hidden-to-hidden |

| \(\mathbf{W}_{sy}\) | \(\mathbb{R}^{K \times H}\) | Hidden-to-output |

| \(\mathbf{b}_s\) | \(\mathbb{R}^H\) | Hidden bias |

| \(\mathbf{b}_y\) | \(\mathbb{R}^K\) | Output bias |

Total parameters: \[N_{\text{params}} = HD + H^2 + KH + H + K\]

Example configuration:

- \(D = 300\) (word embedding)

- \(H = 512\) (hidden size)

- \(K = 10000\) (vocabulary)

- Total: ~5.4M parameters

Parameter breakdown:

Input→Hidden (W_xs): 300 × 512 = 153K

Hidden→Hidden (W_ss): 512 × 512 = 262K

Hidden→Output (W_sy): 10000 × 512 = 5.12M

Biases: 10.5K

────────────────────────────────────────

Total: 5.54MOutput projection dominates (93%) when vocabulary is large, while hidden-to-hidden remains fixed regardless of vocabulary.

- Parameter sharing makes this tractable:

- Without sharing: 5.54M × T parameters

- With sharing: 5.54M total (constant)

Memory footprint (float32):

- Parameters: 5.54M × 4 bytes = 22MB

- Gradients (training): +22MB

- Optimizer state (Adam): +44MB

- Total training memory: ~88MB + activations

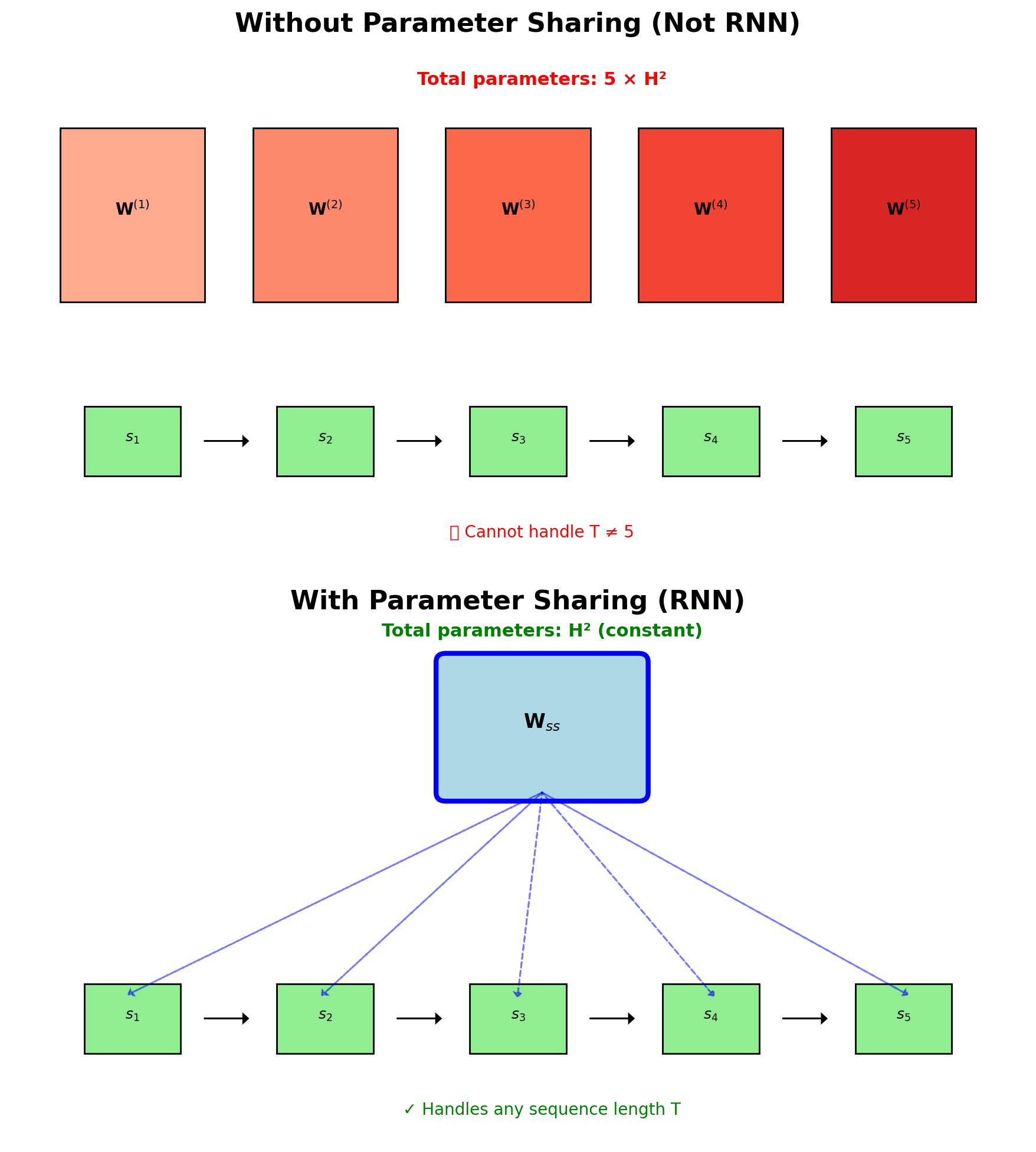

RNNs Share Parameters Across All Timesteps

Without sharing:

- Different weights per timestep: \(\mathbf{W}^{(t)}_{ss}\)

- Parameters grow with sequence: \(O(T \times H^2)\)

- Cannot handle variable length

- No generalization across positions

With sharing (RNN):

- Same weights all timesteps: \(\mathbf{W}_{ss}\)

- Fixed parameters: \(O(H^2)\)

- Handles any sequence length

- Captures position-invariant patterns

CNN shares weights across space; RNN shares across time

- Training: Gradients accumulated across time

- Inference: Can process infinite streams

- Memory: State carries information, not parameters

Unrolled Computation Graph

- Each timestep uses same RNN cell (shared parameters)

- Hidden state flows forward carrying temporal information

- Outputs generated independently at each timestep

- Graph depth = sequence length (implications for gradients)

RNNs Support Different Input-Output Configurations

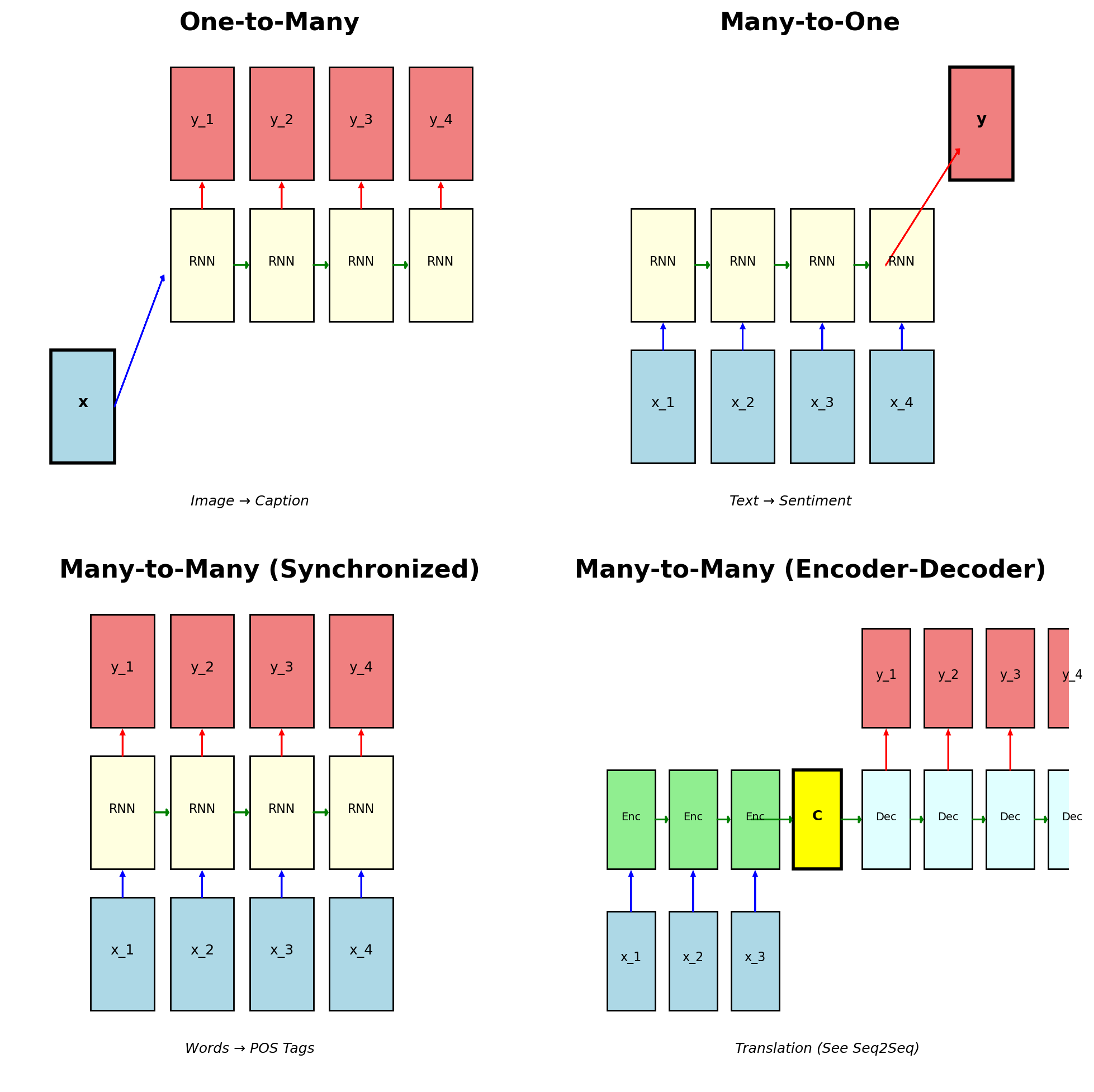

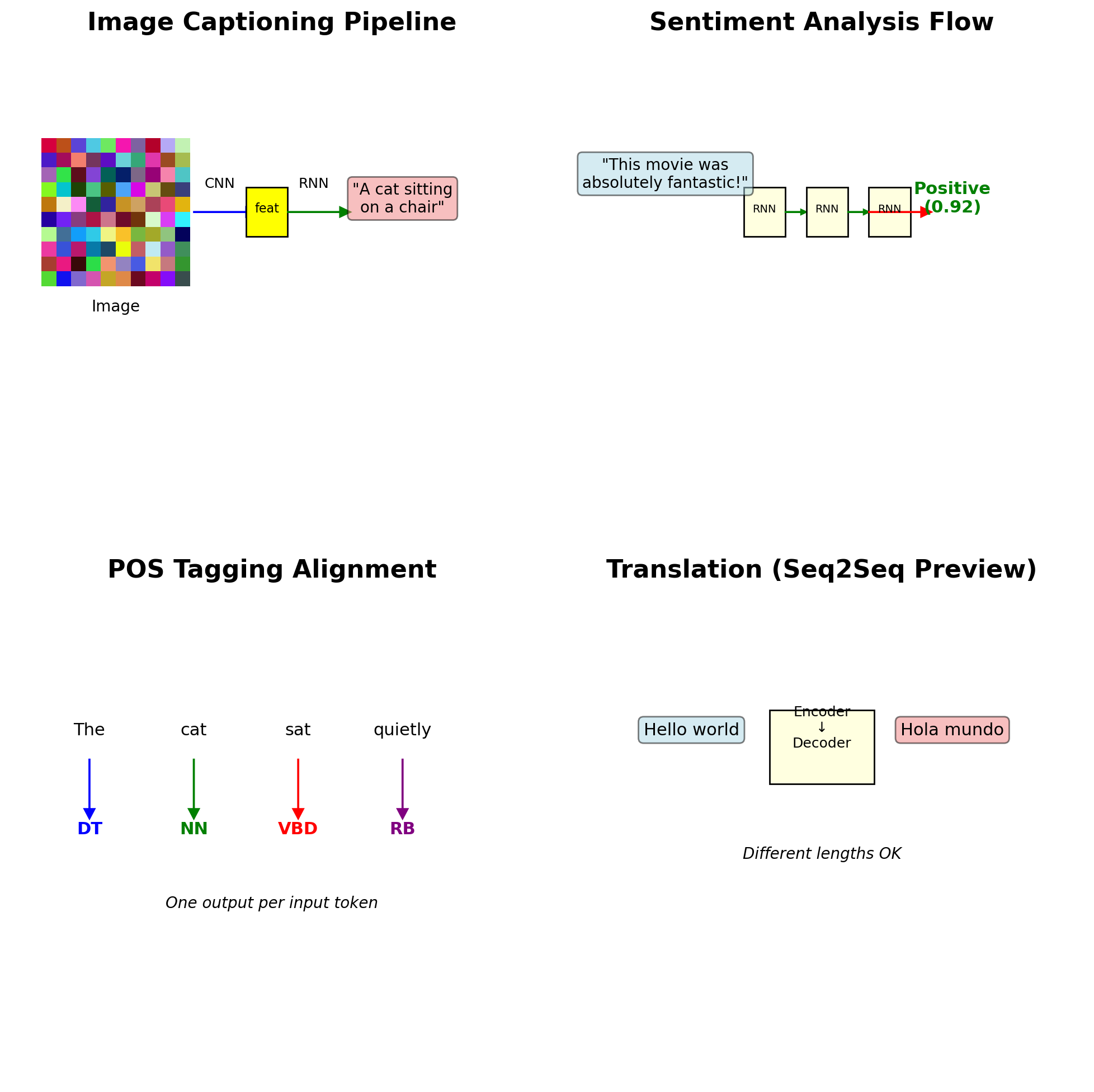

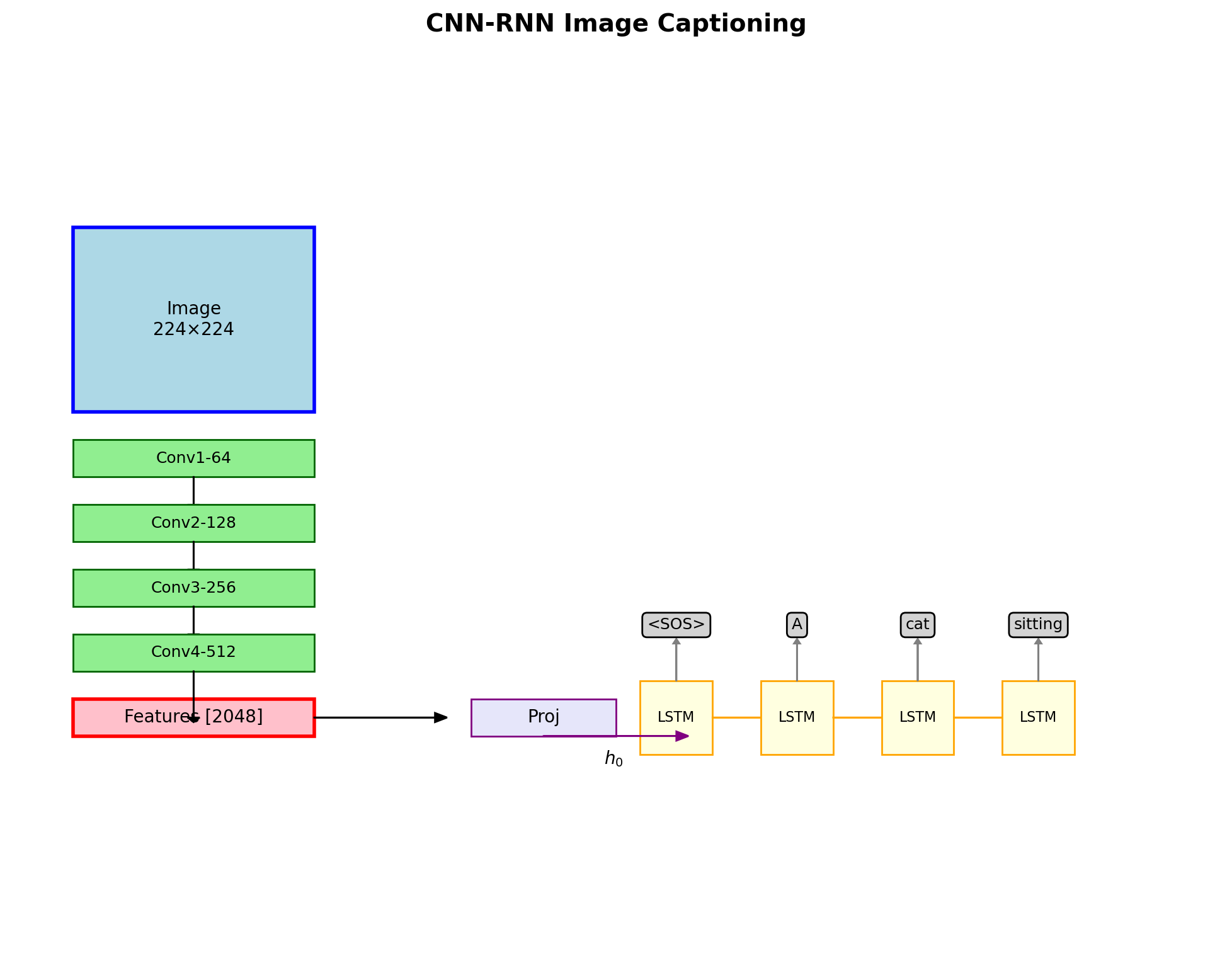

One-to-Many (e.g., image captioning)

- Single input → sequence output

- \(\mathbf{x} \rightarrow \{\mathbf{y}_1, \mathbf{y}_2, ..., \mathbf{y}_T\}\)

Many-to-One (e.g., sentiment analysis)

- Sequence input → single output

- \(\{\mathbf{x}_1, \mathbf{x}_2, ..., \mathbf{x}_T\} \rightarrow \mathbf{y}\)

Many-to-Many (Synchronized) (e.g., POS tagging)

- Aligned input-output sequences

- \(\{\mathbf{x}_t\} \rightarrow \{\mathbf{y}_t\}\), same length

Many-to-Many (Encoder-Decoder) (e.g., translation)

- Different length sequences

- \(\{\mathbf{x}_1, ..., \mathbf{x}_S\} \rightarrow \{\mathbf{y}_1, ..., \mathbf{y}_T\}\)

Encoder-decoder architecture covered in Seq2Seq section

Each RNN Mode Has Specific Real-World Applications

One-to-Many Applications:

- Image captioning: CNN features → word sequence

- Music generation: seed note → melody

- Video description: frame → narrative

Many-to-One Applications:

- Sentiment classification: review → positive/negative

- Spam detection: email → spam/ham

- Activity recognition: sensor sequence → activity

Many-to-Many (Synchronized):

- Part-of-speech tagging: word → POS tag

- Named entity recognition: token → entity type

- Speech recognition: audio frames → phonemes

Many-to-Many (Seq2Seq):

- Machine translation: source → target language

- Summarization: document → summary

- Dialog systems: query → response

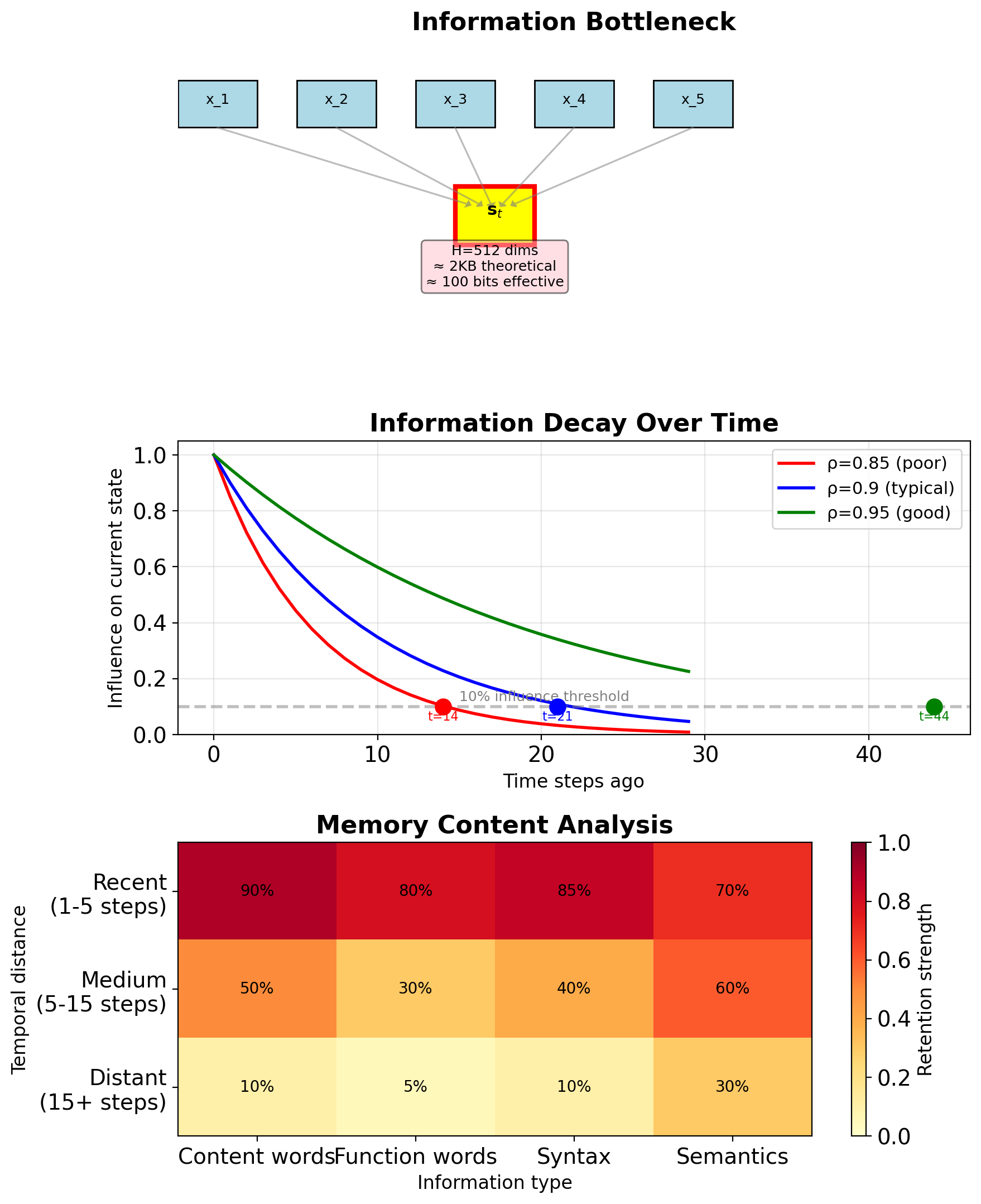

Hidden State Compresses Information Over Time

Theoretical capacity:

- Hidden state: \(\mathbf{s}_t \in \mathbb{R}^H\)

- Float32 precision: 32 bits per dimension

- Total: \(H \times 32\) bits

- Example (H=512): 16,384 bits ≈ 2KB

Practical capacity much less:

- Information bottleneck at each step

- Lossy compression through tanh

- Gradient vanishing limits optimization

- Effective capacity ≈ \(O(\log H)\) bits

Memory content:

- Recent inputs (last 10-20 steps)

- Salient patterns (high activation)

- Task-relevant features (learned)

Forgetting curve:

- Exponential decay: \(\text{influence}(t-k) \propto \rho^k\)

- \(\rho \approx 0.9-0.95\)

- After 20 steps: \(0.9^{20} \approx 0.12\) influence

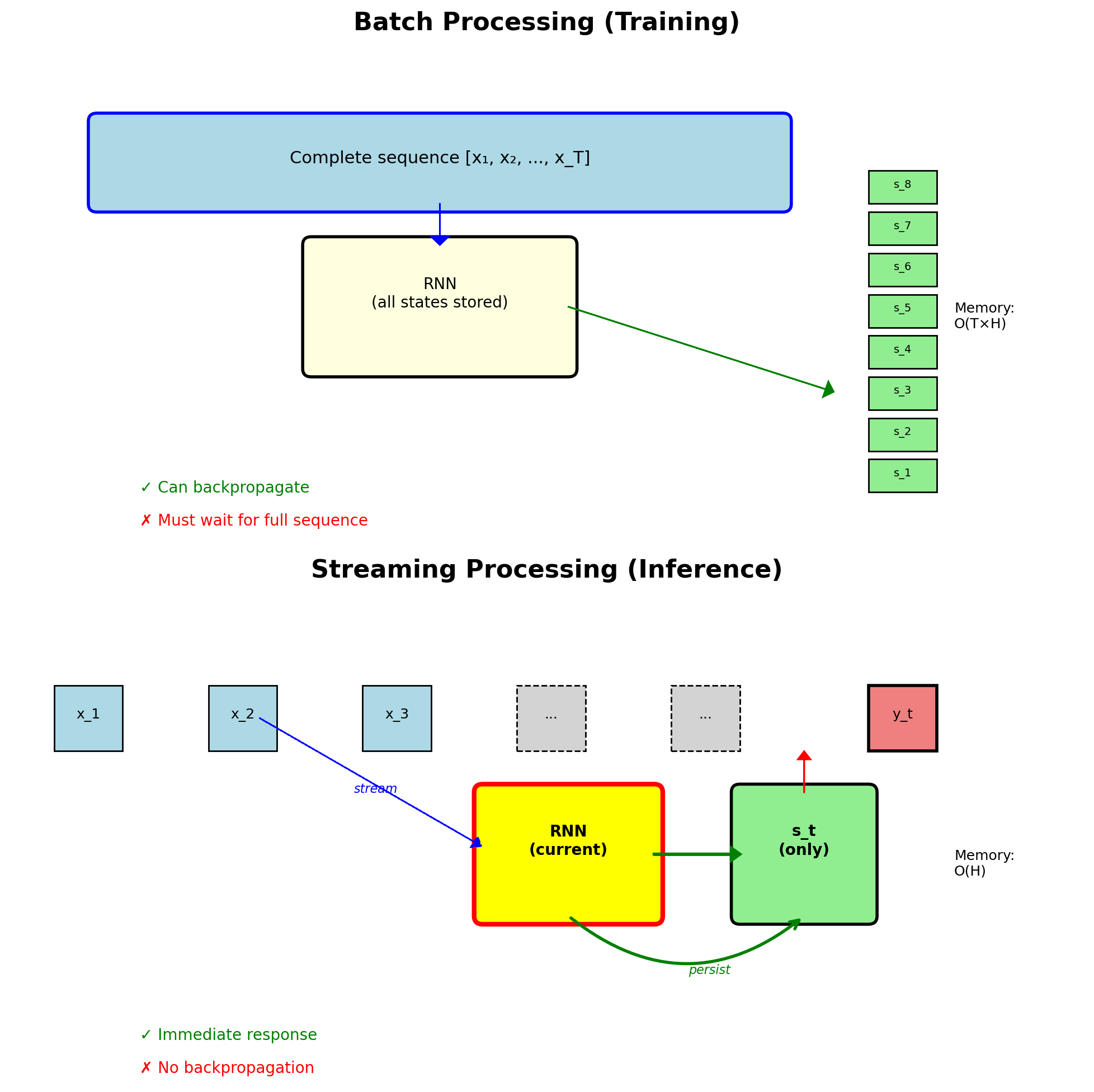

Batch Processing Converts to Streaming Inference

Training mode (fixed length):

- Process complete sequence

- Batch multiple sequences

- Store all hidden states

- Backprop through entire sequence

Streaming mode (infinite length):

- Process one token at a time

- Maintain persistent hidden state

- No storage of history

- Real-time response

Implementation pattern:

# Training (batch)

states = []

for x in sequence:

h = rnn_cell(h, x)

states.append(h)

# Streaming (online)

class StreamingRNN:

def __init__(self):

self.hidden = None

def step(self, x):

if self.hidden is None:

self.hidden = zeros(H)

self.hidden = rnn_cell(self.hidden, x)

return output(self.hidden)Applications: Live transcription, real-time translation, online monitoring

PyTorch RNN Implementation

PyTorch RNN layers

# Basic RNN layer

rnn = torch.nn.RNN(

input_size=D, # Input dimension

hidden_size=H, # Hidden dimension

num_layers=1, # Number of stacked RNNs

nonlinearity='tanh', # or 'relu'

bias=True,

batch_first=False, # (seq, batch, features)

dropout=0.0, # Between layers (if num_layers > 1)

bidirectional=False

)

# Forward pass

output, h_n = rnn(input, h_0)Input/Output dimensions:

# Shapes (batch_first=False):

input: (T, B, D) # seq_len, batch, input_size

h_0: (L, B, H) # num_layers, batch, hidden_size

output:(T, B, H) # seq_len, batch, hidden_size

h_n: (L, B, H) # num_layers, batch, hidden_size

# If bidirectional=True:

output:(T, B, 2*H)

h_n: (2*L, B, H)RNN Cell (single timestep):

LSTM Implementation:

lstm = torch.nn.LSTM(

input_size=D,

hidden_size=H,

num_layers=1,

bias=True,

batch_first=False,

dropout=0.0,

bidirectional=False,

proj_size=0 # v1.8+: projection layer

)

# Returns tuple: (h_n, c_n)

output, (h_n, c_n) = lstm(input, (h_0, c_0))

# Shapes:

# c_0, c_n: (L, B, H) # cell state

# All others same as RNNGRU Implementation:

gru = torch.nn.GRU(

input_size=D,

hidden_size=H,

num_layers=1,

bias=True,

batch_first=False,

dropout=0.0,

bidirectional=False

)

output, h_n = gru(input, h_0)

# Same shapes as RNNComparing parameters:

- RNN: H(H+D+1) parameters

- LSTM: 4H(H+D+1) parameters (4 gates)

- GRU: 3H(H+D+1) parameters (3 gates)

Stacking layers:

# Multi-layer RNN

deep_rnn = torch.nn.RNN(

input_size=D,

hidden_size=H,

num_layers=3, # Stack 3 RNNs

dropout=0.2 # Between layers 1→2, 2→3

)Bidirectional:

RNN Forward Pass Processes Sequences Step-by-Step

# Simplified pseudocode

def rnn_forward(x_sequence, Wss, Wxs, Wsy):

s = zeros(H) # Initial state

outputs = []

for x_t in x_sequence:

# Update hidden state

s = tanh(Wss @ s + Wxs @ x_t + bs)

# Compute output

y_t = Wsy @ s + by

outputs.append(y_t)

return outputs- Sequential: h_t depends on h_{t-1} (cannot parallelize across time)

- Stateful: Each step depends on previous

- Markovian: Only needs previous hidden state

Sequential requirement:

- State dependency: \(\mathbf{s}_t\) requires \(\mathbf{s}_{t-1}\)

- No skip connections in vanilla RNN

- Architectural limitation

Full PyTorch implementation

import torch

import torch.nn as nn

class VanillaRNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super().__init__()

self.hidden_size = hidden_size

# Weight matrices

self.Wxs = nn.Linear(input_size, hidden_size)

self.Wss = nn.Linear(hidden_size, hidden_size, bias=False)

self.Wsy = nn.Linear(hidden_size, output_size)

def forward(self, x, s0=None):

batch_size, seq_len, _ = x.shape

if s0 is None:

s = torch.zeros(batch_size, self.hidden_size)

else:

s = s0

outputs = []

states = []

for t in range(seq_len):

s = torch.tanh(

self.Wss(s) + self.Wxs(x[:, t, :])

)

y = self.Wsy(s)

states.append(s)

outputs.append(y)

return torch.stack(outputs, dim=1), torch.stack(states, dim=1)Backpropagation Requires Storing All Hidden States

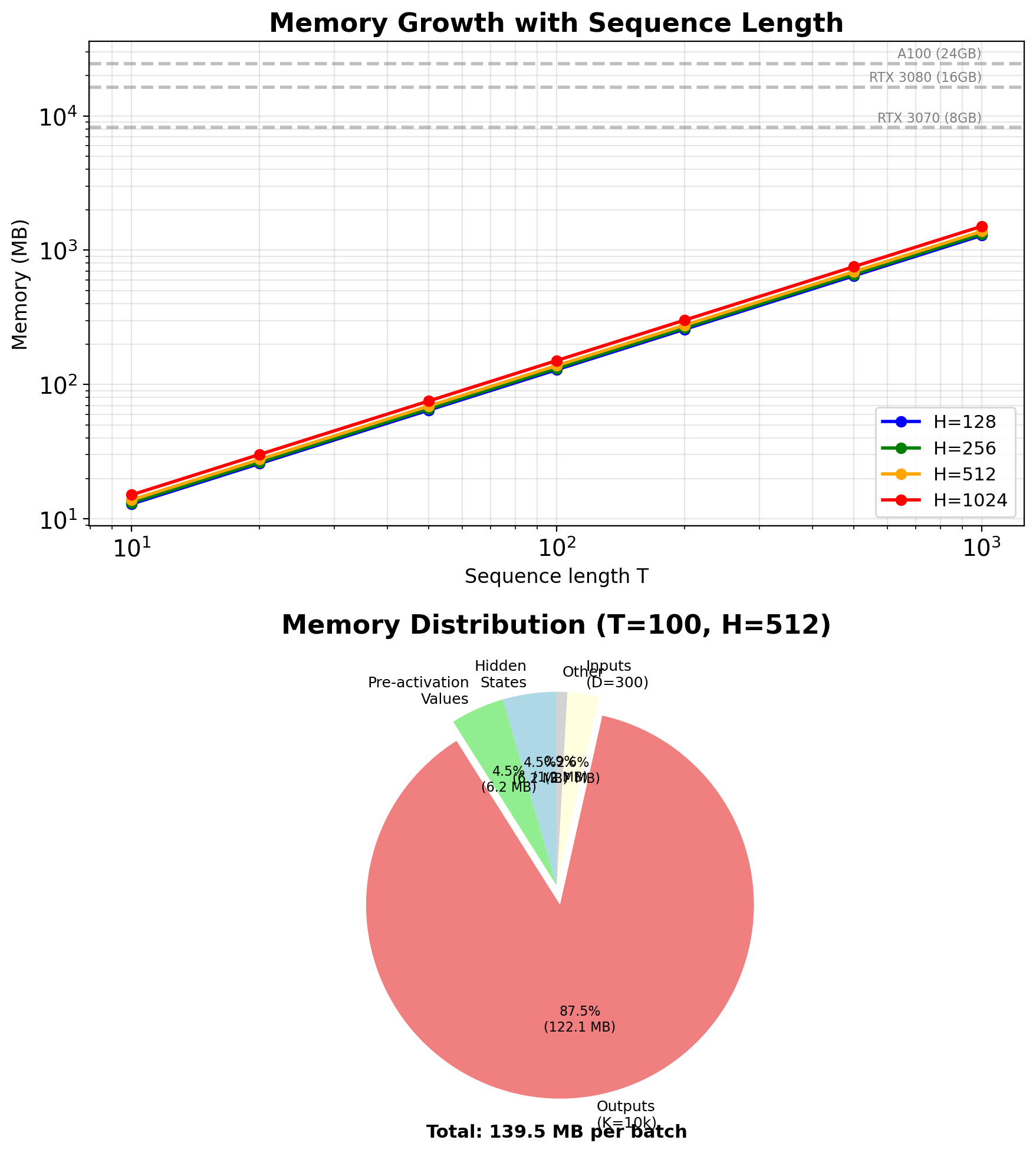

Forward pass must store:

- All hidden states: \(\{\mathbf{s}_0, \mathbf{s}_1, ..., \mathbf{s}_T\}\)

- All activations (pre-tanh): \(\{\mathbf{z}_1, ..., \mathbf{z}_T\}\)

- Input sequence: \(\{\mathbf{x}_1, ..., \mathbf{x}_T\}\)

- Output sequence: \(\{\mathbf{y}_1, ..., \mathbf{y}_T\}\)

Memory complexity:

- States: \(O(T \times H)\)

- Activations: \(O(T \times H)\)

- Inputs: \(O(T \times D)\)

- Outputs: \(O(T \times K)\)

- Total: \(O(T \times (2H + D + K))\)

Example (batch_size=32):

- T=100, H=512, D=300, K=10000

- States: 100 × 512 × 32 × 4 bytes = 6.5 MB

- Outputs: 100 × 10000 × 32 × 4 bytes = 128 MB

- Total per batch: ~140 MB

Memory optimization techniques:

- Gradient checkpointing (recompute vs store)

- Truncated BPTT (limit history)

- Mixed precision (FP16 vs FP32)

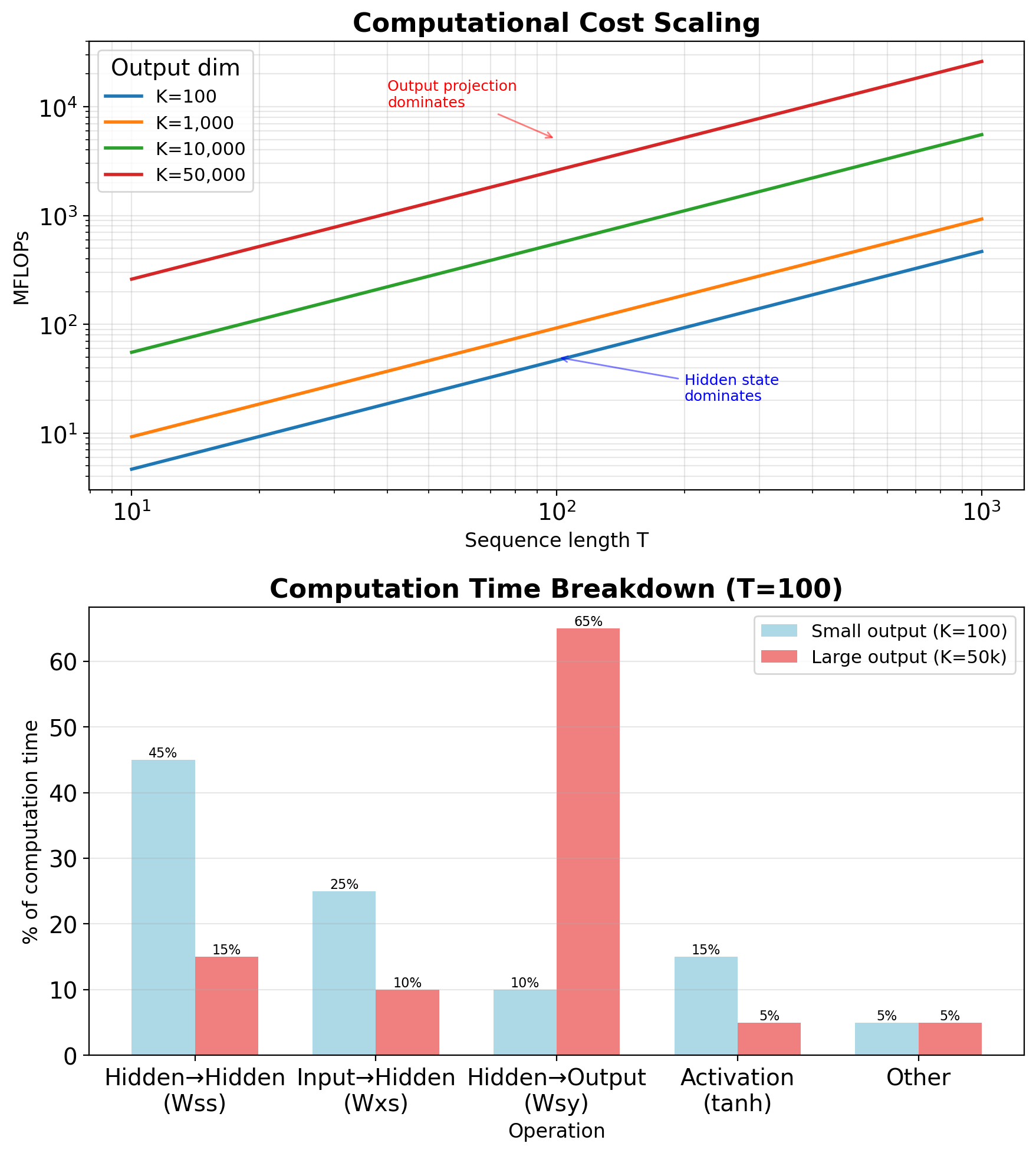

RNN Complexity Scales Linearly with Sequence Length

Matrix multiplications:

- \(\mathbf{W}_{ss} \mathbf{s}_{t-1}\): \(O(H^2)\)

- \(\mathbf{W}_{xs} \mathbf{x}_t\): \(O(HD)\)

- \(\mathbf{W}_{sy} \mathbf{s}_t\): \(O(KH)\)

Per timestep total: \(O(H^2 + HD + KH)\)

Full sequence: \(O(T \times (H^2 + HD + KH))\)

Bottlenecks by task:

- Language modeling (K=50k): Output projection dominates

- Sequence tagging (K=50): Hidden-to-hidden dominates

- Feature extraction (no output): \(H^2\) term dominates

Comparison with Transformers:

- RNN: \(O(T \times H^2)\) sequential

- Transformer: \(O(T^2 \times H)\) parallel

- Crossover point: T ≈ H

Parallelization constraints:

- Each step needs previous state

- No independence across time

- GPU underutilized (low parallelism)

Initial Hidden State Affects Network Behavior

Standard approach: Zero initialization

- Simple and unbiased

- No information at t=0

- Training optimizes for

Learned initial state:

self.s_0 = nn.Parameter(torch.randn(1, H))

# Broadcast for batch

s_0 = self.s_0.expand(batch_size, -1)- Adds H learnable parameters

- Can encode prior knowledge

- Same initialization for all sequences

Random initialization:

- Breaks symmetry

- Can help exploration during training

- Different for each sequence

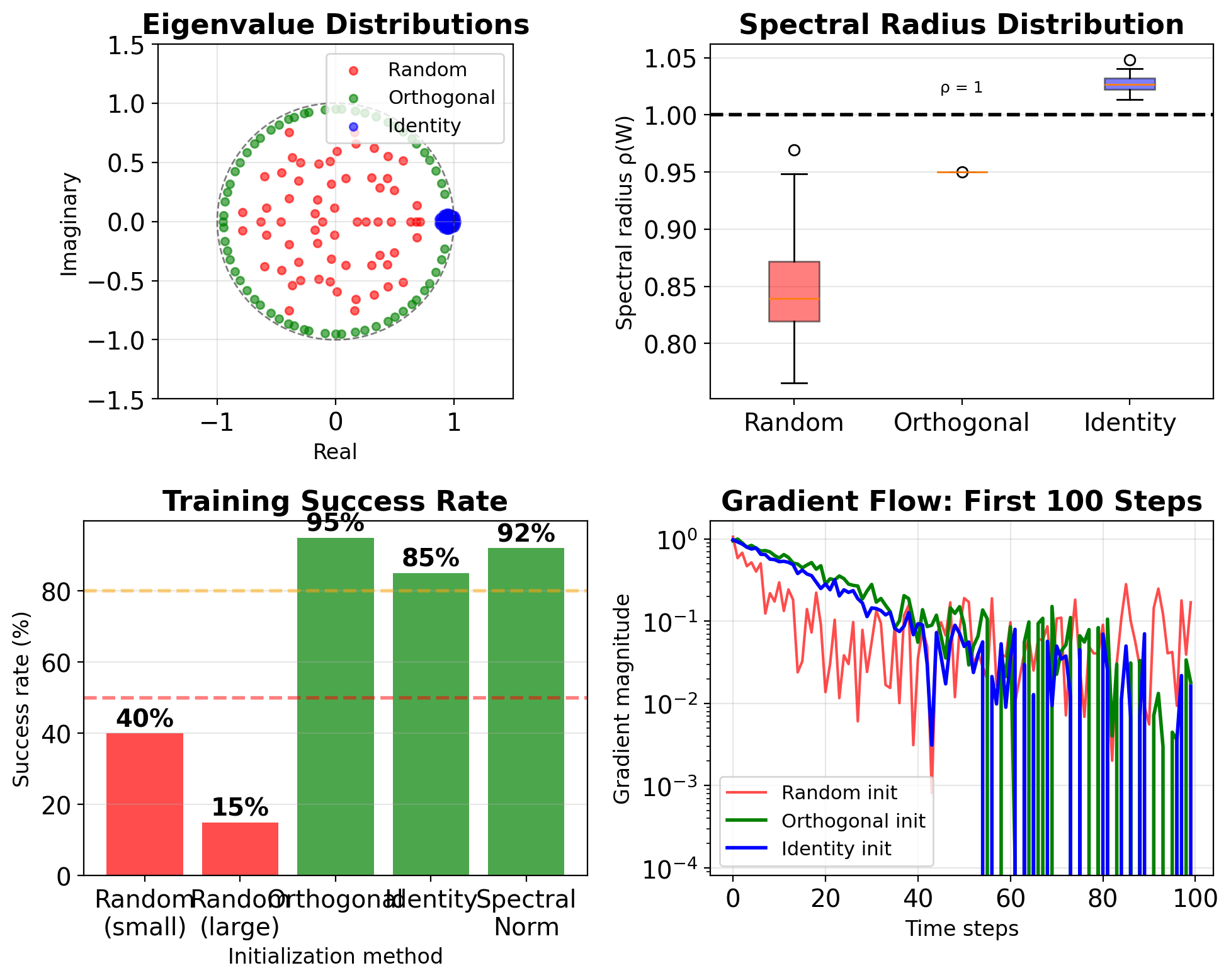

Weight initialization (specific to RNNs):

- Orthogonal \(\mathbf{W}_{ss}\): Preserves gradient norm

- Identity initialization: Start with copying behavior

- Spectral normalization: Control eigenvalues

Impact: Mainly affects first ~10 timesteps

PyTorch Provides Built-In RNN Modules

import torch

import torch.nn as nn

# Standard API usage

rnn = nn.RNN(

input_size=300,

hidden_size=512,

num_layers=1,

batch_first=True, # (B, T, D) format

dropout=0.0, # No dropout for single layer

bidirectional=False

)

# Input: (batch, seq_len, input_size)

x = torch.randn(32, 100, 300)

# Initial hidden: (num_layers, batch, hidden_size)

h_0 = torch.zeros(1, 32, 512)

# Forward pass

output, h_n = rnn(x, h_0)

# output: (32, 100, 512) - all hidden states

# h_n: (1, 32, 512) - final hidden state

# For variable length sequences

from torch.nn.utils.rnn import pack_padded_sequence

packed = pack_padded_sequence(

x, lengths, batch_first=True

)

output_packed, h_n = rnn(packed, h_0)- Optimized CUDA kernels

- Handles batching efficiently

- Built-in dropout support

Custom RNN cell (for debugging)

class CustomRNNCell(nn.Module):

"""Single RNN cell for step-by-step processing"""

def __init__(self, input_size, hidden_size):

super().__init__()

# Combined weight matrix for efficiency

self.weight_ih = nn.Linear(input_size, hidden_size)

self.weight_hh = nn.Linear(hidden_size, hidden_size)

def forward(self, x_t, h_prev):

"""Single timestep forward"""

h_new = torch.tanh(

self.weight_ih(x_t) + self.weight_hh(h_prev)

)

return h_new

# Usage in custom loop

cell = CustomRNNCell(300, 512)

h = torch.zeros(32, 512)

outputs = []

for t in range(seq_len):

h = cell(x[:, t, :], h)

outputs.append(h)

# Can add custom logic here:

# - Attention computation

# - Gradient clipping per step

# - Visualization/debugging

outputs = torch.stack(outputs, dim=1)Custom implementation use cases:

- Understanding/teaching

- Custom architectures

- Step-wise operations

- Debugging gradient flow

Backpropagation Through Time

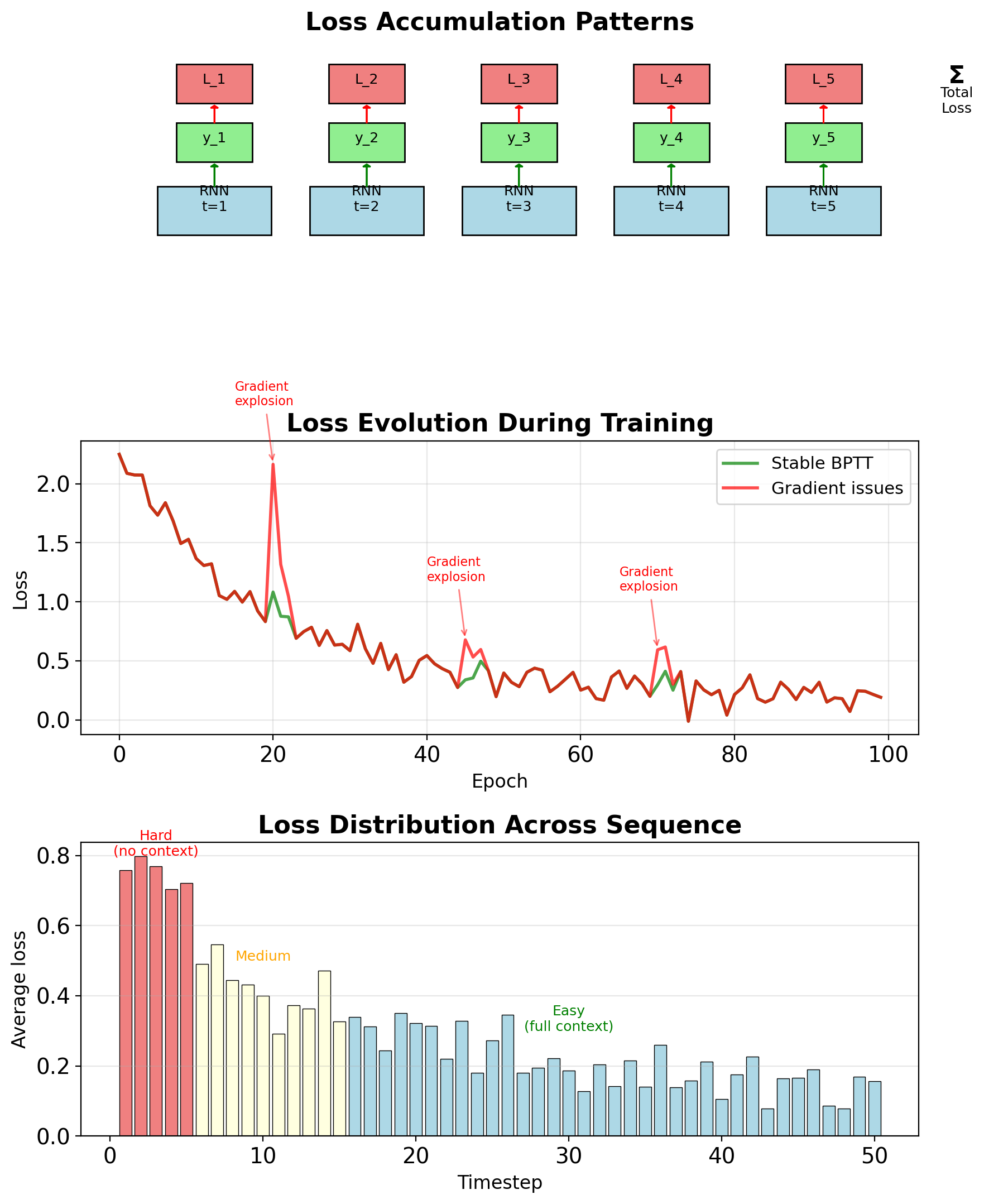

Sequence-Level Loss Computation

Total loss: \[L = \sum_{t=1}^T L_t(\mathbf{y}_t, \hat{\mathbf{y}}_t)\]

Common loss functions:

Classification: Cross-entropy per timestep \[L_t = -\sum_{k=1}^K y_{tk} \log \hat{y}_{tk}\]

Regression: MSE per timestep \[L_t = \|\mathbf{y}_t - \hat{\mathbf{y}}_t\|^2\]

Language modeling: Perplexity \[\text{PPL} = \exp\left(\frac{1}{T}\sum_t L_t\right)\]

Loss accumulation patterns:

- All timesteps (many-to-many): Sum all \(L_t\)

- Final timestep (many-to-one): Only \(L_T\)

- Masked positions: \(\sum_t m_t L_t\) where \(m_t \in \{0,1\}\)

Gradient starts from: \[\frac{\partial L}{\partial \mathbf{y}_t} = \frac{\partial L_t}{\partial \mathbf{y}_t}\]

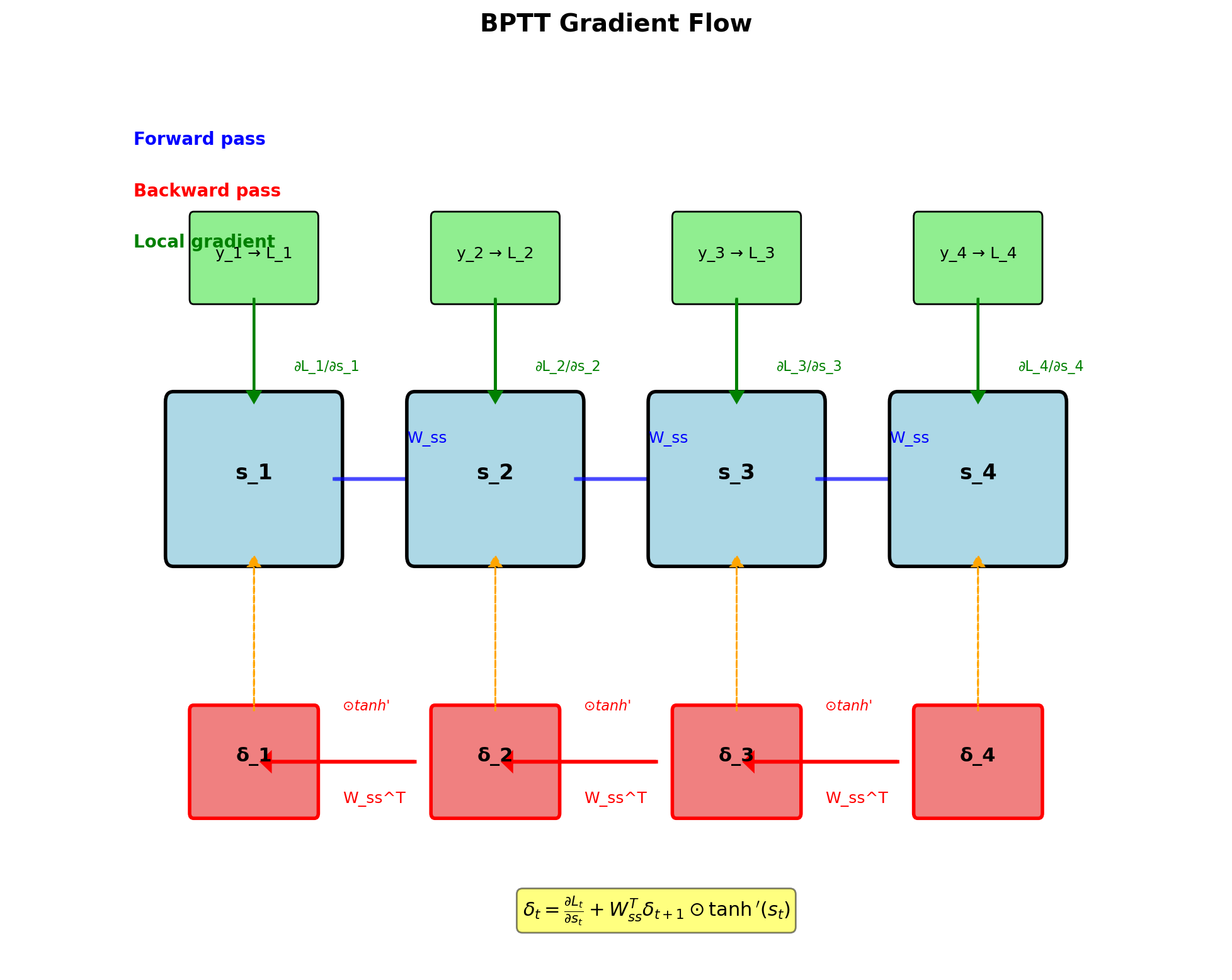

BPTT Gradient Recursion

Error signal at hidden state: \[\boldsymbol{\delta}_t = \frac{\partial L}{\partial \mathbf{s}_t}\]

Recursive computation: \[\boldsymbol{\delta}_t = \frac{\partial L_t}{\partial \mathbf{s}_t} + \mathbf{W}_{ss}^T \boldsymbol{\delta}_{t+1} \odot \tanh'(\mathbf{s}_t)\]

Components:

- Local gradient: \(\frac{\partial L_t}{\partial \mathbf{s}_t} = \mathbf{W}_{sy}^T \frac{\partial L_t}{\partial \mathbf{y}_t}\)

- Future gradient: \(\mathbf{W}_{ss}^T \boldsymbol{\delta}_{t+1}\)

- Activation derivative: \(\tanh'(\mathbf{s}_t) = 1 - \tanh^2(\mathbf{s}_t)\)

Boundary condition: \[\boldsymbol{\delta}_T = \frac{\partial L_T}{\partial \mathbf{s}_T}\]

Gradient flows backward through same path as forward state flow

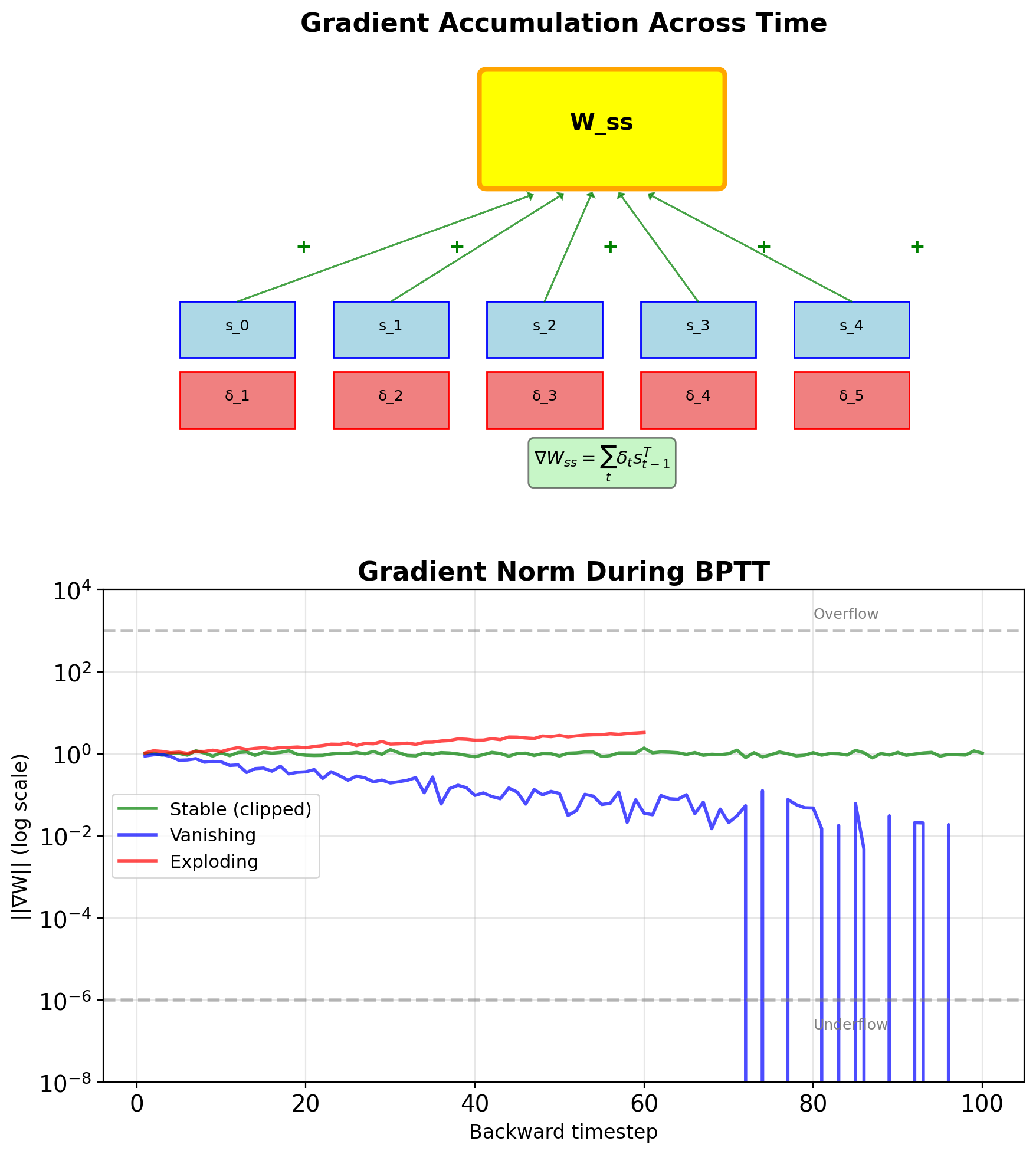

Gradient w.r.t. Weights

Weight gradient accumulation

Hidden-to-hidden weights: \[\frac{\partial L}{\partial \mathbf{W}_{ss}} = \sum_{t=1}^T \boldsymbol{\delta}_t \mathbf{s}_{t-1}^T\]

Input-to-hidden weights: \[\frac{\partial L}{\partial \mathbf{W}_{xs}} = \sum_{t=1}^T \boldsymbol{\delta}_t \mathbf{x}_t^T\]

Hidden-to-output weights: \[\frac{\partial L}{\partial \mathbf{W}_{sy}} = \sum_{t=1}^T \frac{\partial L_t}{\partial \mathbf{y}_t} \mathbf{s}_t^T\]

- Gradients sum across all timesteps

- Same weights receive gradients from entire sequence

- Long sequences → many gradient contributions

- Can lead to gradient explosion if not controlled

Computational pattern:

Computational Graph for BPTT

Truncated BPTT

Limiting gradient flow for efficiency

Motivation:

- Full BPTT: O(T²) memory and time

- Gradient vanishing makes long BPTT ineffective

- Need to process very long sequences

Truncated BPTT algorithm:

- Forward pass: Process full sequence

- Backward pass: Only last k steps

- Detach gradient at truncation point

def truncated_bptt(sequence, k=20):

states = []

for t in range(len(sequence)):

if t % k == 0:

# Detach: stop gradient flow

state = state.detach()

state = rnn_cell(state, sequence[t])

states.append(state)

if t % k == k-1:

# Backprop through last k steps

loss = compute_loss(states[-k:])

loss.backward()Trade-offs:

- k small (5-10): Fast but poor long-range

- k medium (20-35): Good balance

- k large (50+): Slow, diminishing returns

TBPTT Implementation

Chunking sequences for efficiency

def train_with_tbptt(model, sequence, chunk_size=35):

"""Truncated BPTT with chunking"""

hidden = None

total_loss = 0

# Process in chunks

for i in range(0, len(sequence), chunk_size):

# Get chunk

chunk = sequence[i:i+chunk_size]

# Detach hidden state (stop gradient)

if hidden is not None:

hidden = hidden.detach()

# Forward pass on chunk

output, hidden = model(chunk, hidden)

# Compute loss on chunk

loss = criterion(output, targets[i:i+chunk_size])

# Backward pass (only through chunk)

loss.backward()

# Apply gradient clipping

torch.nn.utils.clip_grad_norm_(

model.parameters(), max_norm=5.0

)

# Update weights

optimizer.step()

optimizer.zero_grad()

total_loss += loss.item()

return total_lossImplementation details:

- Hidden state carries forward

- Gradient detached at boundaries

- Each chunk updates independently

Advanced TBPTT strategies

class StatefulRNN:

"""Maintains hidden state across batches"""

def __init__(self, model, batch_size):

self.model = model

self.hidden = None

self.batch_size = batch_size

def reset_hidden(self):

"""Reset at epoch boundaries"""

self.hidden = None

def forward_chunk(self, chunk, backprop_steps):

"""Process chunk with controlled BPTT"""

# Detach old hidden state

if self.hidden is not None:

# Keep last k steps for gradient

if backprop_steps > 0:

# Allow gradient through last k

self.hidden = self.hidden[-backprop_steps:]

else:

# Full detach

self.hidden = self.hidden.detach()

# Forward pass

output, self.hidden = self.model(chunk, self.hidden)

return output

# Usage for language modeling

model = StatefulRNN(rnn, batch_size=32)

for epoch in range(num_epochs):

model.reset_hidden()

for batch in dataloader:

output = model.forward_chunk(batch, k=35)

loss = compute_loss(output, targets)

loss.backward()PyTorch RNN Implementation Details

Built-in features for BPTT

import torch

import torch.nn as nn

# Standard RNN with PyTorch

class LanguageModel(nn.Module):

def __init__(self, vocab_size, hidden_size):

super().__init__()

self.embedding = nn.Embedding(vocab_size, hidden_size)

self.rnn = nn.RNN(hidden_size, hidden_size,

batch_first=True)

self.output = nn.Linear(hidden_size, vocab_size)

def forward(self, x, hidden=None):

embed = self.embedding(x)

rnn_out, hidden = self.rnn(embed, hidden)

output = self.output(rnn_out)

return output, hidden

# Training with gradient clipping

model = LanguageModel(10000, 512)

optimizer = torch.optim.Adam(model.parameters())

for batch in dataloader:

# Forward pass

output, _ = model(batch.input)

loss = F.cross_entropy(

output.reshape(-1, 10000),

batch.target.reshape(-1)

)

# Backward pass

loss.backward()

# Gradient clipping - standard for RNN stability

torch.nn.utils.clip_grad_norm_(

model.parameters(), max_norm=5.0

)

optimizer.step()

optimizer.zero_grad()Debugging gradient flow

def check_gradient_health(model, loss):

"""Monitor gradient statistics"""

loss.backward(retain_graph=True)

grad_stats = {}

for name, param in model.named_parameters():

if param.grad is not None:

grad = param.grad.data

grad_stats[name] = {

'mean': grad.mean().item(),

'std': grad.std().item(),

'max': grad.max().item(),

'min': grad.min().item(),

'norm': grad.norm().item()

}

# Check for issues

for name, stats in grad_stats.items():

# Vanishing

if stats['norm'] < 1e-6:

print(f"WARNING: Vanishing gradient in {name}")

# Exploding

if stats['norm'] > 100:

print(f"WARNING: Exploding gradient in {name}")

# NaN/Inf

if torch.isnan(grad).any() or torch.isinf(grad).any():

print(f"ERROR: NaN/Inf in {name}")

return grad_stats

# Visualize gradient flow

import matplotlib.pyplot as plt

def plot_gradient_flow(named_parameters):

ave_grads = []

layers = []

for n, p in named_parameters:

if p.grad is not None:

layers.append(n)

ave_grads.append(p.grad.abs().mean().cpu())

plt.plot(ave_grads, alpha=0.7)

plt.hlines(0, 0, len(ave_grads)+1, linewidth=1)

plt.xticks(range(len(ave_grads)), layers, rotation="vertical")

plt.xlim(xmin=0, xmax=len(ave_grads))

plt.xlabel("Layers")

plt.ylabel("Average gradient")

plt.title("Gradient flow")

plt.grid(True)Bidirectional RNNs

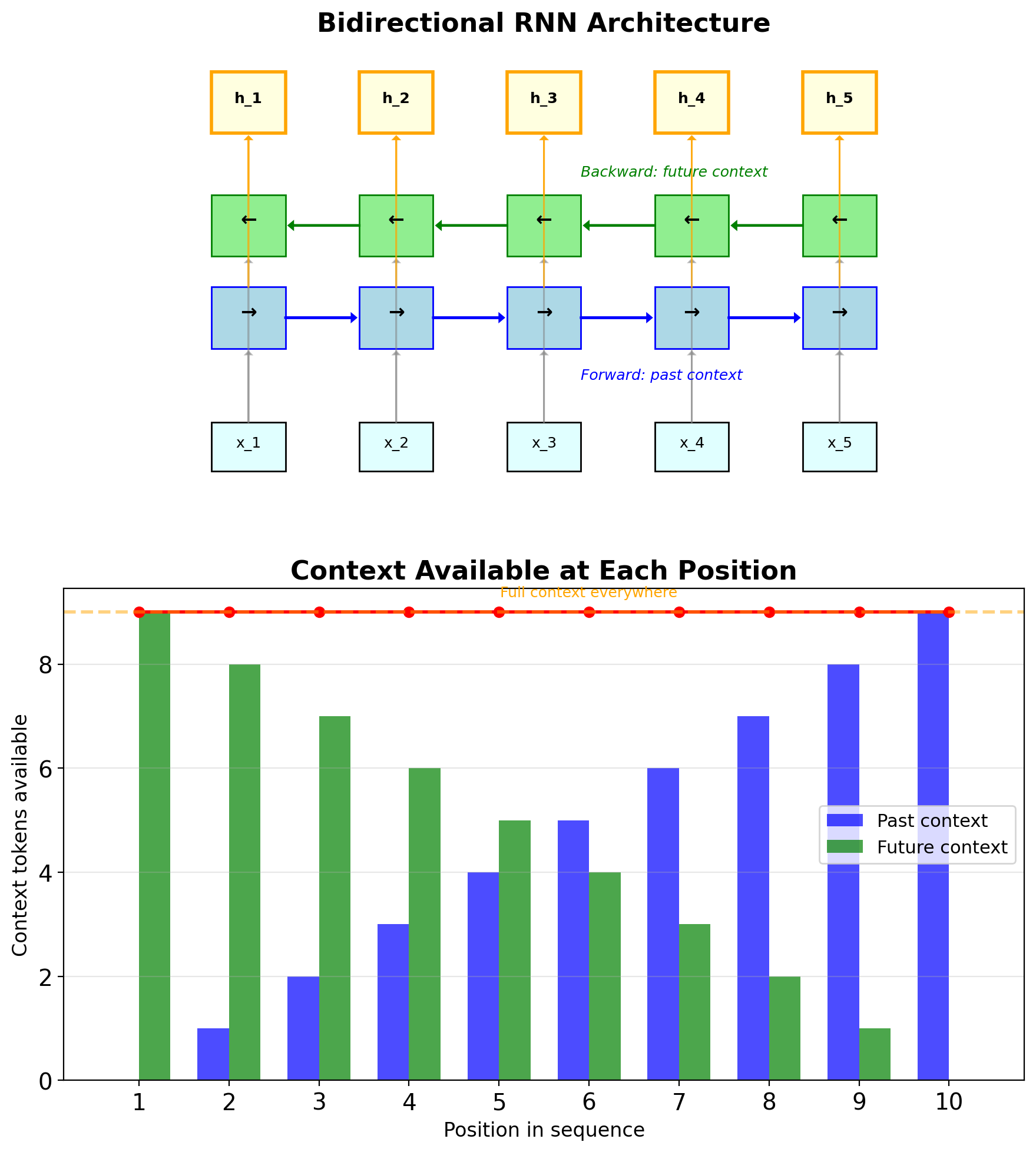

Processing sequences in both directions

Architecture:

- Forward RNN: Processes \(x_1, x_2, ..., x_T\)

- Backward RNN: Processes \(x_T, x_{T-1}, ..., x_1\)

- Concatenate or sum hidden states

\[\vec{\mathbf{s}}_t = \text{RNN}_{\rightarrow}(x_t, \vec{\mathbf{s}}_{t-1})\] \[\overleftarrow{\mathbf{s}}_t = \text{RNN}_{\leftarrow}(x_t, \overleftarrow{\mathbf{s}}_{t+1})\] \[\mathbf{s}_t = [\vec{\mathbf{s}}_t; \overleftarrow{\mathbf{s}}_t]\]

- Full context at every position

- Better for sequence labeling

- 2× parameters and computation

Limitations:

- Cannot use for online/streaming

- Need full sequence before processing

- Not suitable for generation tasks

# PyTorch implementation

birnn = nn.RNN(input_size, hidden_size,

bidirectional=True,

batch_first=True)

# Output size: (batch, seq_len, 2*hidden_size)Common applications:

- Named entity recognition

- Part-of-speech tagging

- Speech recognition (offline)

Gradient Flow Analysis

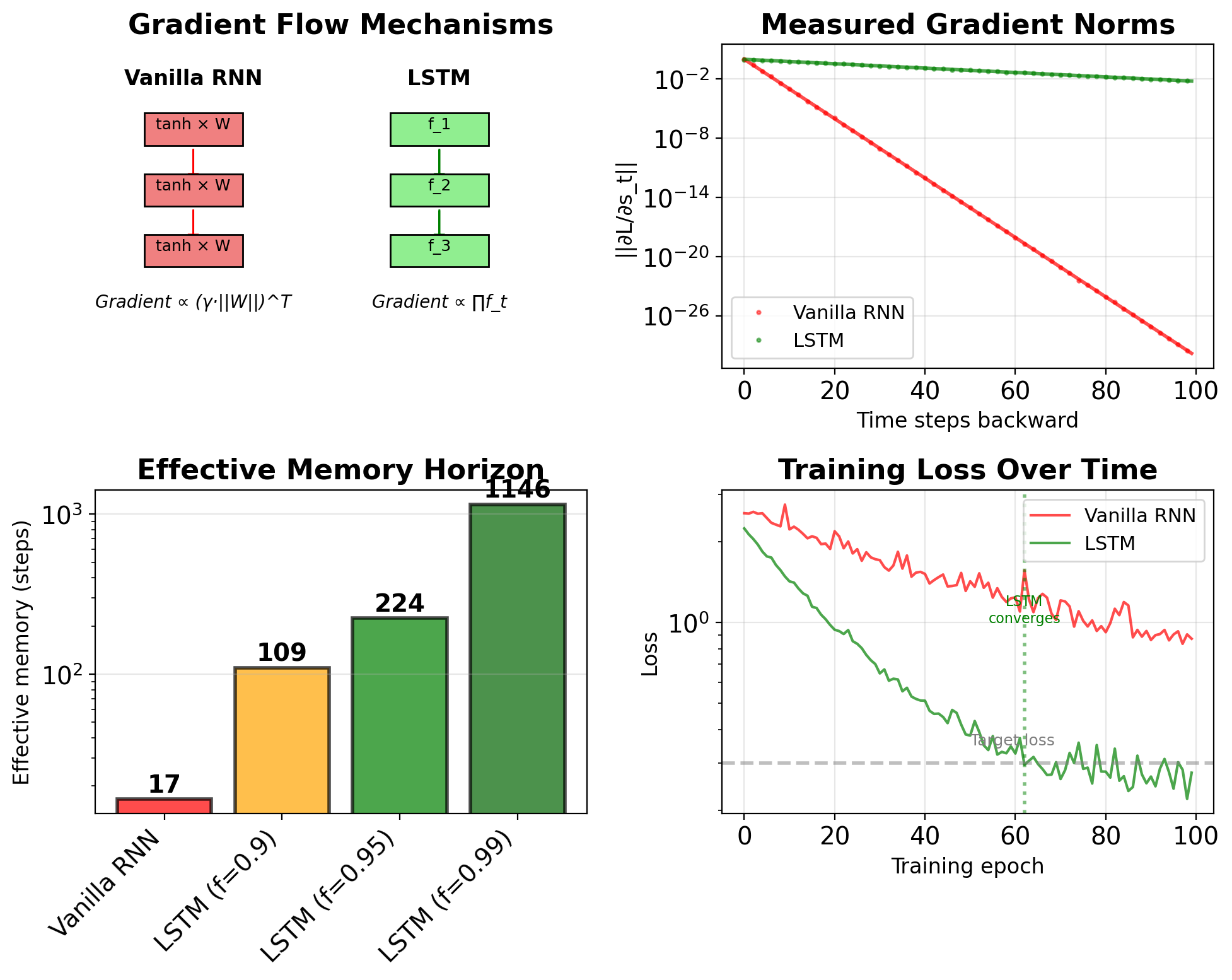

Vanishing Gradients in RNNs

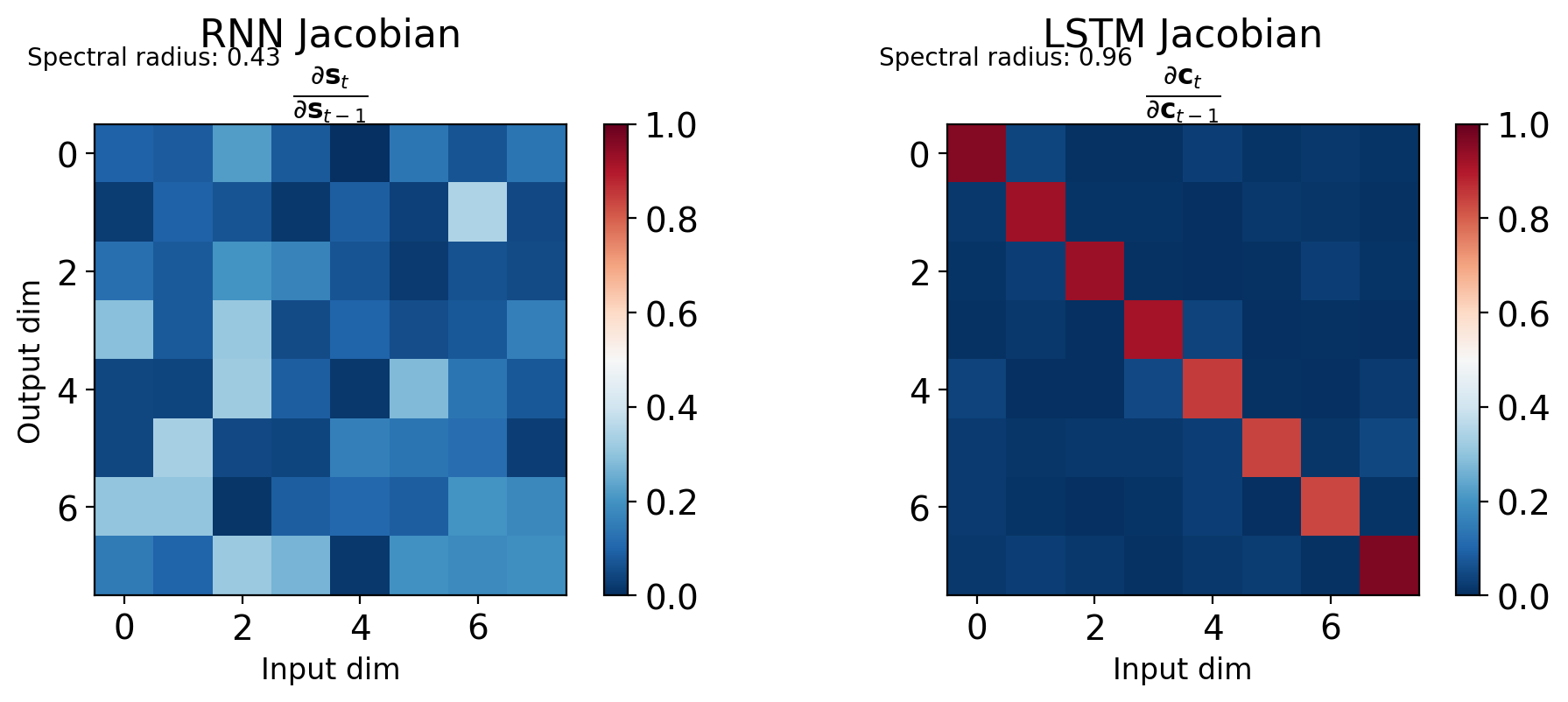

BPTT Gradient Product Expansion

For loss at time T, gradient to time k: \[\frac{\partial L}{\partial \mathbf{s}_k} = \frac{\partial L}{\partial \mathbf{s}_T} \prod_{t=k+1}^{T} \frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}}\]

Each temporal Jacobian: \[\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}} = \text{diag}(\tanh'(\mathbf{z}_t)) \cdot \mathbf{W}_{ss}\]

where \(\mathbf{z}_t = \mathbf{W}_{ss}\mathbf{s}_{t-1} + \mathbf{W}_{xs}\mathbf{x}_t + \mathbf{b}\)

Product of T-k Jacobians: \[\prod_{t=k+1}^{T} \left[\text{diag}(\tanh'(\mathbf{z}_t)) \cdot \mathbf{W}_{ss}\right]\]

Each factor shrinks gradient:

- \(|\tanh'(z)| \leq 1\) always

- Need \(\|\mathbf{W}_{ss}\| < 1\) for stability

- Product → 0 exponentially

Gradient decay scenarios:

| Configuration | \(\gamma = \|\mathbf{W}\| \cdot \max(\tanh')\) | Gradient after T steps | Effective memory |

|---|---|---|---|

| Strong decay | 0.72 | \(0.72^T\) | ~7 steps |

| Typical RNN | 0.86 | \(0.86^T\) | ~15 steps |

| Well-initialized | 0.93 | \(0.93^T\) | ~30 steps |

| Marginal stability | 0.99 | \(0.99^T\) | ~100 steps |

Thresholds:

- Machine precision: \(\approx 10^{-6}\) (float32)

- 1% gradient strength: Practical learning limit

- Underflow at T=20: \(0.86^{20} \approx 5 \times 10^{-2}\)

- Underflow at T=50: \(0.86^{50} \approx 8 \times 10^{-4}\)

Empirical measurements (Bengio et al., 1994):

- Simple tasks: 10-15 steps maximum

- Language modeling: 5-10 words effective context

- Without careful initialization: 5-7 steps typical

Formal Bound on Gradient Norm

Starting from: \[\left\|\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}}\right\| = \|\text{diag}(\tanh'(\mathbf{z}_t)) \cdot \mathbf{W}_{ss}\|\]

Using submultiplicativity: \[\left\|\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}}\right\| \leq \|\text{diag}(\tanh'(\mathbf{z}_t))\| \cdot \|\mathbf{W}_{ss}\|\]

Bounds:

- \(\|\text{diag}(\tanh'(\mathbf{z}_t))\| = \max_i |\tanh'(z_i)| \leq 1\)

- Define \(\gamma = \|\mathbf{W}_{ss}\| \cdot \max_t \|\text{diag}(\tanh'(\mathbf{z}_t))\|\)

Final bound: \[\left\|\frac{\partial L}{\partial \mathbf{s}_k}\right\| \leq \gamma^{T-k} \left\|\frac{\partial L}{\partial \mathbf{s}_T}\right\|\]

Gradient behavior depends on \(\gamma^{T-k}\):

- \(\gamma < 1\): Gradients decay as \(\gamma^{T-k} \rightarrow 0\)

- \(\gamma > 1\): Gradients grow as \(\gamma^{T-k} \rightarrow \infty\)

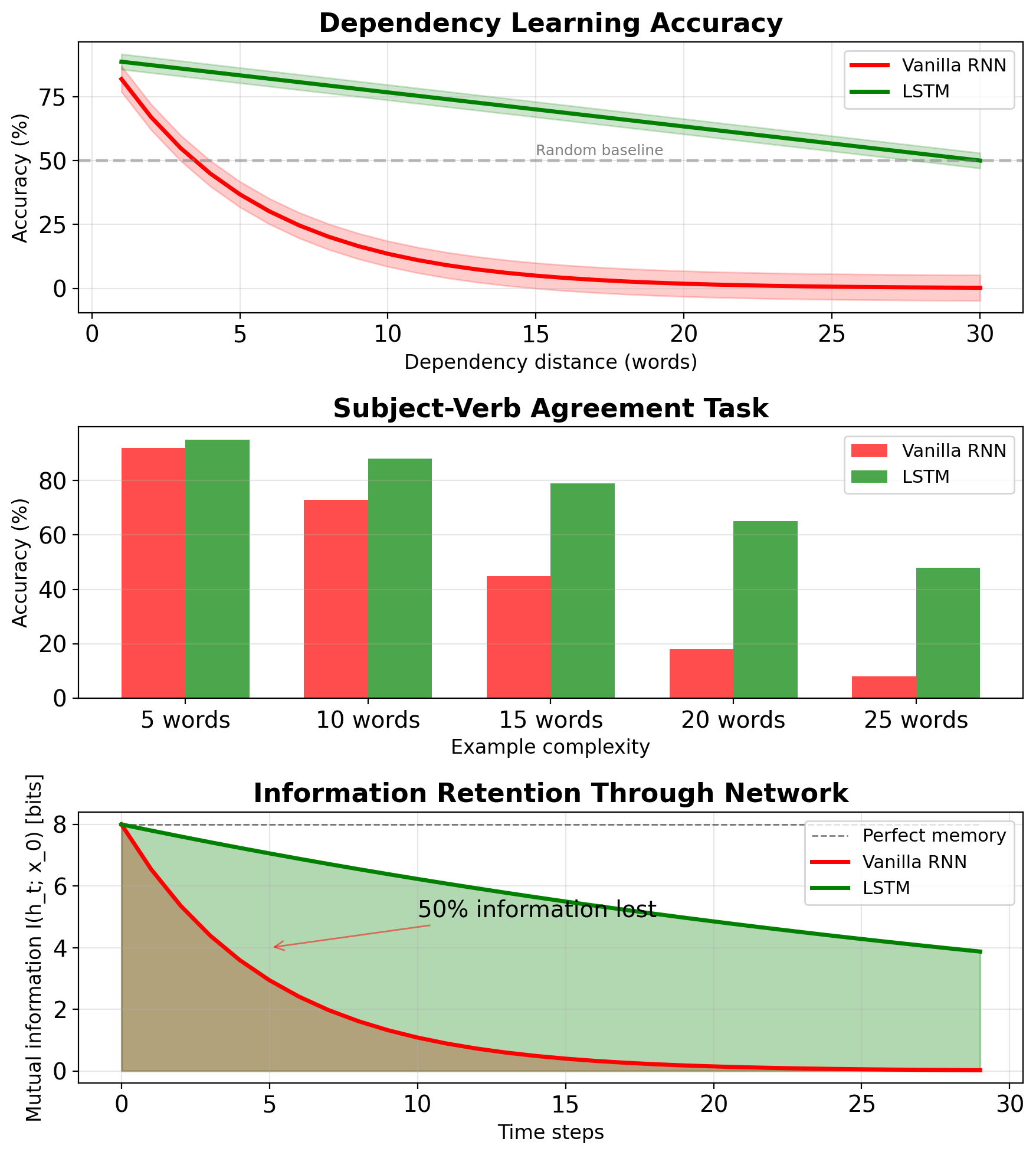

- Effective memory: \(T_{eff} \approx \log(\epsilon)/\log(\gamma)\) steps

Empirically measured:

- Vanilla RNN: 10-20 steps effective memory

- Copy task: Performance drops to chance after ~15 steps

- Language modeling: Effective range 10-20 words (Bengio et al., 1994)

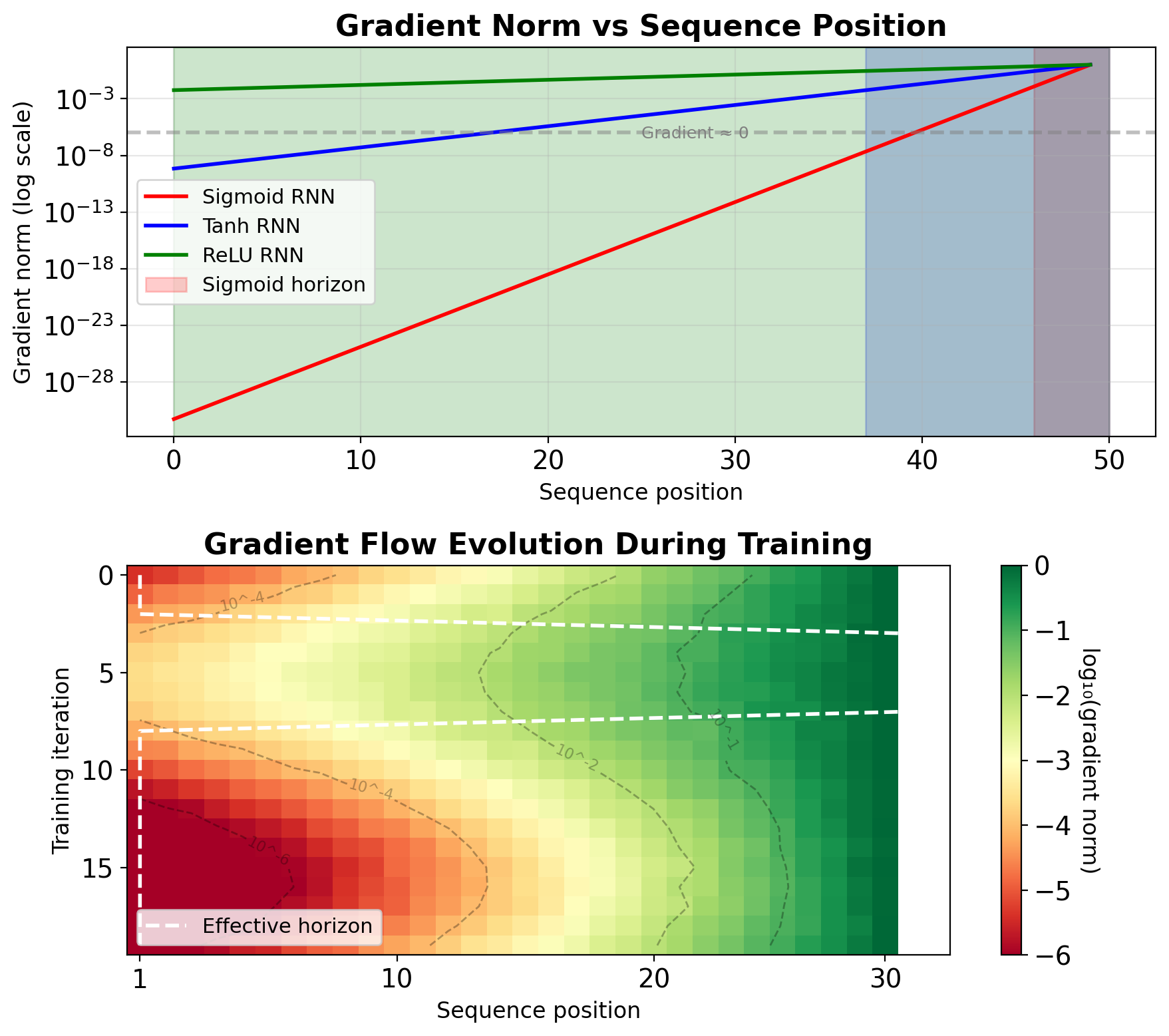

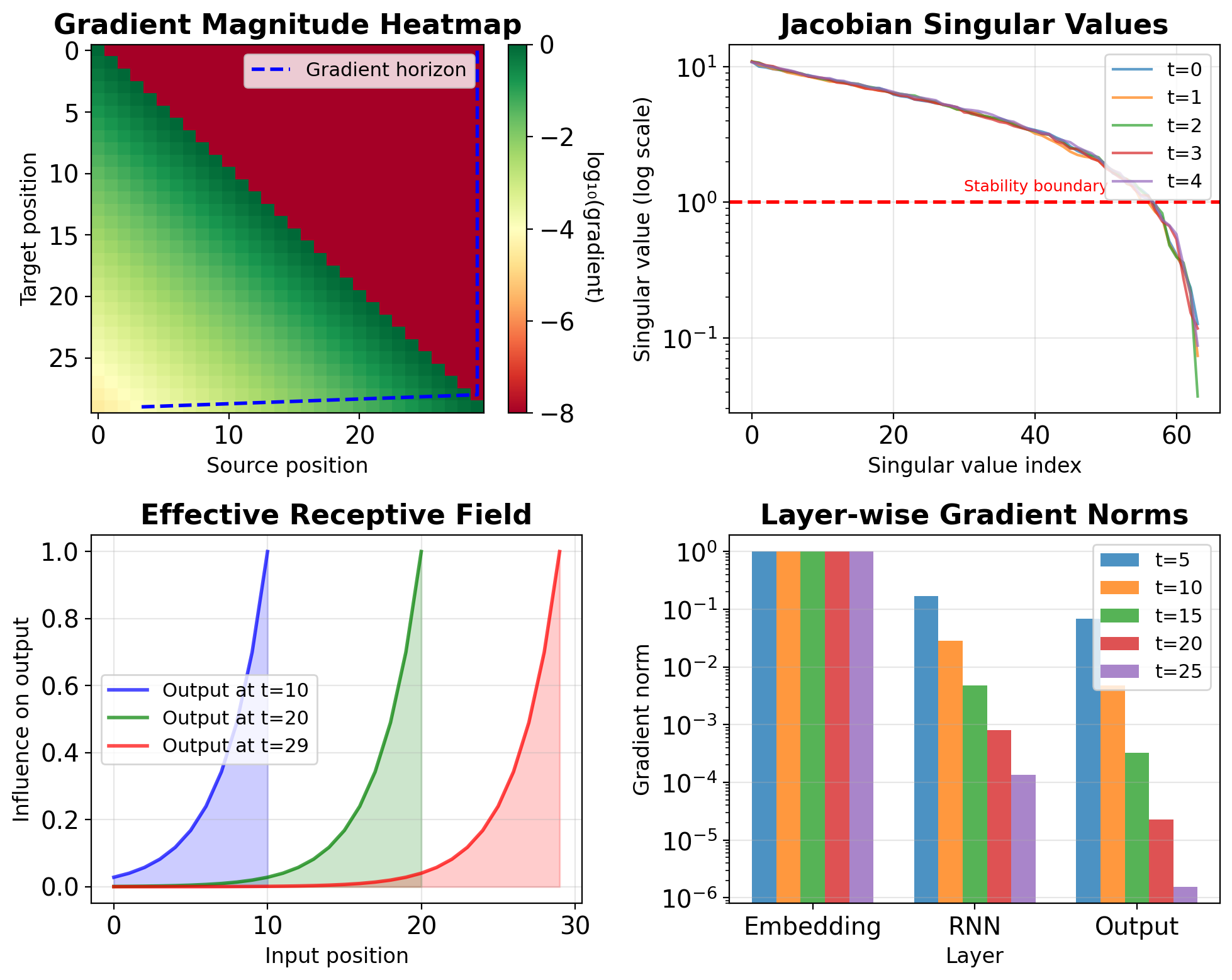

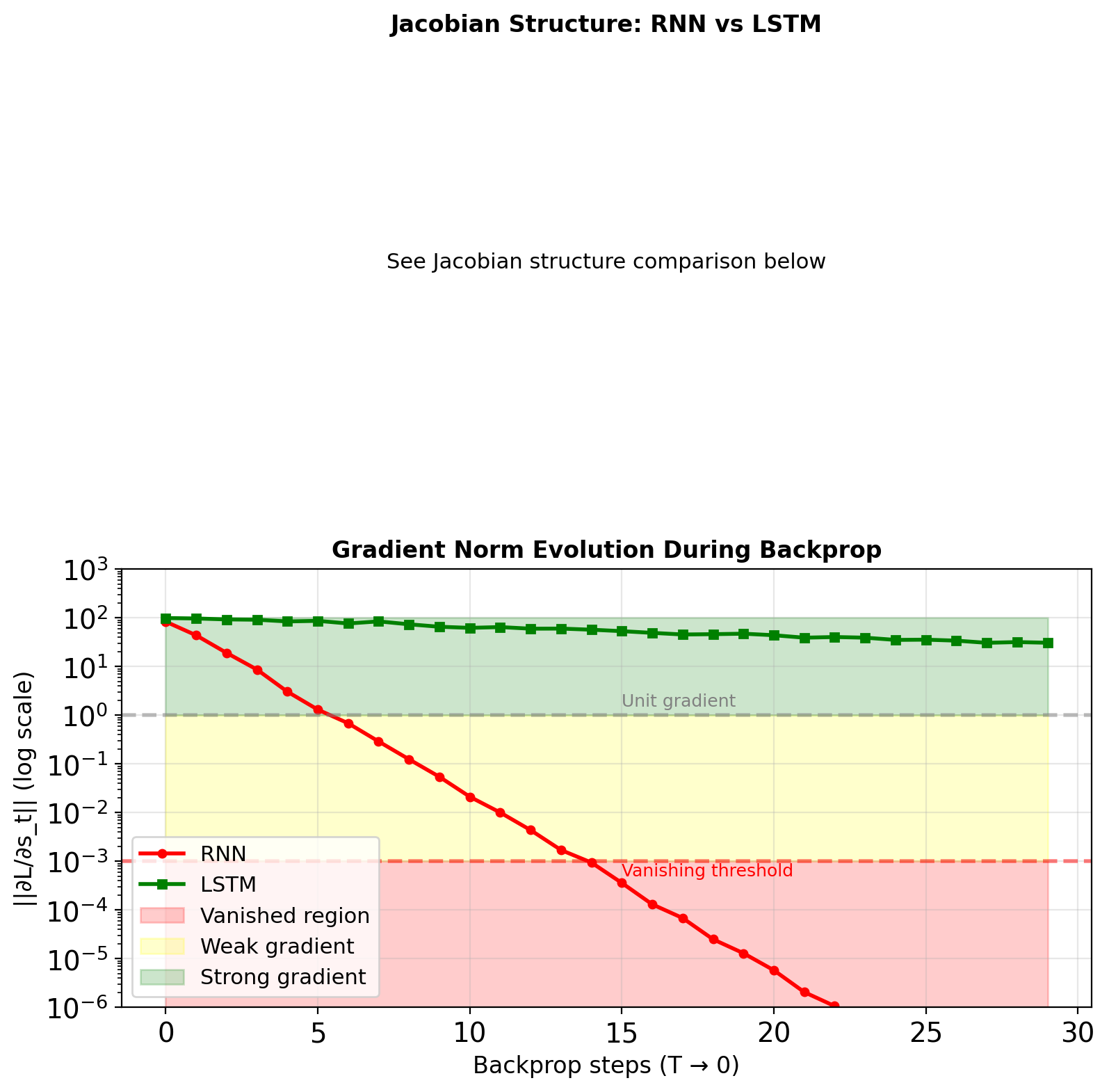

Gradients Decay Exponentially with Time Steps

Experiment setup:

- Train RNN on sequence length T=100

- Measure gradient norm at each position

- Track over training iterations

- Distance effect: \(\|\nabla_{\mathbf{s}_k}\| \propto \gamma^{T-k}\)

- Effective horizon: ~10-20 steps for vanilla RNN

- Network ignores early inputs

Measured decay rates:

- Sigmoid RNN: \(\gamma \approx 0.23\) → T_eff ≈ 4 steps

- Tanh RNN: \(\gamma \approx 0.65\) → T_eff ≈ 13 steps

- ReLU RNN: \(\gamma \approx 0.9\) → T_eff ≈ 63 steps (but unstable)

Practical implication: Cannot learn dependencies beyond T_eff

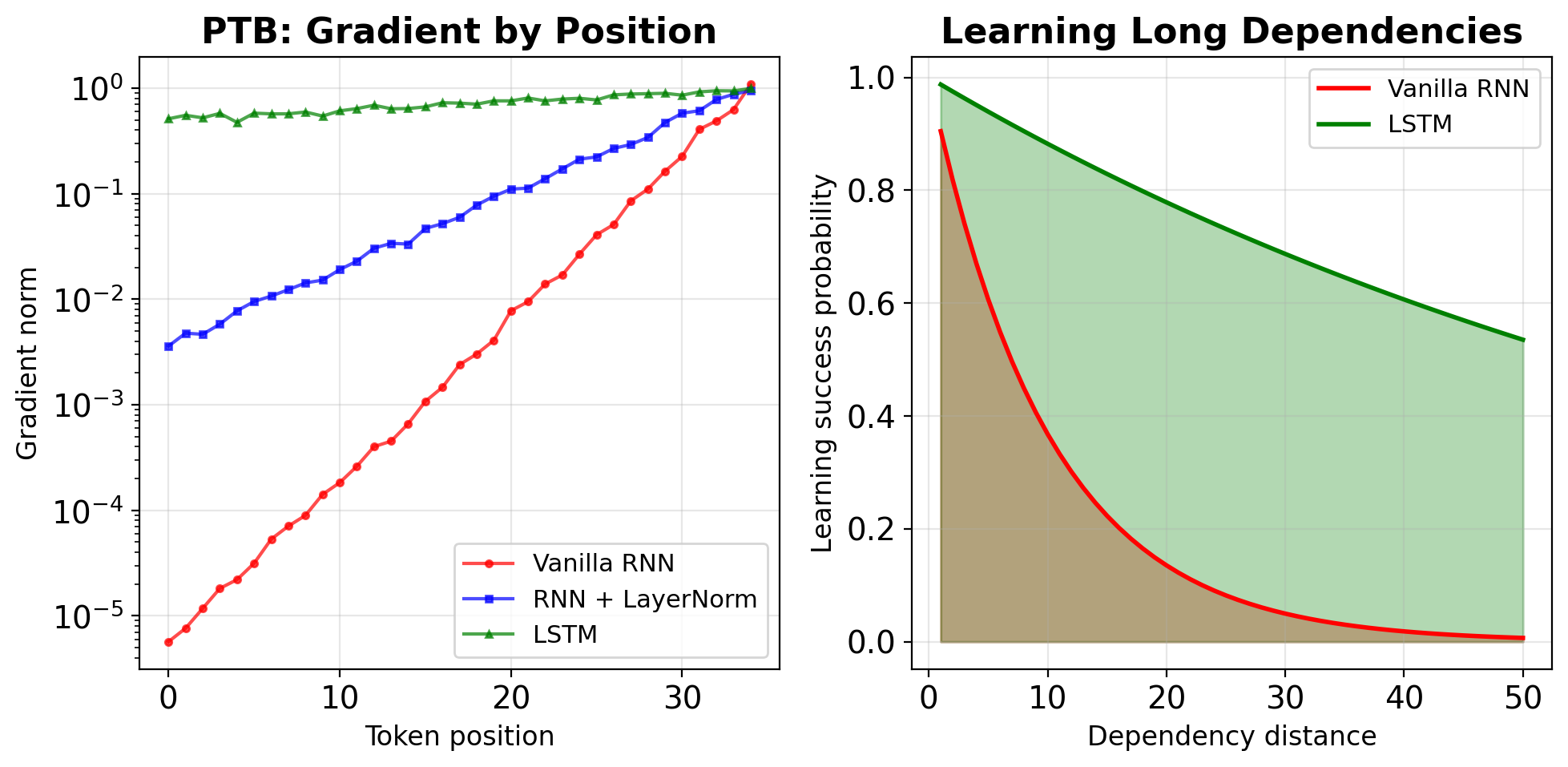

Training Dynamics Show Rapid Gradient Attenuation

Setup: Penn Treebank language modeling

# Model configuration

hidden_size = 128

num_layers = 1

sequence_length = 35

vocab_size = 10000

# Training

batch_size = 32

learning_rate = 1.0Gradient measurements:

- Average over 100 batches

- Track position-wise gradient norms

- Compare different architectures

Results:

- Vanilla RNN: 90% gradient loss after 15 steps

- Layer norm helps: Extends to ~25 steps

- Still inadequate: Language needs 50+ steps

- LSTM necessary: Maintains gradients 100+ steps

Vanishing Gradients Limit Long-Term Dependencies

Observable behaviors:

- Recency bias: Recent inputs dominate

- Ignored prefixes: Early tokens have no impact

- Short-range patterns only: N-grams, not syntax

Quantified impact (word prediction task):

Dependency | Vanilla RNN | LSTM

-----------|-------------|------

1-5 words | 85% acc | 87% acc

5-10 words | 45% acc | 78% acc

10-20 words| 12% acc | 65% acc

20+ words | ~random | 43% accFailure modes:

- Cannot track subject-verb agreement > 15 words

- Forgets context in long sentences

- Cannot maintain coherent topic

Compensation strategies (inadequate):

- Increase hidden size → Memory cost

- Reduce sequence length → Lose context

- Curriculum learning → Limited improvement

Gradient Flow Visualization Reveals Bottlenecks

Techniques for visualization:

- Gradient magnitude heatmaps

- Jacobian singular values

- Effective receptive field

- Layer-wise gradient norms

Gradient behavior:

- Gradient cliff: Sharp drop after 10-15 steps

- Dead zones: Positions with ||∇|| < 1e-6

- Asymmetric flow: Later positions dominate

Diagnostic metrics: \[\text{Gradient ratio} = \frac{\|\nabla_{\mathbf{s}_0}\|}{\|\nabla_{\mathbf{s}_T}\|}\]

Typical values:

- Vanilla RNN: 10^(-6) to 10^(-10)

- With gradient clipping: 10^(-4) to 10^(-6)

- LSTM: 10^(-1) to 10^(-3)

Interpretation: Information cannot flow backward beyond the gradient horizon

Exploding Gradients and Solutions

Gradients Explode When Spectral Radius Exceeds Unity

\[\left\|\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}}\right\| = \|\text{diag}(f'(\mathbf{z}_t)) \cdot \mathbf{W}_{ss}\| > 1\]

When this happens:

- Large weight initialization: \(\|\mathbf{W}_{ss}\| > 1\)

- ReLU with positive feedback: No saturation to limit growth

- Adversarial parameter combinations: Gradients explode despite proper initialization

Explosion characteristics:

- Sudden onset (fine → explosion in 1 step)

- Numerical overflow: NaN/Inf values

- Loss spikes to infinity

- Training completely fails

Measured explosion rates:

- \(\|\mathbf{J}\| = 1.1\): Explodes after ~70 steps

- \(\|\mathbf{J}\| = 1.5\): Explodes after ~15 steps

- \(\|\mathbf{J}\| = 2.0\): Explodes after ~8 steps

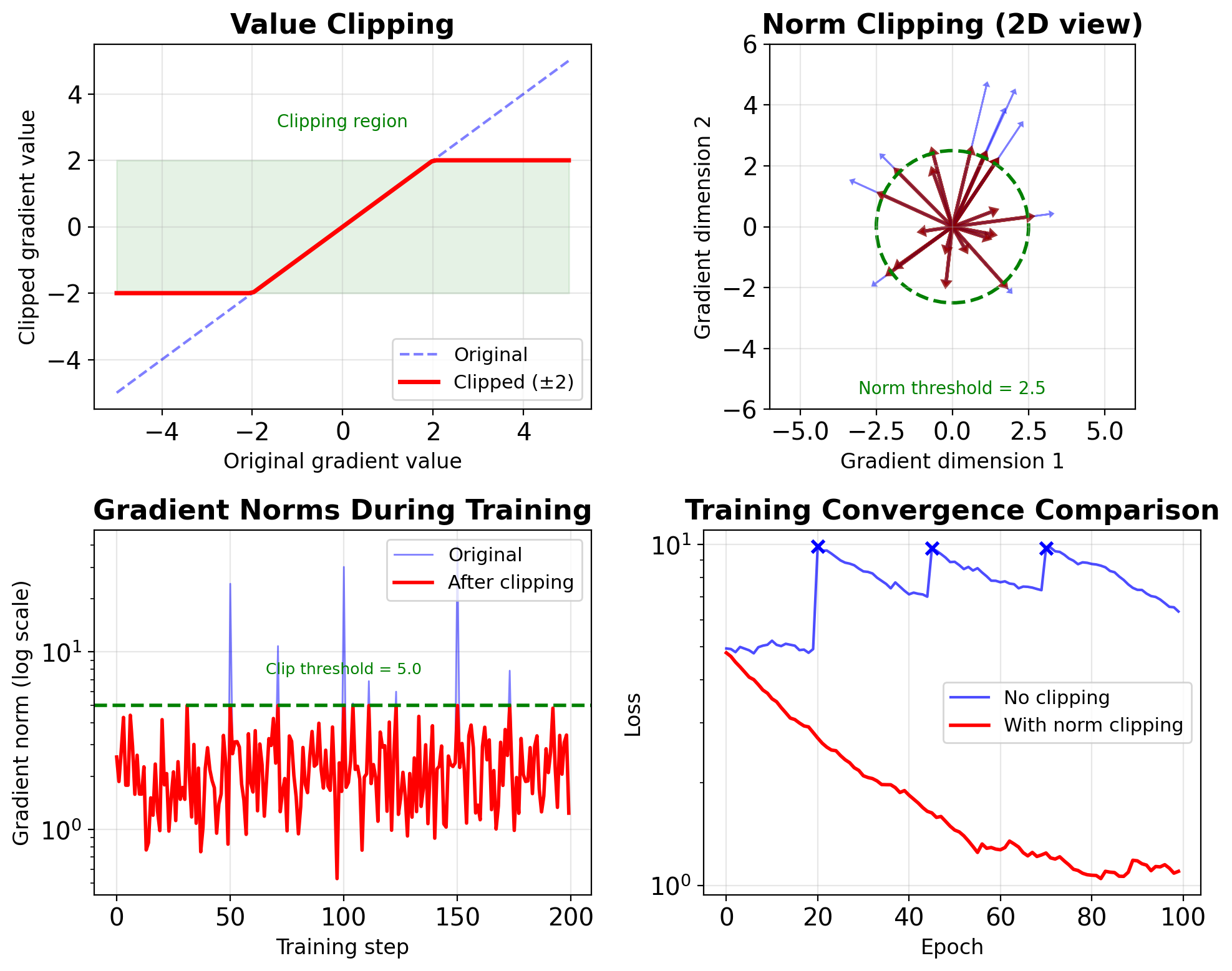

Two Standard Methods for Gradient Clipping

1. Value clipping (element-wise):

2. Norm clipping (preserve direction):

def clip_grad_norm(grads, max_norm):

total_norm = torch.sqrt(

sum(g.norm()**2 for g in grads))

clip_coef = max_norm / (total_norm + 1e-6)

clip_coef = min(clip_coef, 1.0)

for g in grads:

g.mul_(clip_coef)

return total_normComparison:

- Value: Simple, can distort direction

- Norm: Preserves direction, standard for RNNs

- Adaptive: Threshold adjusts during training

Norm Clipping Rescales Gradient Direction

Basic norm clipping:

Complete training loop:

def train_step(model, data, target, clip_val=5.0):

optimizer.zero_grad()

output = model(data)

loss = criterion(output, target)

loss.backward()

# Clip gradients

total_norm = torch.nn.utils.clip_grad_norm_(

model.parameters(), clip_val)

# Monitor gradient explosion

if total_norm > clip_val:

print(f"Clipped: {total_norm:.2f} -> {clip_val}")

optimizer.step()

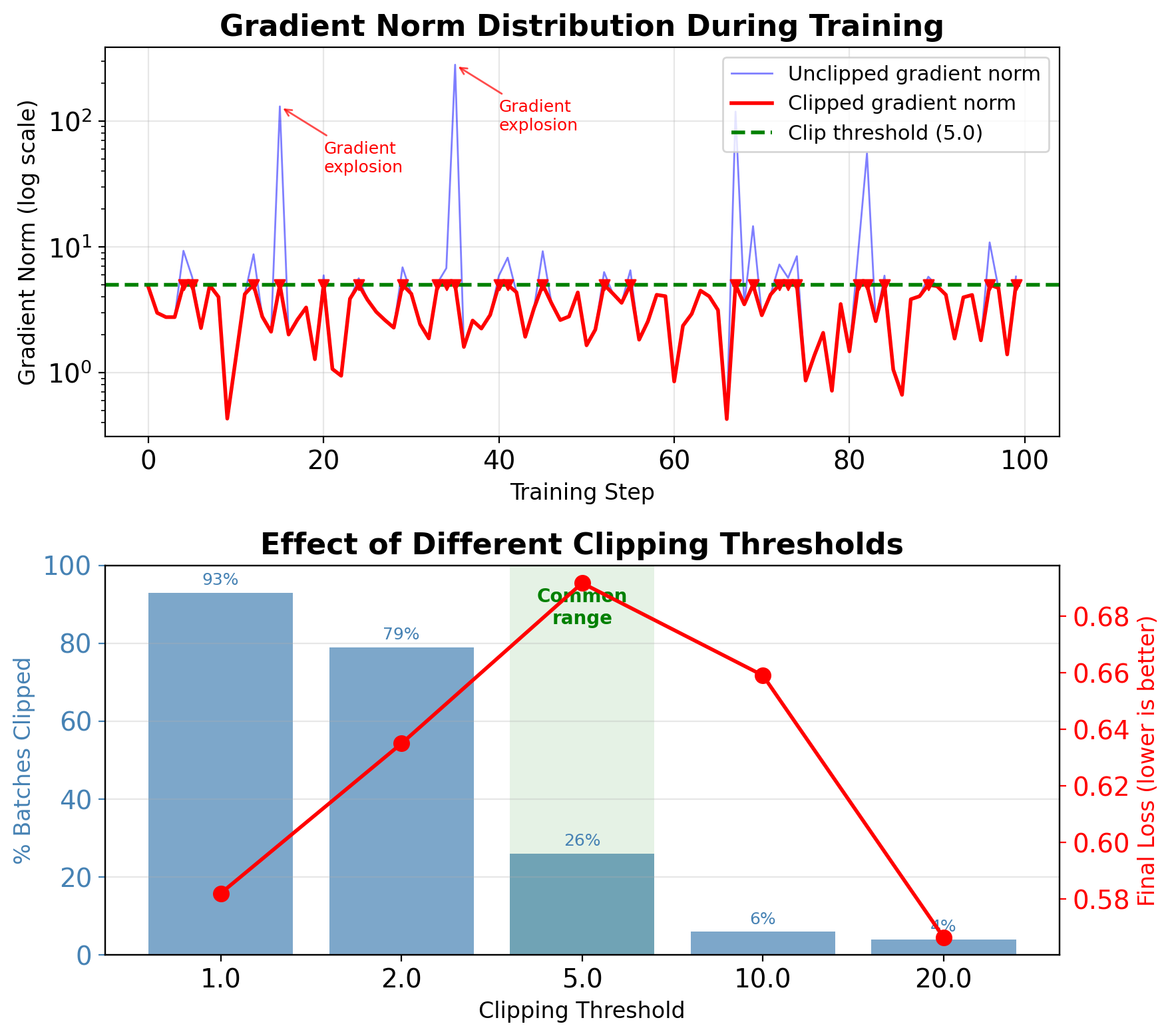

return loss.item(), total_norm.item()Adaptive clipping:

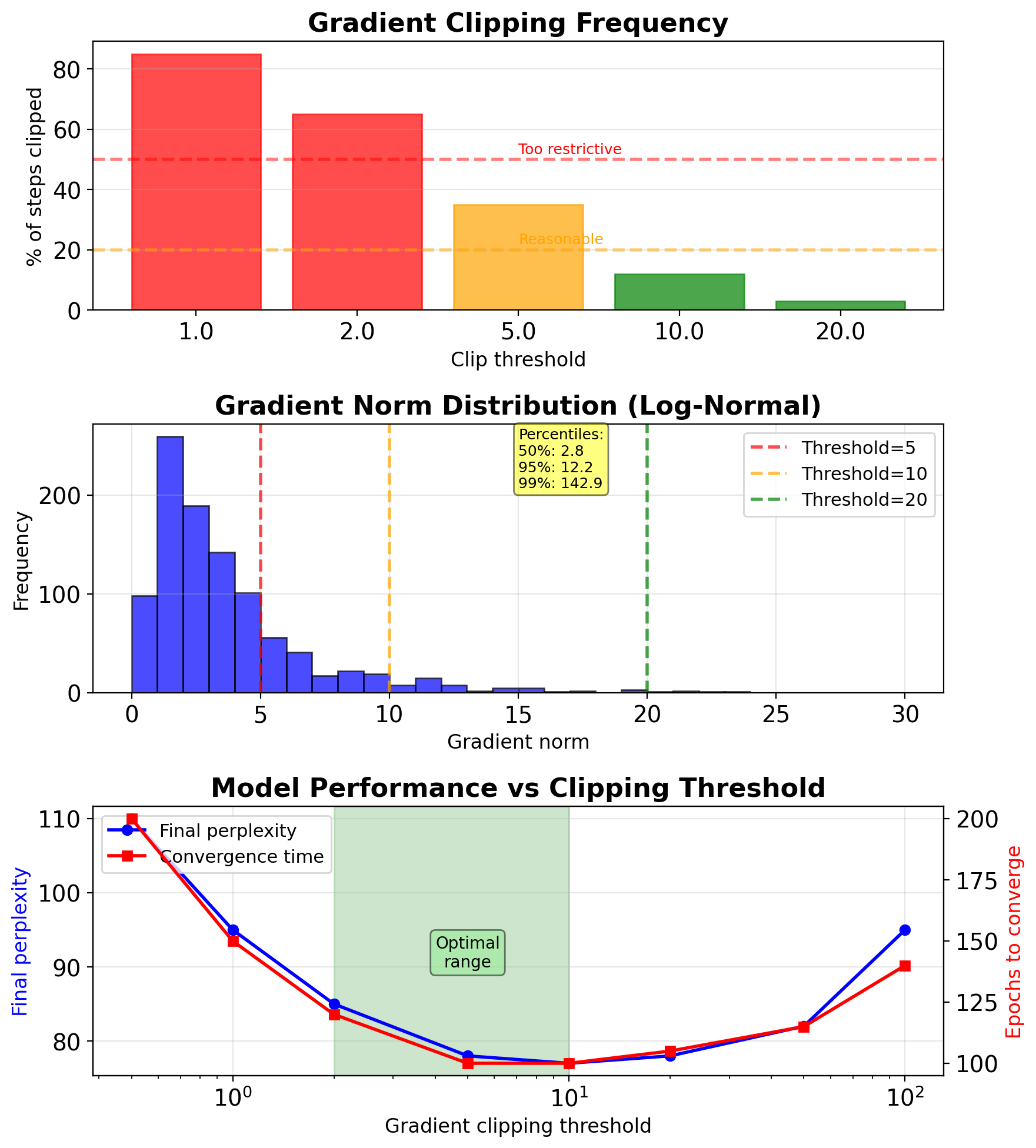

Clipping Threshold Requires Empirical Tuning

Empirical guidelines:

- Start with 5.0-10.0 for most tasks

- Language models: 1.0-5.0

- Sequence-to-sequence: 5.0-10.0

- RL tasks: 0.5-1.0 (more sensitive)

Monitoring strategy:

# Track clipping statistics

clip_stats = {

'total_steps': 0,

'clipped_steps': 0,

'max_norm_seen': 0,

'avg_norm': 0

}

# Log percentage clipped

if total_norm > max_norm:

clip_stats['clipped_steps'] += 1Warning signs:

50% steps clipped → threshold too low

- <1% steps clipped → threshold too high

- Sudden spike in clipping → check learning rate

Adaptive strategies:

- Percentile-based: Set to 95th percentile

- Moving average: 3× running average

- Schedule: Decrease over training

RNN Initialization Prevents Early Divergence

1. Orthogonal initialization:

def orthogonal_init(weight):

nn.init.orthogonal_(weight)

# Ensure spectral radius < 1

with torch.no_grad():

weight *= 0.952. Identity initialization (IRNN):

3. Spectral normalization:

def spectral_normalize(weight, target=0.95):

with torch.no_grad():

s = torch.svd(weight).S[0]

weight *= target / s- Orthogonal: Preserves gradient norm initially

- Identity: Simple dynamics, easier debugging

- Spectral: Direct control of largest eigenvalue

Empirical results (Penn Treebank):

- Random init: 60% training failures

- Orthogonal: 5% failures

- Identity: 10% failures

Layer Normalization Stabilizes Hidden State Magnitude

Standard RNN with LayerNorm:

class LayerNormRNN(nn.Module):

def __init__(self, input_size, hidden_size):

super().__init__()

self.rnn = nn.RNN(input_size, hidden_size)

self.ln = nn.LayerNorm(hidden_size)

def forward(self, x, h0=None):

# Apply LayerNorm to hidden states

output, hn = self.rnn(x, h0)

output = self.ln(output)

return output, hnLayer-wise normalization:

# Normalize pre-activation

z = W_ih @ x + W_hh @ h

z_norm = layer_norm(z) # Before activation

h_new = tanh(z_norm)Benefits:

- Stabilizes hidden state magnitudes

- Reduces gradient variance

- Allows higher learning rates

- BUT: Doesn’t solve vanishing gradient problem

Measured improvements:

- Gradient variance: 10× reduction

- Effective memory: 15→25 steps (limited gain)

- Training speed: 2-3× faster convergence

Part 4: LSTM Architecture

Gate Mechanisms

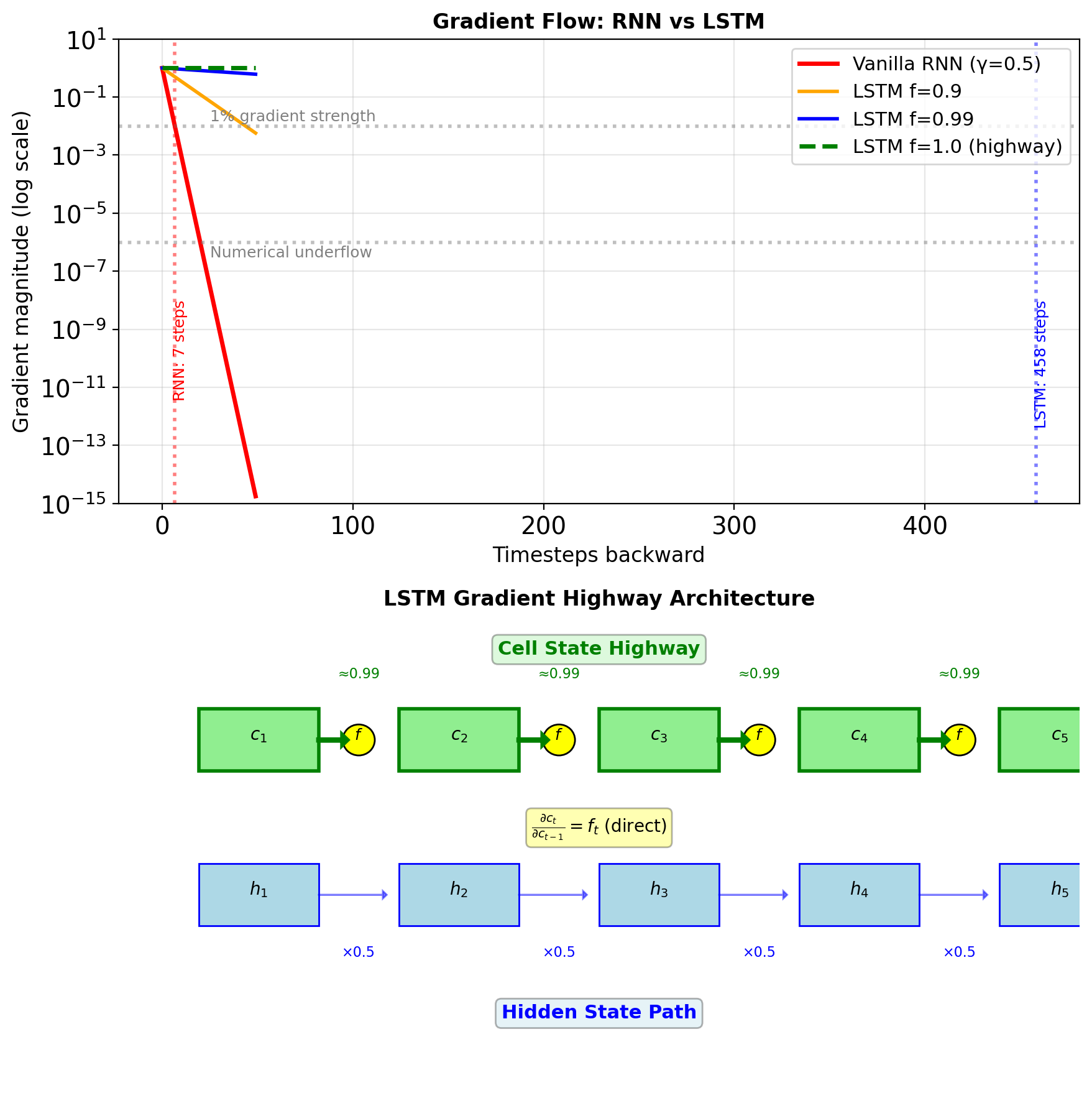

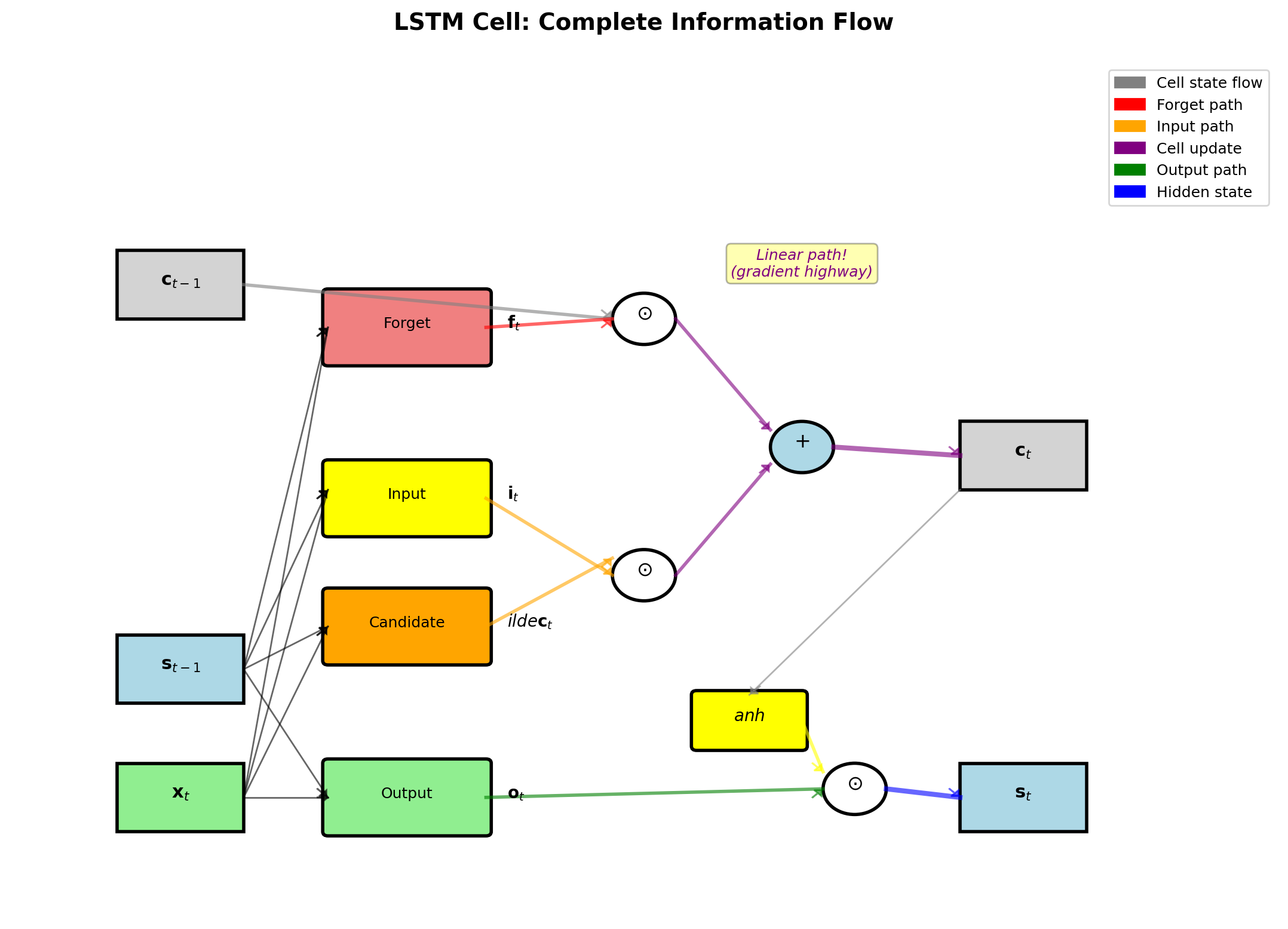

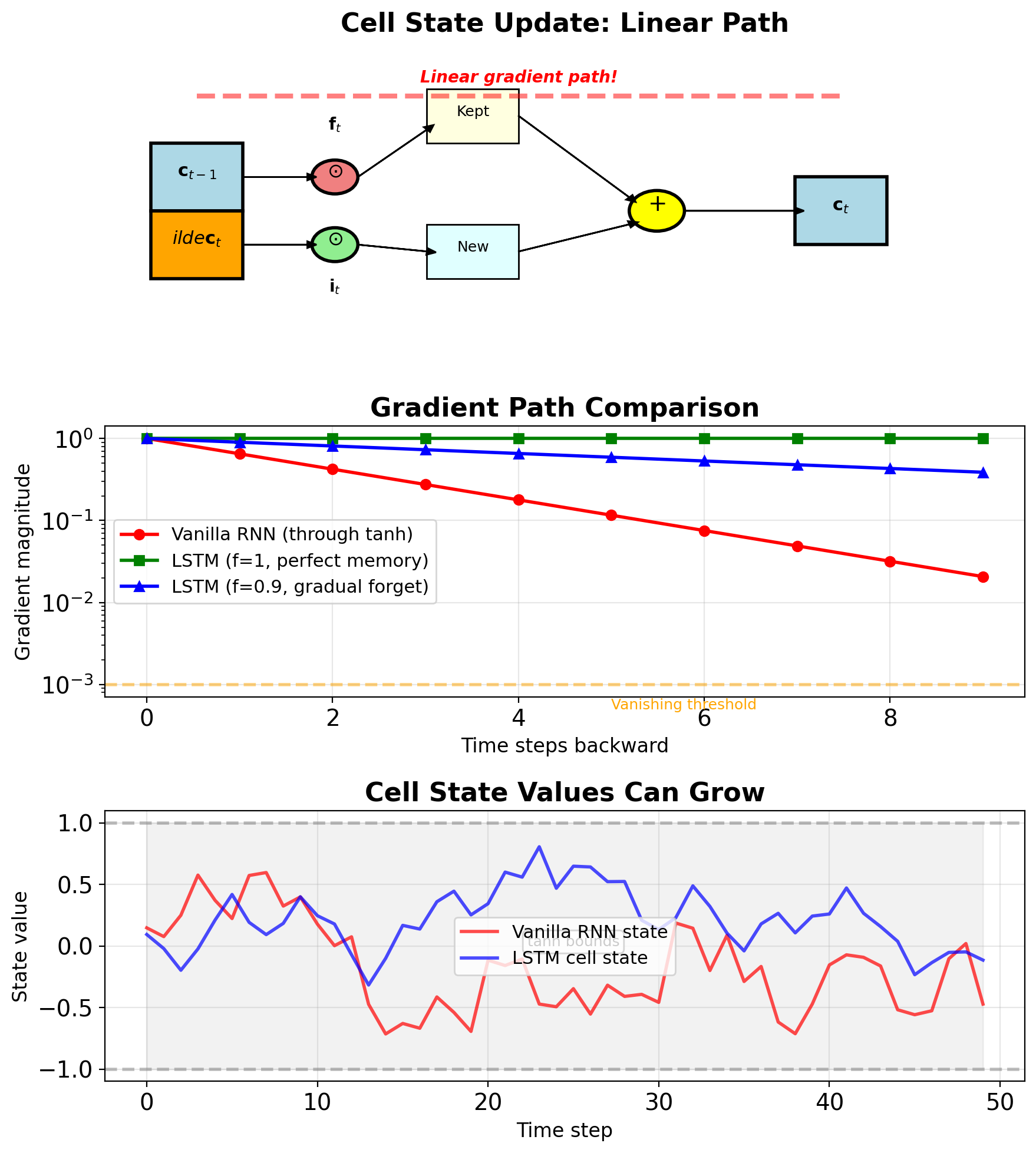

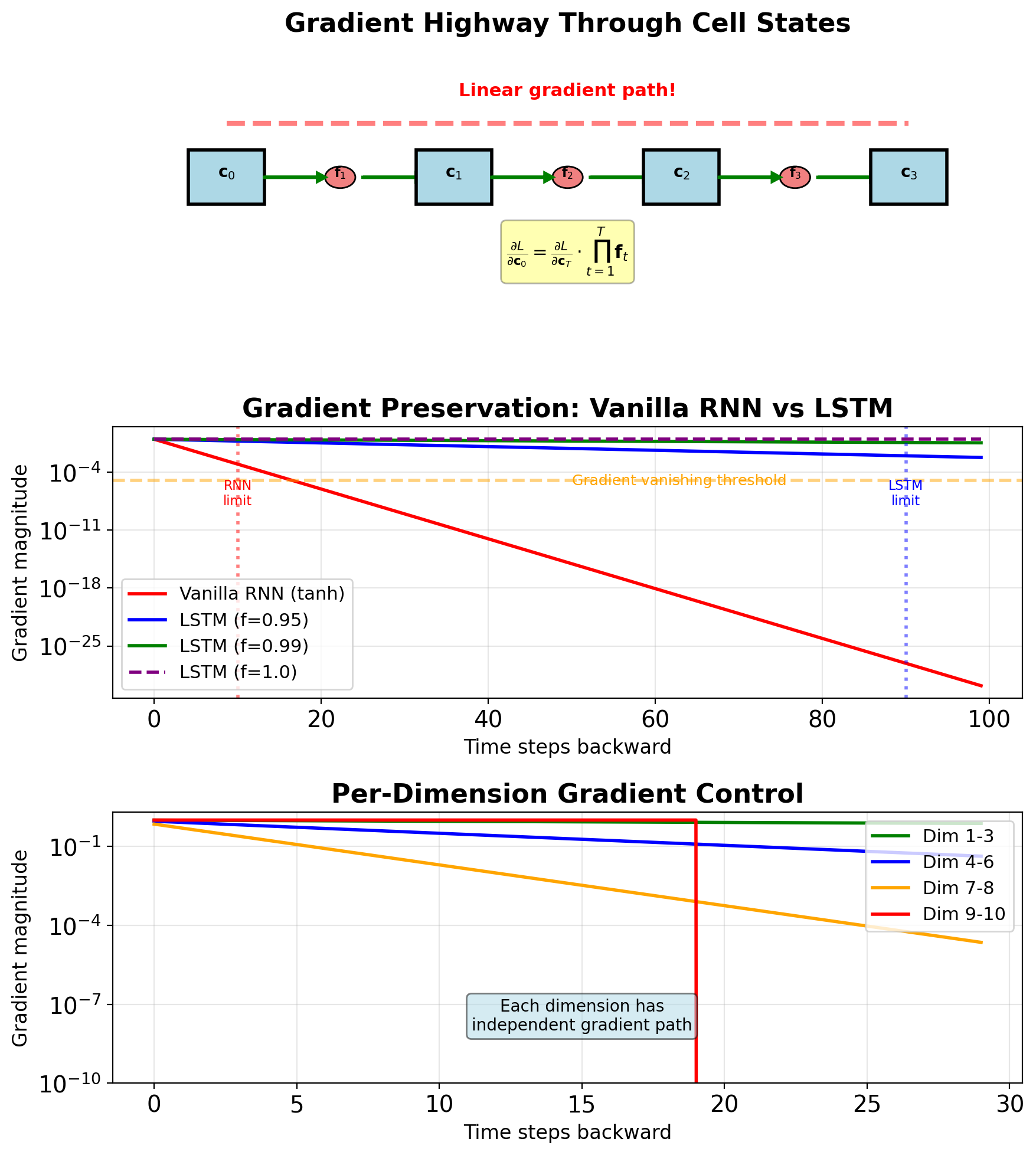

Gates Create Gradient Highways Through Time

\[\frac{\partial L}{\partial \mathbf{s}_0} = \prod_{t=1}^T \underbrace{\|\mathbf{W}\| \cdot |\tanh'|}_{\leq 0.5} \approx 0.5^T\]

After 20 steps: \(0.5^{20} \approx 10^{-6}\) (vanished!)

LSTM’s solution: Create bypass paths

Cell state gradient: \[\frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}} = \mathbf{f}_t + \text{other terms}\]

When \(\mathbf{f}_t \approx 1\): Linear path through time!

Gates control gradient flow:

- \(\mathbf{f}_t = 0.9\): Gradient decays as \(0.9^T\)

- \(\mathbf{f}_t = 0.99\): Gradient decays as \(0.99^T\)

- \(\mathbf{f}_t = 1.0\): No decay (highway)

Practical impact:

- Vanilla RNN: 10-20 step memory

- LSTM: 100+ step memory

- Difference: \(0.5^{100}\) vs \(0.99^{100}\) = \(10^{-30}\) vs \(0.37\)

LSTM Gates Preserve Gradient Magnitude

\[\frac{\partial L}{\partial \mathbf{c}_{t-1}} = \frac{\partial L}{\partial \mathbf{c}_t} \cdot \frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}}\]

Cell state update equation: \[\mathbf{c}_t = \mathbf{f}_t \odot \mathbf{c}_{t-1} + \mathbf{i}_t \odot \tilde{\mathbf{c}}_t\]

Taking derivative w.r.t. \(\mathbf{c}_{t-1}\): \[\frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}} = \text{diag}(\mathbf{f}_t) + \underbrace{\frac{\partial \mathbf{f}_t}{\partial \mathbf{c}_{t-1}} \odot \mathbf{c}_{t-1}}_{\text{second-order terms}}\]

When \(\mathbf{f}_t \approx 1\): \[\frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}} \approx \mathbf{I}\]

Compare gradient products over T steps:

| Architecture | Gradient Product | T=50 Result |

|---|---|---|

| Vanilla RNN | \(\prod_{t=1}^T (\mathbf{W}^T \text{diag}(\sigma'))\) | \(\approx 10^{-30}\) |

| LSTM (f=0.9) | \(\prod_{t=1}^T \text{diag}(\mathbf{f}_t)\) | \(\approx 0.005\) |

| LSTM (f=0.99) | \(\prod_{t=1}^T \text{diag}(\mathbf{f}_t)\) | \(\approx 0.6\) |

Initialization trick:

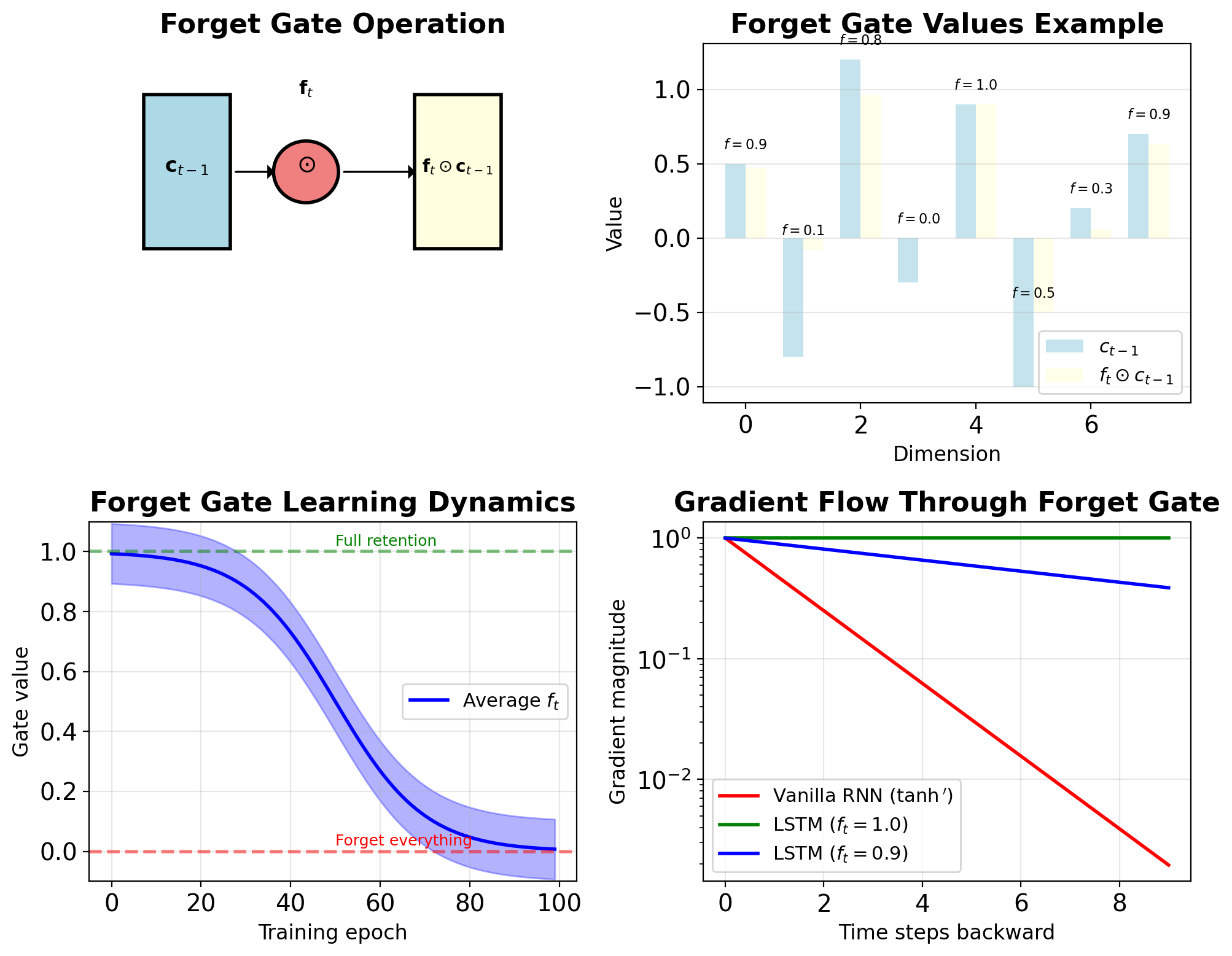

Forget Gate Controls Memory Retention

\[\mathbf{f}_t = \sigma(\mathbf{W}_f[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_f)\]

where \([\mathbf{s}_{t-1}, \mathbf{x}_t]\) denotes concatenation

Dimensions:

- Input: \([\mathbf{s}_{t-1}, \mathbf{x}_t] \in \mathbb{R}^{H+D}\)

- Weights: \(\mathbf{W}_f \in \mathbb{R}^{H \times (H+D)}\)

- Output: \(\mathbf{f}_t \in \mathbb{R}^H\), with \(f_{t,i} \in [0,1]\)

Element-wise gating: \[\mathbf{c}_t^{\text{kept}} = \mathbf{f}_t \odot \mathbf{c}_{t-1}\]

Interpretation:

- \(f_{t,i} = 1\): Keep dimension \(i\) completely

- \(f_{t,i} = 0\): Forget dimension \(i\) completely

- \(f_{t,i} = 0.5\): Partial retention

Learning dynamics:

- Initialize bias \(b_f\) positive (≈1-2)

- Default behavior: complete state retention

- Learns what to forget during training

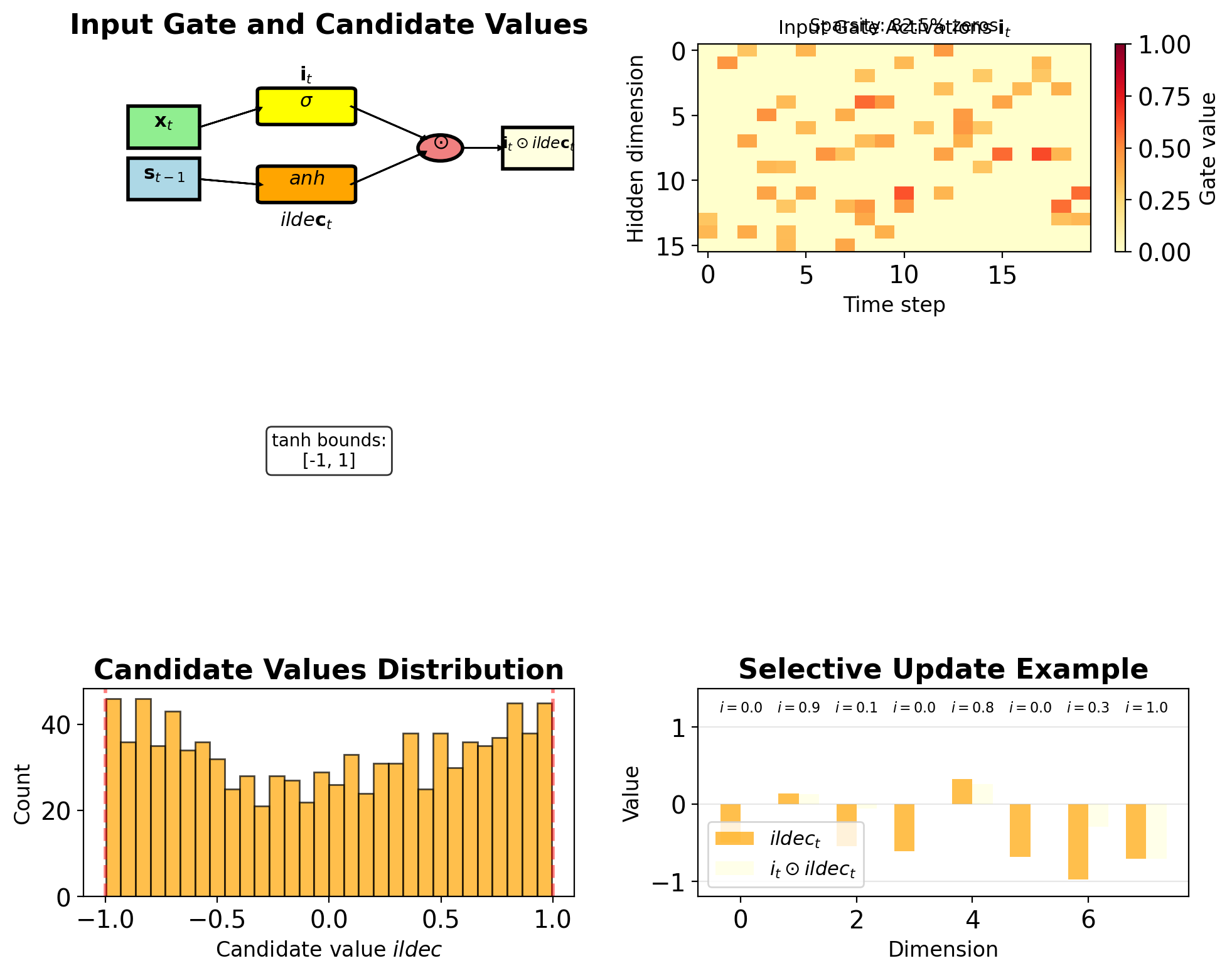

Input Gate Modulates New Information

Input gate (what to store): \[\mathbf{i}_t = \sigma(\mathbf{W}_i[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_i)\]

Candidate values (potential new content): \[\tilde{\mathbf{c}}_t = \tanh(\mathbf{W}_c[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_c)\]

Combined update: \[\mathbf{c}_t^{\text{new}} = \mathbf{i}_t \odot \tilde{\mathbf{c}}_t\]

Complete cell update: \[\mathbf{c}_t = \mathbf{f}_t \odot \mathbf{c}_{t-1} + \mathbf{i}_t \odot \tilde{\mathbf{c}}_t\]

Design choices:

- Input gate: Controls amount of update

- Candidate: Provides content of update

- Separation allows selective writing

Learned behavior (task-dependent):

- Input gates can learn selective update patterns

- Candidates represent potential state changes

- Gate values emerge from training objectives

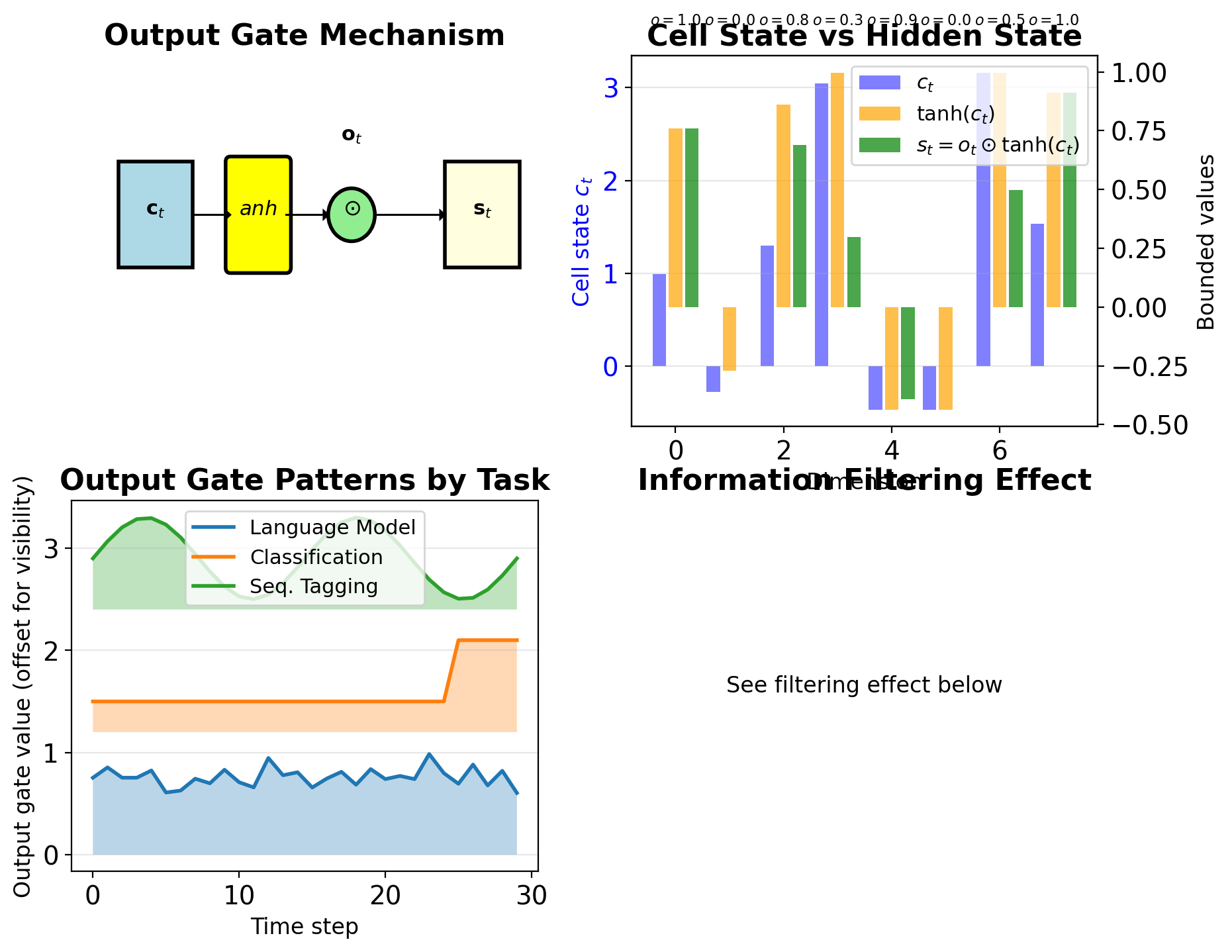

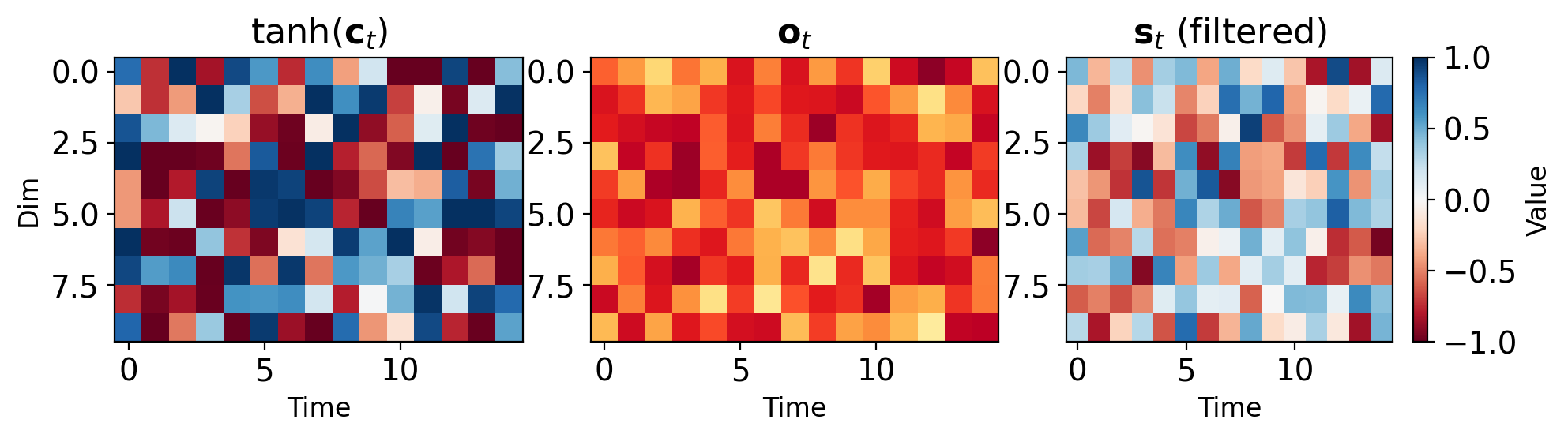

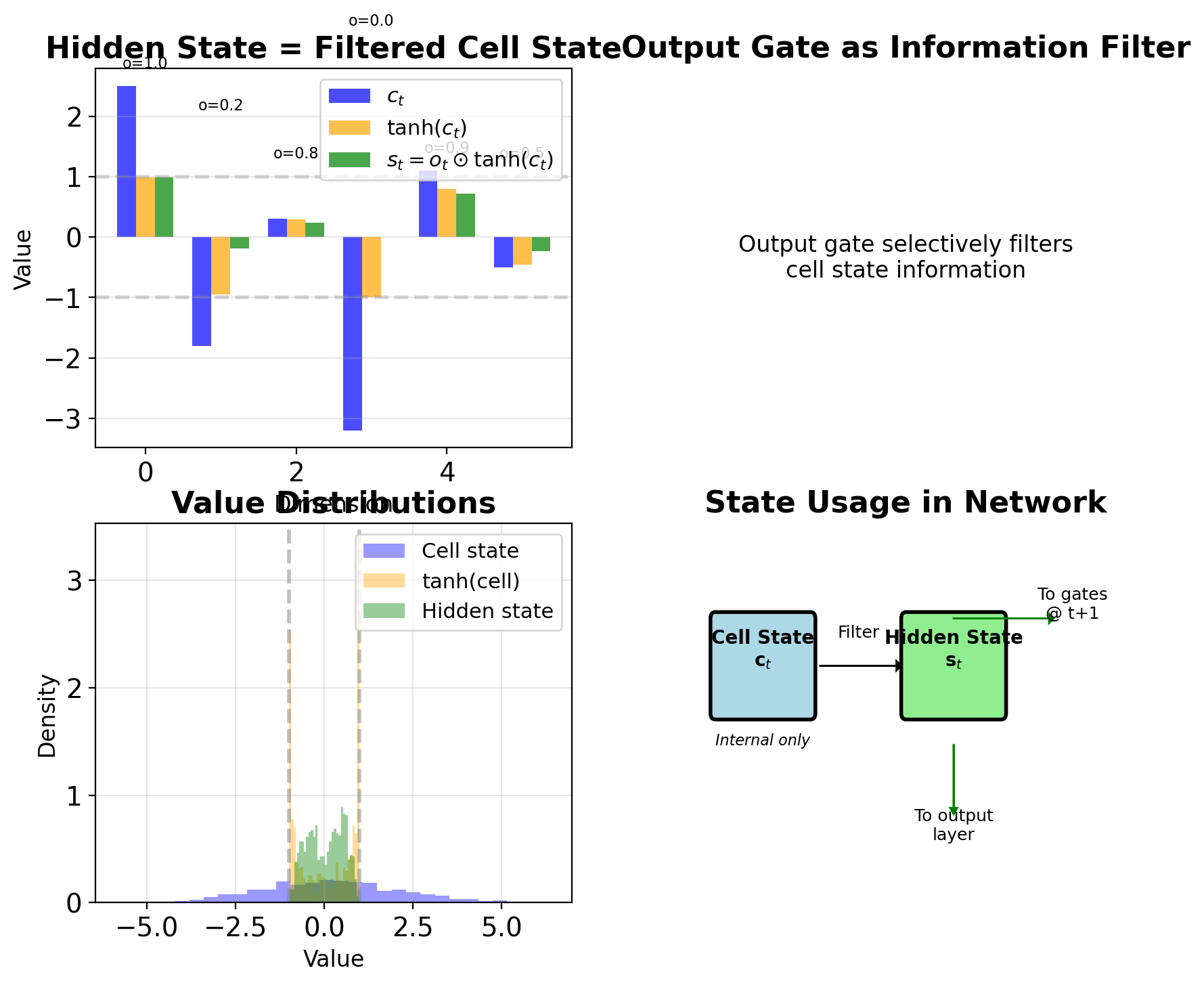

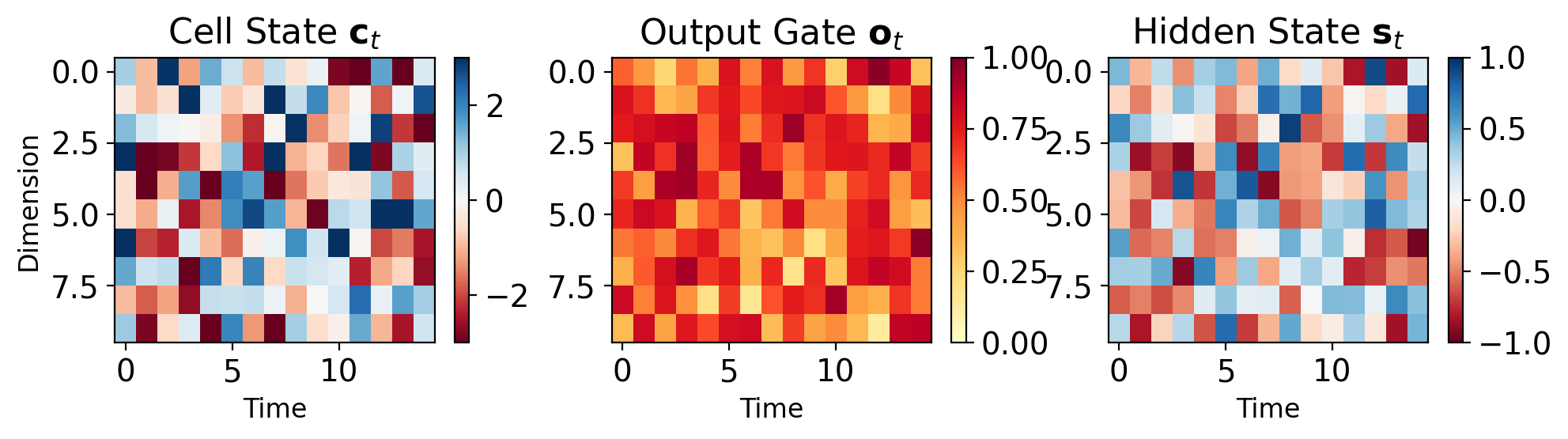

Output Gate Filters Cell State

\[\mathbf{o}_t = \sigma(\mathbf{W}_o[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_o)\]

Hidden state computation: \[\mathbf{s}_t = \mathbf{o}_t \odot \tanh(\mathbf{c}_t)\]

Design rationale:

- Cell state \(\mathbf{c}_t\) can grow unbounded

- \(\tanh(\mathbf{c}_t)\) bounds to [-1, 1]

- Output gate selects what to expose

Interpretation:

- Cell state: Complete internal memory

- Hidden state: Filtered working memory

- Output gate: Read controller

Task-dependent behavior:

- Language modeling: Often fully open

- Classification: Selective at decision points

- Sequence tagging: Varies by position

Dimension: Both \(\mathbf{o}_t, \mathbf{s}_t \in \mathbb{R}^H\)

LSTM Gates Interact to Control Information Flow

Forget previous: \(\mathbf{f}_t \odot \mathbf{c}_{t-1}\)

Add new info: \(\mathbf{i}_t \odot \tilde{\mathbf{c}}_t\)

Update cell: \(\mathbf{c}_t = \mathbf{f}_t \odot \mathbf{c}_{t-1} + \mathbf{i}_t \odot \tilde{\mathbf{c}}_t\)

Output: \(\mathbf{s}_t = \mathbf{o}_t \odot \tanh(\mathbf{c}_t)\)

Parameter count:

- 4 weight matrices: \(\mathbf{W}_f, \mathbf{W}_i, \mathbf{W}_c, \mathbf{W}_o\)

- Each: \(H \times (H + D)\) dimensions

- Total: \(4H(H + D) + 4H\) parameters

- ~4× vanilla RNN parameters

Computational cost per timestep:

- 4 matrix multiplications

- 3 sigmoid, 2 tanh activations

- 5 element-wise operations

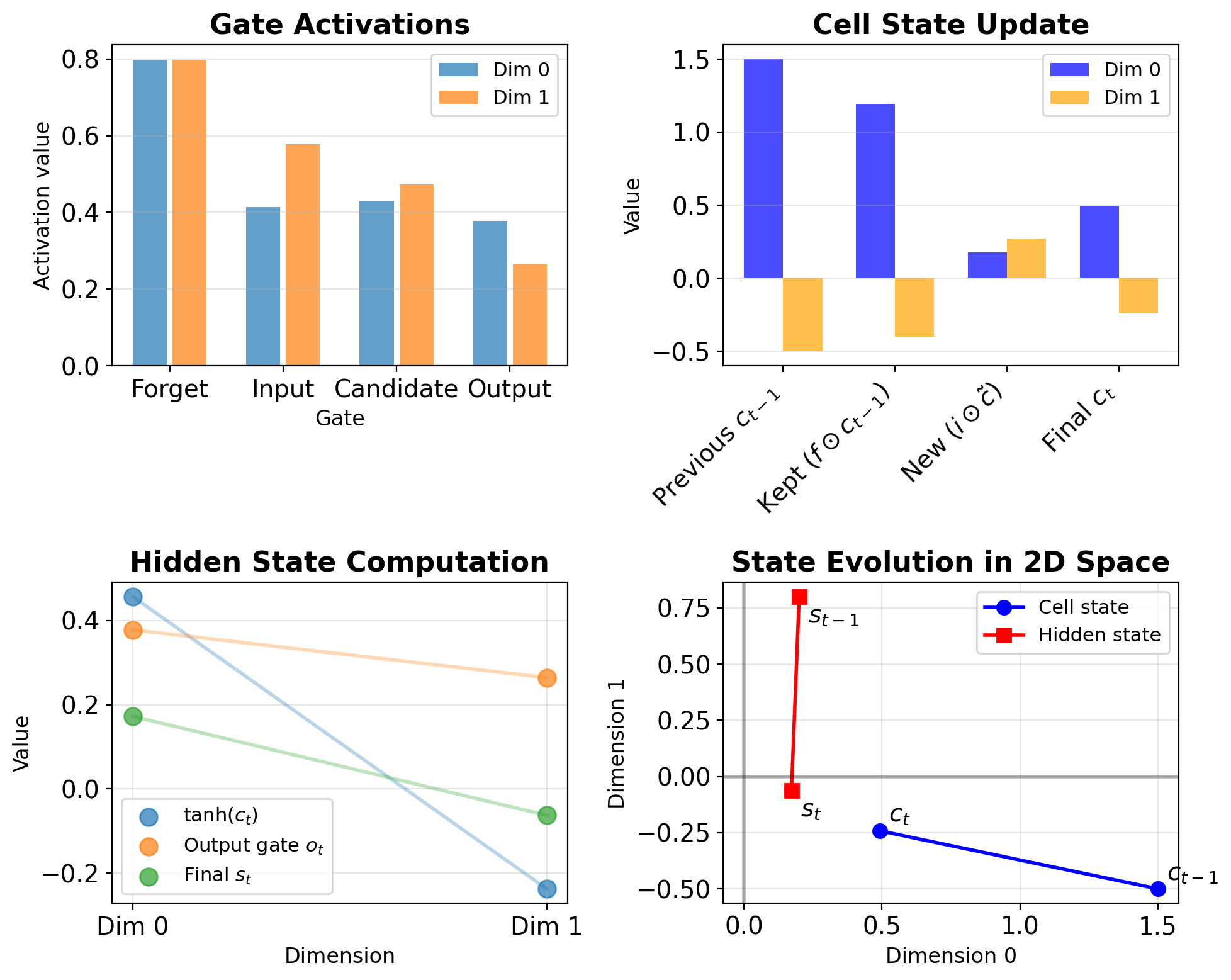

LSTM Operation on 2D Example

Given:

- Hidden size: \(H = 2\)

- Input size: \(D = 2\)

- Current input: \(\mathbf{x}_t = [0.5, -0.3]^T\)

- Previous hidden: \(\mathbf{s}_{t-1} = [0.2, 0.8]^T\)

- Previous cell: \(\mathbf{c}_{t-1} = [1.5, -0.5]^T\)

Step 1: Compute gates (simplified weights shown)

# Concatenate inputs

h_x = concat([s_t_1, x_t]) # [0.2, 0.8, 0.5, -0.3]

# Forget gate

f_t = sigmoid(W_f @ h_x + b_f) # [0.9, 0.1]

# Input gate

i_t = sigmoid(W_i @ h_x + b_i) # [0.2, 0.8]

# Candidate

c_tilde = tanh(W_c @ h_x + b_c) # [0.6, -0.4]

# Output gate

o_t = sigmoid(W_o @ h_x + b_o) # [0.7, 0.5]Step 2: Update cell state

c_t = f_t * c_t_1 + i_t * c_tilde

= [0.9, 0.1] * [1.5, -0.5] + [0.2, 0.8] * [0.6, -0.4]

= [1.35, -0.05] + [0.12, -0.32]

= [1.47, -0.37]Step 3: Compute hidden state

Cell State and Hidden State

LSTM Cell State Update

\[\mathbf{c}_t = \mathbf{f}_t \odot \mathbf{c}_{t-1} + \mathbf{i}_t \odot \tilde{\mathbf{c}}_t\]

Components:

- \(\mathbf{f}_t \odot \mathbf{c}_{t-1}\): What to keep from past

- \(\mathbf{i}_t \odot \tilde{\mathbf{c}}_t\): What to add new

Improved gradient path:

- No activation function between \(\mathbf{c}_{t-1}\) and \(\mathbf{c}_t\)

- Gradient scaled by forget gate: \(\frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}} = \mathbf{f}_t\)

- Better preservation when \(\mathbf{f}_t \approx 1\)

Contrast with vanilla RNN:

- RNN: \(\mathbf{s}_t = \tanh(\mathbf{W}\mathbf{s}_{t-1} + ...)\)

- LSTM: \(\mathbf{c}_t = \mathbf{f}_t \odot \mathbf{c}_{t-1} + ...\)

- RNN forces through tanh every step

- LSTM can pass through unchanged

Cell state can grow unbounded:

- No activation function on \(\mathbf{c}_t\)

- Values can exceed [-1, 1]

- Controlled by output gate when read

Hidden State Provides Filtered View of Cell State

\[\mathbf{s}_t = \mathbf{o}_t \odot \tanh(\mathbf{c}_t)\]

Two-stage process:

- Bound cell state: \(\tanh(\mathbf{c}_t) \in [-1, 1]^H\)

- Filter with output gate: \(\mathbf{o}_t\) selects what to expose

Why this design?

- Cell state preserves raw information

- Hidden state provides normalized output

- Output gate enables selective reading

Dimensions:

- Both \(\mathbf{c}_t, \mathbf{s}_t \in \mathbb{R}^H\)

- Same size, different roles

- Cell: internal memory

- Hidden: external interface

Usage in network:

- \(\mathbf{s}_t\) feeds to next timestep gates

- \(\mathbf{s}_t\) used for output layer

- \(\mathbf{c}_t\) only internal to LSTM

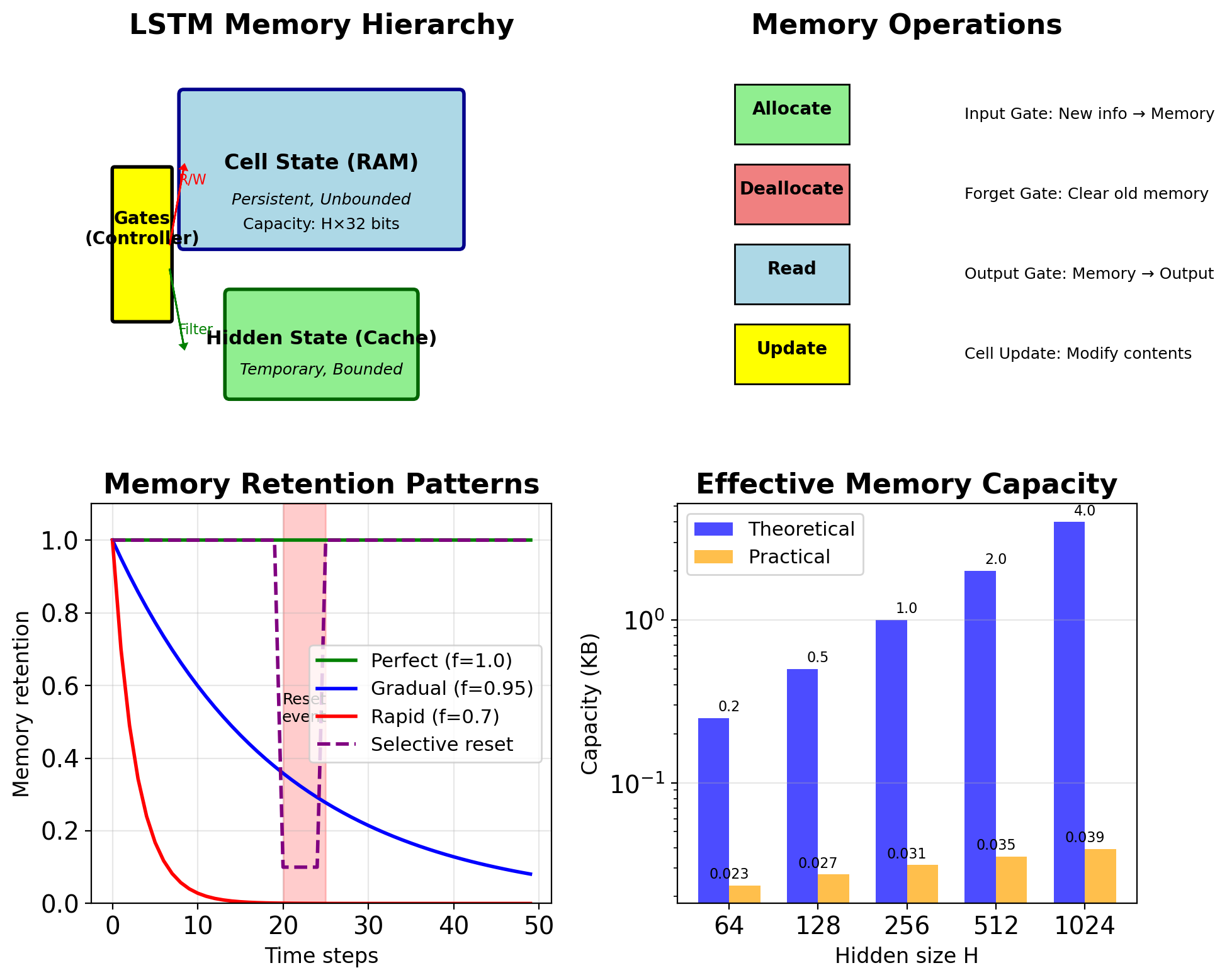

LSTM Cell State Resembles Computer Memory

Cell state = Long-term memory (RAM)

- Persistent storage across time

- Can hold information indefinitely

- Not directly observable

- Large capacity (unbounded values)

Hidden state = Working memory (Cache)

- Temporary, task-focused

- Bounded, normalized values

- Directly observable/usable

- Filtered view of long-term memory

Gates = Memory controller

- Forget gate: Memory deallocation

- Input gate: Memory allocation

- Output gate: Memory read operation

Capacity analysis:

- Theoretical: \(H\) floating point numbers

- Practical: Limited by gate dynamics

- Forget gate ≈ 1: Very long memory

- Forget gate ≈ 0: No memory

Memory access patterns:

- Content-based (gates depend on input)

- Learned, not hand-designed

- Task-specific optimization

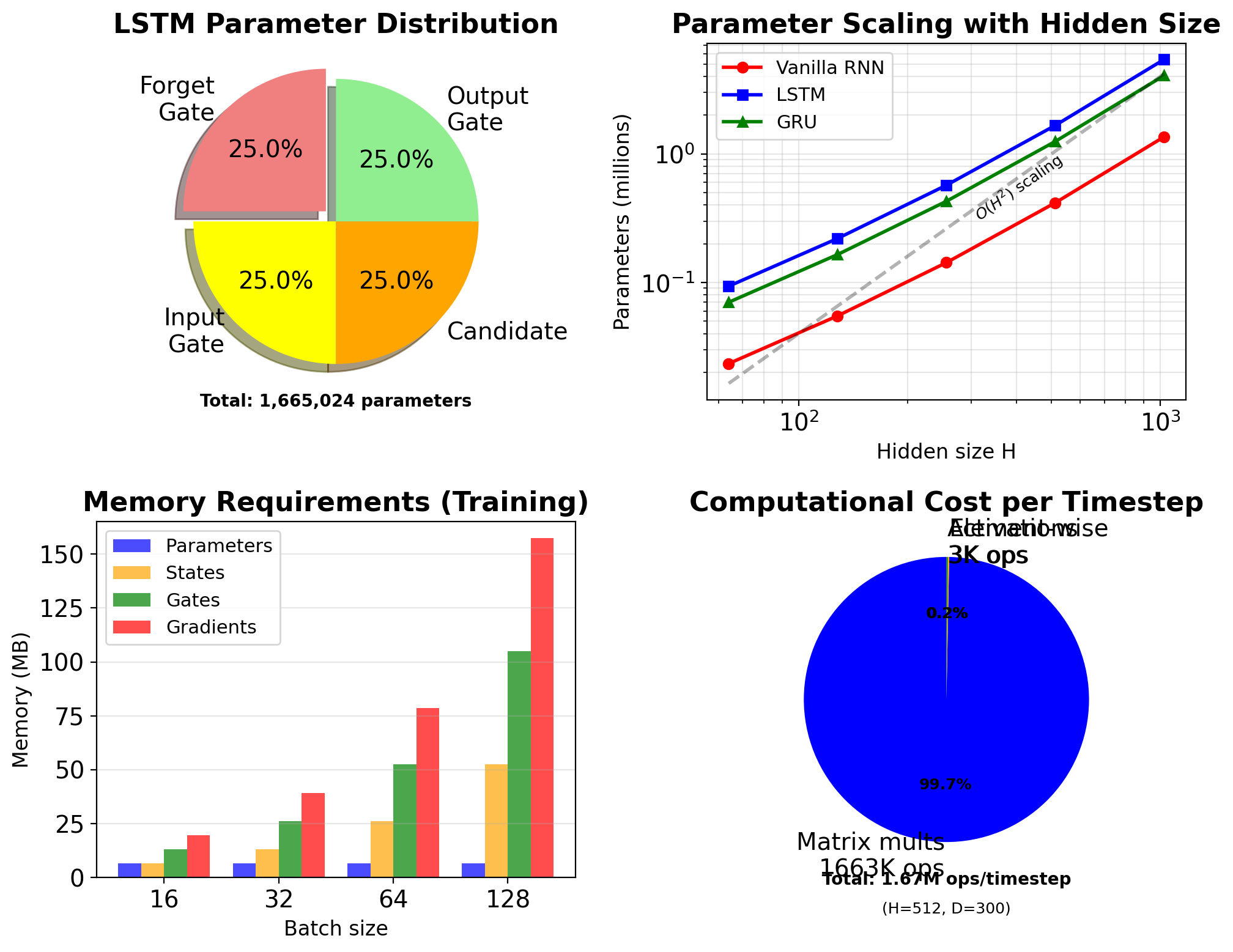

LSTM Has 4× Parameters of Vanilla RNN

Dimensions:

- Input: \(\mathbf{x}_t \in \mathbb{R}^D\)

- Hidden/Cell: \(\mathbf{s}_t, \mathbf{c}_t \in \mathbb{R}^H\)

- Gates: \(\mathbf{f}_t, \mathbf{i}_t, \mathbf{o}_t \in \mathbb{R}^H\)

- Candidate: \(\tilde{\mathbf{c}}_t \in \mathbb{R}^H\)

Weight matrices (4 gates):

- \(\mathbf{W}_f, \mathbf{W}_i, \mathbf{W}_c, \mathbf{W}_o\)

- Each: \(\mathbb{R}^{H \times (H+D)}\)

- Bias: \(\mathbf{b}_f, \mathbf{b}_i, \mathbf{b}_c, \mathbf{b}_o \in \mathbb{R}^H\)

Total parameters: \[N = 4 \times [H \times (H + D) + H] = 4H(H + D + 1)\]

Example (typical NLP):

- \(D = 300\) (embedding), \(H = 512\)

- Params: \(4 \times 512 \times (512 + 300 + 1)\)

- = 1,664,512 ≈ 1.66M parameters

- Vanilla RNN: 416,256 ≈ 0.42M

- LSTM has ~4× parameters

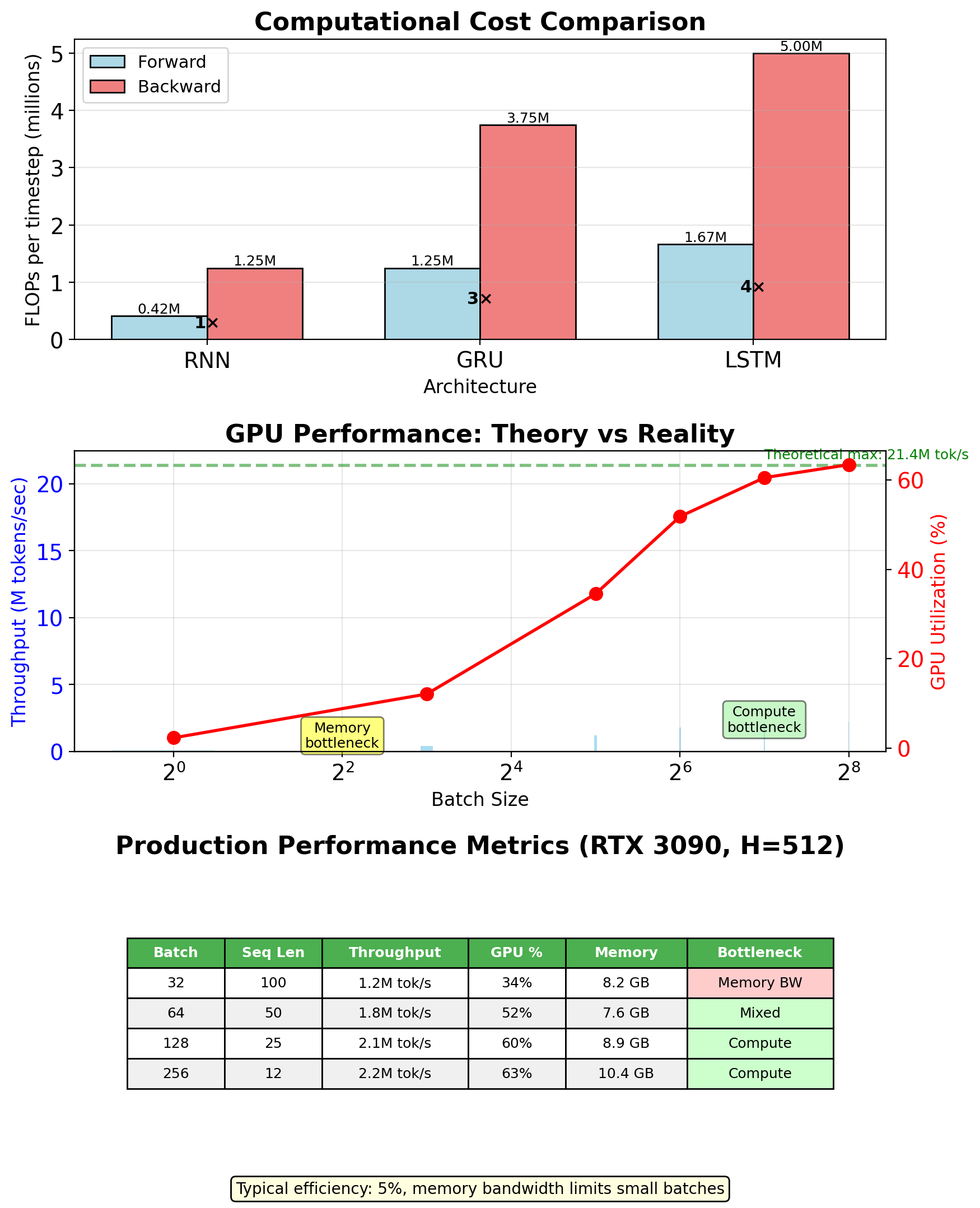

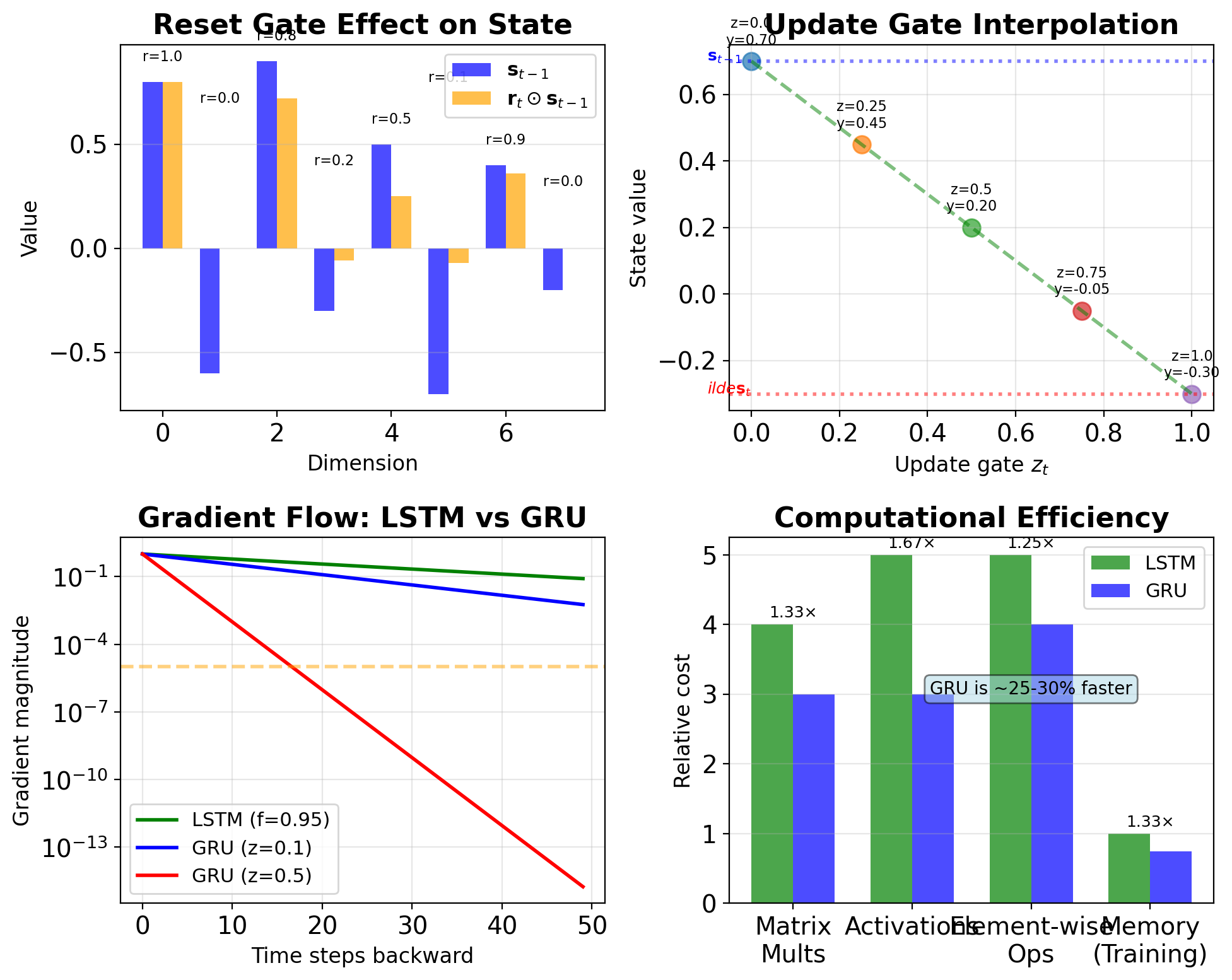

Matrix Operations Dominate LSTM Computation

Gate computations (f, i, o, g): \[4 \times [H(H + D) + H] = 4H(H + D + 1)\]

Cell state update: \[2H \text{ (element-wise ops)}\]

Hidden state: \[H \text{ (element-wise)}\]

Total forward pass: \[\approx 4H(H + D + 1) \text{ FLOPs/timestep}\]

Backward pass (BPTT): \[\approx 12H(H + D + 1) \text{ FLOPs/timestep}\]

Comparison to vanilla RNN:

- RNN: \(H(H + D + 1)\) forward

- GRU: \(3H(H + D + 1)\) forward

- LSTM: \(4H(H + D + 1)\) forward

- 4× computational cost vs RNN

Matrix operations dominate:

- 99.3% matrix multiplications

- 0.7% element-wise operations

- Memory bandwidth is the primary bottleneck

Gradient Flow in LSTM

Cell State Creates Gradient Highway

Cell state gradient: \[\frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}} = \mathbf{f}_t\]

No nonlinearity - element-wise multiplication

Compare to vanilla RNN: \[\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}} = \text{diag}(\tanh'(\mathbf{z}_t)) \cdot \mathbf{W}_{ss}\]

LSTM advantages:

- Linear path: No activation function between cell states

- Adaptive: Forget gate controls gradient flow

- Per-dimension: Each dimension has own gradient path

- Can preserve perfectly: If \(f_t = 1\), gradient passes unchanged

Long-term gradient: \[\frac{\partial \mathbf{c}_T}{\partial \mathbf{c}_0} = \prod_{t=1}^{T} \mathbf{f}_t\]

Element-wise product, not matrix product!

Gradient magnitude:

- If \(\mathbf{f}_t \approx 1\): Gradient preserved

- If \(\mathbf{f}_t \approx 0.9\): \(0.9^{100} \approx 10^{-5}\) (still vanishes)

- But much better than vanilla RNN!

Forget Gates Learn to Preserve Gradients

Initialization strategy:

- Bias \(b_f\) initialized to 1-2

- Results in initial \(f_t \approx 0.85-0.95\)

- Default: retain state, not discard

Learning progression:

Early training: High forget gates

- Preserve all information

- Allow gradient flow

Mid training: Selective forgetting

- Identify irrelevant information

- Task-specific patterns emerge

Late training: Refined gating

- Dimension-specific strategies

- Optimal memory-computation trade-off

Gradient contribution: \[\frac{\partial L}{\partial \mathbf{f}_t} = \frac{\partial L}{\partial \mathbf{c}_t} \odot \mathbf{c}_{t-1}\]

Gradient signal:

- Large gradient → high retention priority

- Small gradient → safe to forget

LSTMs Maintain Gradient Magnitude Better Than RNNs

Vanilla RNN gradient product: \[\prod_{t=k}^{T} \|\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}}\| \leq \prod_{t=k}^{T} \gamma_{\text{tanh}} \cdot \|\mathbf{W}_{ss}\|\]

where \(\gamma_{\text{tanh}} \leq 1\) (≈ 0.5 in practice)

LSTM gradient product: \[\prod_{t=k}^{T} \|\frac{\partial \mathbf{c}_t}{\partial \mathbf{c}_{t-1}}\| = \prod_{t=k}^{T} \|\mathbf{f}_t\|\]

where \(\mathbf{f}_t \in [0,1]^H\) (0.9-0.95 in practice)

Comparing gradient flow:

- No weight matrix in LSTM path

- No repeated nonlinearity

- Adaptive per timestep

- Per-dimension control

Empirical measurements (Penn Treebank):

- Vanilla RNN: Gradient < 1e-5 after 10-15 steps

- LSTM: Gradient > 1e-5 up to 100+ steps

- 10× improvement in gradient preservation

LSTMs Extend Effective Memory Horizon

Definition: Distance where gradient > threshold \[\text{Horizon} = \max\{T : \|\frac{\partial L}{\partial \mathbf{s}_0}\| > \epsilon\}\]

\(\epsilon = 10^{-5}\) or \(10^{-6}\)

Analytical approximation:

- Vanilla RNN: \(T_{\text{eff}} \approx \frac{\log \epsilon}{\log \gamma}\)

- LSTM: \(T_{\text{eff}} \approx \frac{\log \epsilon}{\log \bar{f}}\)

where \(\bar{f}\) is average forget gate

Measured horizons (various tasks):

| Model | Horizon | Task |

|---|---|---|

| Vanilla RNN | 10-20 | Most tasks |

| LSTM (default) | 100-200 | Language modeling |

| LSTM (tuned) | 200-500 | Music generation |

| GRU | 80-150 | Translation |

Still has limits:

- Not infinite memory

- Degrades with depth

- Task-dependent effectiveness

LSTM Variants

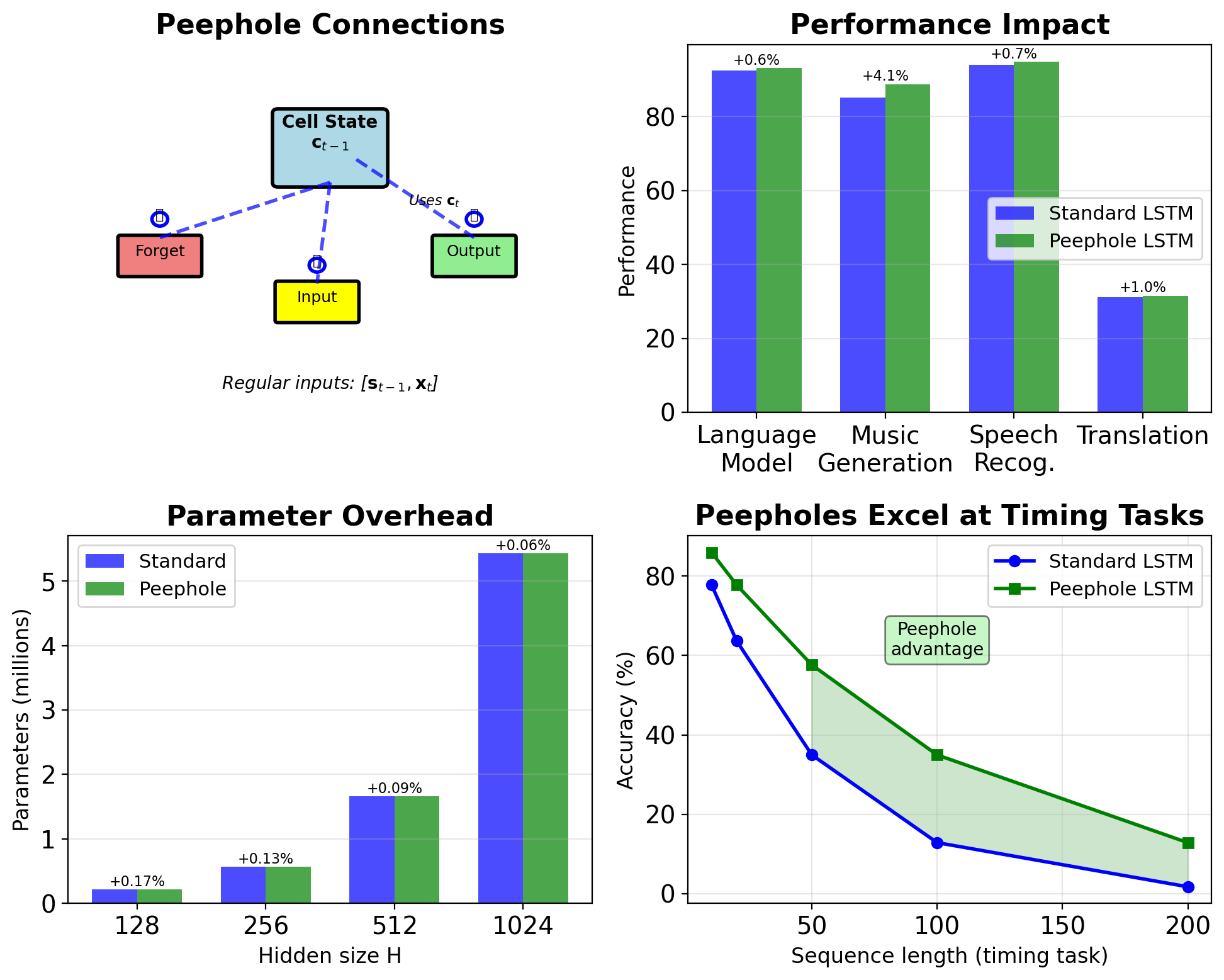

Peephole Connections Let Gates See Cell State

Standard LSTM gates: \[\mathbf{f}_t = \sigma(\mathbf{W}_f[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_f)\]

Peephole LSTM gates: \[\mathbf{f}_t = \sigma(\mathbf{W}_f[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{P}_f \odot \mathbf{c}_{t-1} + \mathbf{b}_f)\]

All three gates get peepholes:

- Forget: uses \(\mathbf{c}_{t-1}\)

- Input: uses \(\mathbf{c}_{t-1}\)

- Output: uses \(\mathbf{c}_t\) (after update!)

Peephole weights:

- \(\mathbf{P}_f, \mathbf{P}_i, \mathbf{P}_o \in \mathbb{R}^H\)

- Diagonal matrices (element-wise)

- Adds 3H parameters total

Motivation:

- Gates see actual memory content

- Better for precise timing tasks

- Helps with counting/rhythm

Performance:

- Minor improvement (1-2%) on most tasks

- Improved performance on music/timing tasks

- Added complexity without commensurate gain

GRU Simplifies LSTM to Two Gates

GRU equations: \[\mathbf{z}_t = \sigma(\mathbf{W}_z[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_z)\] \[\mathbf{r}_t = \sigma(\mathbf{W}_r[\mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b}_r)\] \[\tilde{\mathbf{s}}_t = \tanh(\mathbf{W}[\mathbf{r}_t \odot \mathbf{s}_{t-1}, \mathbf{x}_t] + \mathbf{b})\] \[\mathbf{s}_t = (1-\mathbf{z}_t) \odot \mathbf{s}_{t-1} + \mathbf{z}_t \odot \tilde{\mathbf{s}}_t\]

Differences from LSTM:

- No separate cell state (c_t)

- Two gates instead of three

- Coupled forget/input via update gate z

- Reset gate modulates previous hidden state

Gate roles:

- Update gate z: How much to update (combines forget/input)

- Reset gate r: How much past to use for candidate

Advantages:

- 25% fewer parameters than LSTM

- Faster training/inference

- Often comparable performance

- Simpler to implement

GRU Combines Cell and Hidden States

Reset gate mechanics:

- Controls access to previous state

- \(\mathbf{r}_t \odot \mathbf{s}_{t-1}\) before candidate

- Allows model to “forget” irrelevant past

- Different from LSTM forget gate

Update gate as interpolation: \[\mathbf{s}_t = (1-\mathbf{z}_t) \odot \mathbf{s}_{t-1} + \mathbf{z}_t \odot \tilde{\mathbf{s}}_t\]

This is a convex combination:

- \(\mathbf{z}_t = 0\): Keep all previous

- \(\mathbf{z}_t = 1\): Replace with new

- \(\mathbf{z}_t = 0.5\): Average

Gradient flow in GRU: \[\frac{\partial \mathbf{s}_t}{\partial \mathbf{s}_{t-1}} = (1-\mathbf{z}_t) + \text{other terms}\]

Linear path when \(\mathbf{z}_t\) is small!

Design philosophy:

- Fewer parameters → faster

- Coupled gates → automatic balance

- Single state → simpler dynamics

Part 5: RNNs in Practice

Gradient Clipping Prevents Training Divergence

Norm clipping (standard approach): \[\mathbf{g}_{\text{clipped}} = \begin{cases} \mathbf{g} & \text{if } \|\mathbf{g}\|_2 \leq \theta \\ \theta \cdot \frac{\mathbf{g}}{\|\mathbf{g}\|_2} & \text{if } \|\mathbf{g}\|_2 > \theta \end{cases}\]

Implementation:

Starting points (tune based on gradient statistics):

- Small models: 1-5

- Seq2seq models: 5-10

- Deep networks: 10-25

Monitoring gradient statistics:

- Track percentage of batches clipped

- If >50% clipped: increase threshold

- If <1% clipped: consider decreasing for stability

Comparison with CNNs/Transformers:

- CNNs: Optional, rarely needed

- Transformers: Helpful but not required

- RNNs: Required - will diverge without it

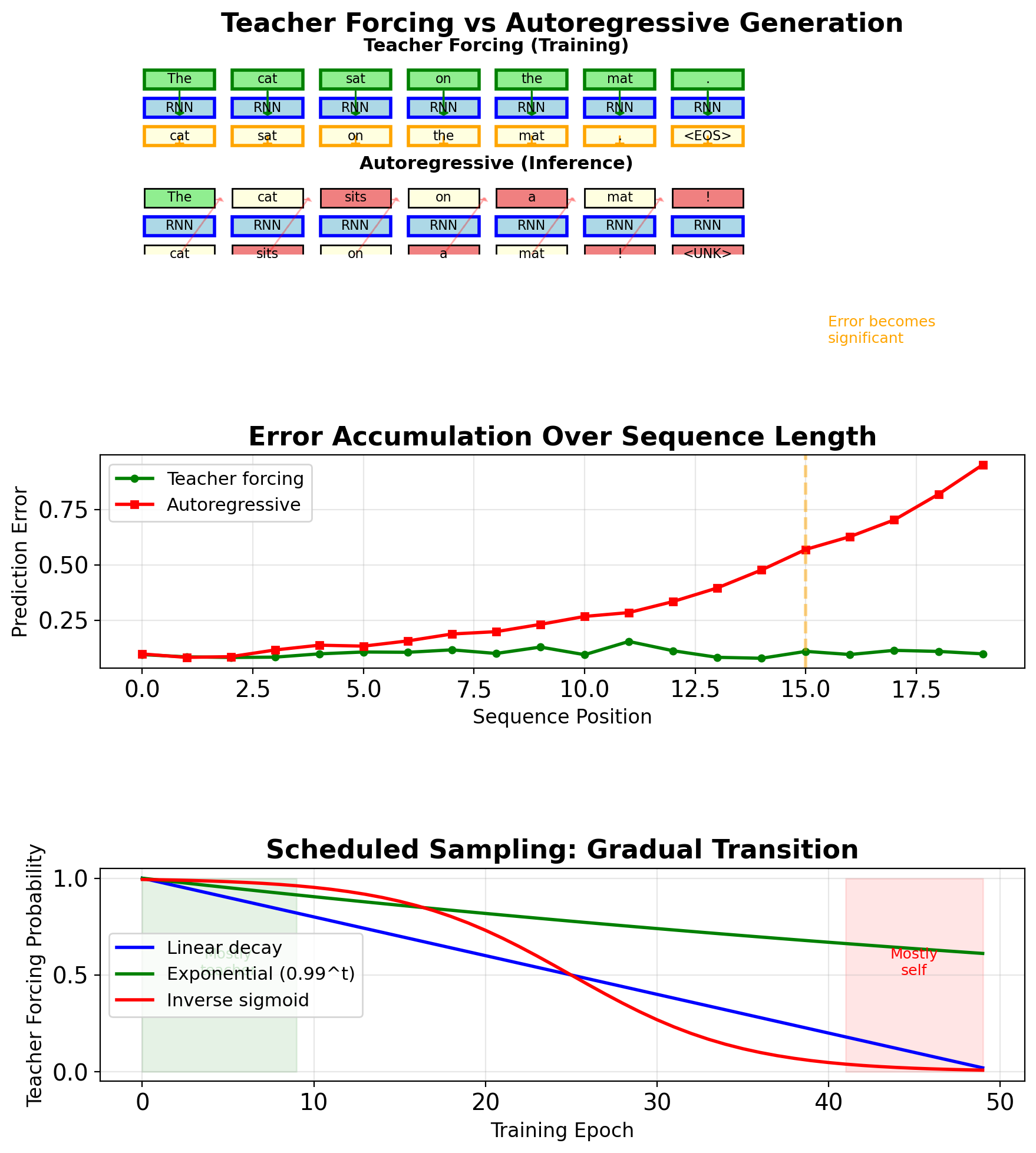

Teacher Forcing Creates Train-Test Mismatch

Training (Teacher Forcing):

- Input at t: ground truth \(y_{t-1}\)

- Model never sees its own errors

- Stable, fast convergence

- Loss: \(\mathcal{L} = \sum_t \ell(f(y_{t-1}), y_t)\)

Inference (Autoregressive):

- Input at t: prediction \(\hat{y}_{t-1}\)

- Errors compound over sequence

- No ground truth available

- Output: \(\hat{y}_t = f(\hat{y}_{t-1})\)

Exposure bias accumulation:

- Step 1: ε error

- Step 2: ε + δε error

- Step T: O(εT) error

Scheduled sampling solution:

- Probability of teacher forcing: \(p = k^i\)

- k = 0.95-0.99, i = epoch

- Gradually expose model to own predictions

- Trade-off: stability vs robustness

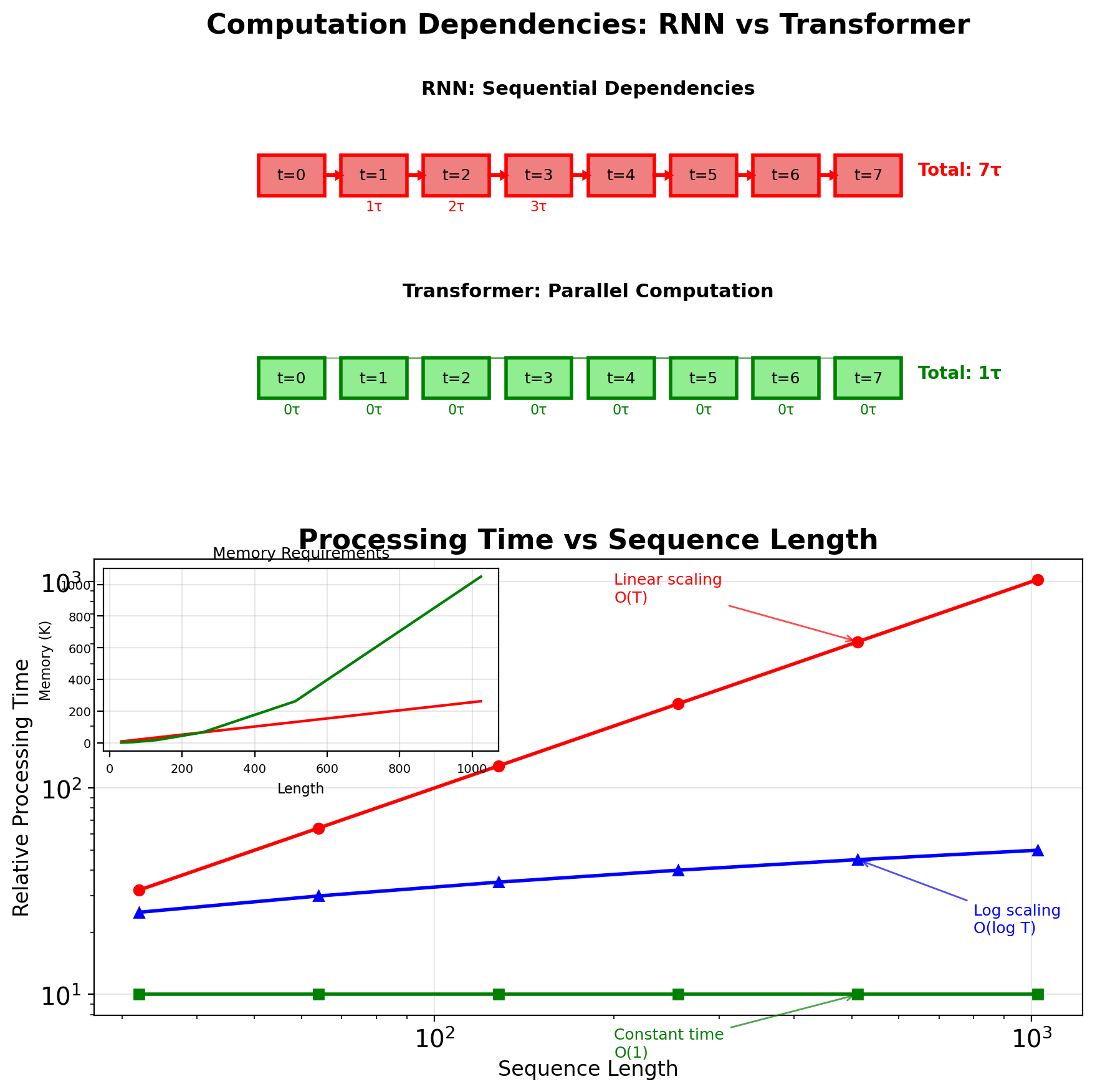

RNNs Cannot Parallelize Across Time Steps

Sequential dependency: \[\mathbf{h}_t = f(\mathbf{h}_{t-1}, \mathbf{x}_t)\]

Cannot compute \(\mathbf{h}_t\) without \(\mathbf{h}_{t-1}\)

Computational complexity:

- Per step: \(O(H^2)\) for hidden state update

- Total sequence: \(O(T \times H^2)\)

- Cannot parallelize across T